Start with Docker and basic CI/CD pipelines before diving into specialized MLOps tools. Most data scientists stumble when deploying their first model because they skip containerization fundamentals. Spend two weeks learning Docker basics, then practice packaging a simple scikit-learn model into a container you can run anywhere. This single skill eliminates the “it works on my machine” problem that derails countless production deployments.

Focus on one complete model lifecycle rather than collecting certificates. The gap between training models in Jupyter notebooks and running them in production feels enormous because traditional ML education stops at model.fit(). Build an end-to-end project: train a model, version it with DVC or MLflow, containerize it, deploy it to a cloud service, and set up basic monitoring. This hands-on loop teaches you more than a dozen theoretical courses because you’ll encounter real friction points like data drift, API latency, and version conflicts.

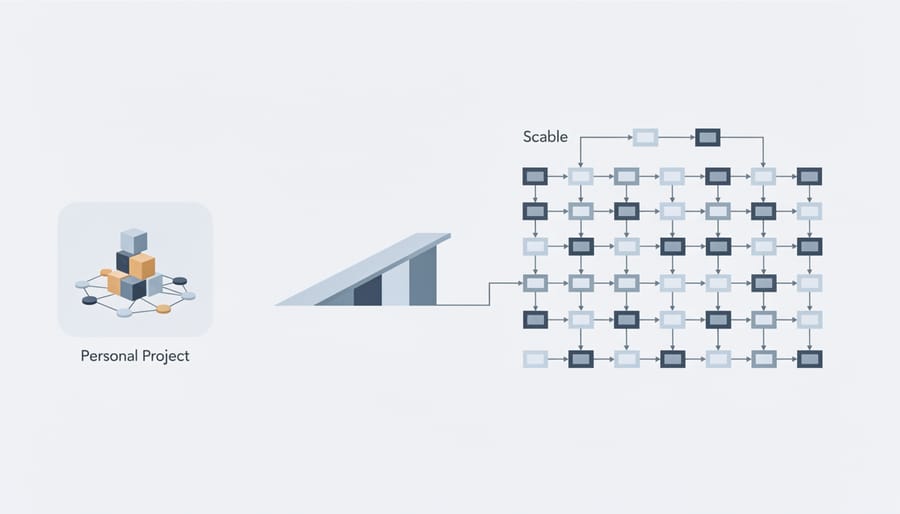

Master the collaboration layer that connects data science to engineering teams. MLOps exists because machine learning models need ongoing care after deployment in ways traditional software does not: their accuracy depends on data that keeps changing. Learn Git for version control, understand REST APIs for model serving, and grasp basic Kubernetes concepts for scaling. These skills bridge the communication gap that frustrates both data scientists and DevOps engineers, and they make you a stronger candidate for ML roles.

Prioritize monitoring and experiment tracking from day one. Set up MLflow or Weights & Biases in your first project to log metrics, parameters, and model versions. Production models degrade silently, and without proper tracking, you won’t know why your accuracy dropped from 94% to 67% last month. This observability mindset separates operational ML practitioners from academic researchers.

What MLOps Really Means (Beyond the Buzzword)

Think of it this way: building ML models in a Jupyter notebook is like cooking a delicious meal at home for your family. You experiment with ingredients, taste as you go, and if something goes wrong, you simply start over. Now imagine you need to serve that same meal to hundreds of customers every hour in a busy restaurant. Suddenly, you need a reliable kitchen system, quality control processes, inventory management, and a team that can consistently reproduce your recipe even when you’re not there.

That’s the gap MLOps bridges.

MLOps, short for Machine Learning Operations, is the practice of taking machine learning models from experimental notebooks and turning them into reliable, scalable systems that work in the real world. While data scientists focus on creating accurate models, MLOps ensures those models actually deliver value in production environments.

Here’s what MLOps really involves:

Deployment and Serving: Getting your model accessible to applications and users through APIs or cloud services. Your model needs to make predictions quickly and reliably, not just once, but thousands of times per day.

Monitoring and Maintenance: Models don’t stay accurate forever. Data changes, user behavior shifts, and what worked last month might fail today. MLOps includes tracking model performance and knowing when something goes wrong.

Version Control and Reproducibility: Just like software code, you need to track which version of your model is running, what data trained it, and be able to roll back if needed.

Automation and Pipelines: Instead of manually running scripts every time you need to retrain a model, MLOps creates automated workflows that handle everything from data processing to deployment.
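To make the monitoring item above more concrete, here is a minimal sketch of a drift check: it compares recent values of one input feature against the values seen at training time and flags a large shift in the mean. The function name `mean_shift_alert`, the threshold of 3 standard errors, and the sample numbers are all assumptions chosen for illustration; production systems usually rely on dedicated drift tests and tooling.

```python
import statistics


def mean_shift_alert(baseline, recent, threshold=3.0):
    """Return True when the recent mean drifts far from the training-time mean.

    The shift is measured in standard errors of the recent sample's mean,
    using the baseline's standard deviation. The threshold is an assumed
    default, not a universal rule.
    """
    base_mean = statistics.mean(baseline)
    base_std = statistics.stdev(baseline)
    standard_error = base_std / len(recent) ** 0.5
    shift = abs(statistics.mean(recent) - base_mean) / standard_error
    return shift > threshold


# Hypothetical feature values: house sizes in square metres.
training_sizes = [80, 95, 100, 105, 110, 120, 90, 100]
similar_batch = [85, 100, 110, 95]
shifted_batch = [150, 160, 170, 155]

print(mean_shift_alert(training_sizes, similar_batch))  # False
print(mean_shift_alert(training_sizes, shifted_batch))  # True
```

A check like this could run on a schedule against each day's logged inputs, so a shift shows up as an alert rather than as a slow, unexplained drop in accuracy.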

The bottom line? MLOps transforms machine learning from a science experiment into a sustainable business tool. It’s the difference between saying “my model works on my laptop” and “my model is helping our company make better decisions every single day.”

The Three Pillars You Need to Master

Machine Learning Fundamentals

Before diving into MLOps, you need a solid foundation in machine learning basics. Think of it as learning to cook before managing a restaurant kitchen. Understanding ML fundamentals means grasping how models learn from data, make predictions, and improve over time.

Start with the core concepts: supervised learning (where models learn from labeled examples, like teaching a child with flashcards), unsupervised learning (finding patterns without guidance), and the training process itself. You’ll need to understand why you split data into training and testing sets: evaluating on data the model has never seen is how you detect a common pitfall called overfitting, where your model memorizes training data but fails on new information, like a student who only memorizes practice tests.
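The gap between training and testing performance can be seen in a few lines. The sketch below uses only the standard library and a deliberately overfitting-prone model (a 1-nearest-neighbor lookup, chosen here purely for illustration) on synthetic noisy data. The data, the noise rate, and the function names are assumptions, not part of any particular course or library.

```python
import random


def nearest_neighbor_predict(train_points, x):
    """Predict the label of the training point closest to x (1-nearest-neighbor)."""
    closest = min(train_points, key=lambda point: abs(point[0] - x))
    return closest[1]


def accuracy(train_points, eval_points):
    """Fraction of eval_points whose label matches the prediction."""
    correct = sum(
        nearest_neighbor_predict(train_points, x) == label
        for x, label in eval_points
    )
    return correct / len(eval_points)


random.seed(0)

# Synthetic data: the true rule is "label is 1 when x > 0.5",
# but 20% of labels are flipped to simulate noise.
data = []
for _ in range(300):
    x = random.random()
    label = int(x > 0.5)
    if random.random() < 0.2:
        label = 1 - label
    data.append((x, label))

train, test = data[:200], data[200:]

train_accuracy = accuracy(train, train)  # the model has seen these points
test_accuracy = accuracy(train, test)    # the model has never seen these

print(f"train accuracy: {train_accuracy:.2f}")
print(f"test accuracy:  {test_accuracy:.2f}")
```

Because the model simply looks up the nearest training point, it scores perfectly on the data it memorized, noise included, and noticeably worse on the held-out set. Without the split, that difference would stay invisible.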

Model evaluation is equally crucial. Metrics like accuracy, precision, and recall help you measure performance, but choosing the wrong metric can lead you astray. For instance, a model predicting rare diseases needs different evaluation criteria than one recommending movies.
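A small worked example shows why the choice of metric matters for rare events. The numbers below are hypothetical: 10 sick patients out of 1,000, scored against a "model" that always predicts healthy. The helper functions are written out by hand to keep the sketch dependency-free; libraries such as scikit-learn provide equivalent metrics.

```python
def accuracy(y_true, y_pred):
    """Share of all predictions that are correct."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def recall(y_true, y_pred):
    """Share of actual positives that the model found."""
    true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    actual_positives = sum(y_true)
    return true_positives / actual_positives


# Hypothetical screening data: 10 sick patients (1) out of 1,000.
y_true = [1] * 10 + [0] * 990
# A useless "model" that always predicts healthy.
y_pred = [0] * 1000

print(accuracy(y_true, y_pred))  # 0.99
print(recall(y_true, y_pred))    # 0.0
```

The model looks excellent by accuracy and finds none of the patients it exists to find, which is why recall (or precision, depending on the cost of false alarms) is usually the better guide for rare-event problems.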

Common pitfalls include using insufficient data, ignoring data quality issues, and selecting inappropriate algorithms for your problem. Many beginners also underestimate the importance of feature engineering—the process of selecting and transforming input variables that actually matter.

Once you’re comfortable building and evaluating models in notebooks, you’ll appreciate why MLOps exists: taking these experimental models and making them work reliably in real-world applications is an entirely different challenge.

Software Engineering Essentials

Before diving into machine learning model deployment, you’ll need a solid foundation in software engineering practices. Think of these skills as the bridge between building models in Jupyter notebooks and running them reliably in production.

Version control with Git is your first essential tool. Imagine working on a model for weeks, making changes, and suddenly something breaks. Without version control, you’re stuck trying to remember what worked before. Git acts like a time machine for your code, tracking every change and letting you collaborate with teammates without overwriting each other’s work. You’ll use platforms like GitHub or GitLab to store your code repositories and manage different versions of your projects.

Testing might seem tedious, but it’s your safety net. In MLOps, you’ll write tests to verify that your data processing functions work correctly, your model loads properly, and your predictions fall within expected ranges. A simple example: if you’re building a price prediction model, a test might check that predicted prices are never negative. These automated checks catch problems before they reach production.
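The price example above might look like the following sketch. `predict_price` is a hypothetical stand-in for a trained model (a fixed linear rule with made-up coefficients), and the input ranges are assumptions; in a real project the test would call your actual prediction function.

```python
def predict_price(square_metres, bedrooms):
    """Hypothetical stand-in for a trained price model (a fixed linear rule)."""
    raw = 1500.0 * square_metres + 10000.0 * bedrooms - 20000.0
    # Clamp at zero so the service never returns a negative price.
    return max(raw, 0.0)


def test_predicted_prices_are_never_negative():
    """Check a range of inputs, including edge cases like a zero-size home."""
    for square_metres in (0, 5, 50, 500):
        for bedrooms in (0, 1, 5):
            price = predict_price(square_metres, bedrooms)
            assert price >= 0, (
                f"negative price for {square_metres} m2, {bedrooms} bedrooms"
            )


test_predicted_prices_are_never_negative()
print("price sanity test passed")
```

Naming the function with a `test_` prefix means a test runner such as pytest can discover it when it lives in a test file, so the same check can later run in a CI pipeline.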

CI/CD, or Continuous Integration and Continuous Deployment, automates the journey from code changes to production. When you push code to your repository, CI/CD pipelines automatically run your tests, build your application, and deploy it if everything passes. This means fewer manual steps, faster iterations, and more confidence in your deployments. Tools like GitHub Actions or Jenkins make this process manageable, even for beginners, turning what once took hours into minutes.

Cloud and Infrastructure Basics

Once you’ve built a machine learning model that works beautifully on your laptop, the next challenge is getting it into the hands of actual users. This is where cloud and infrastructure knowledge becomes essential.

Think of deployment environments as the homes where your models live and work. Your local computer is like a small apartment, perfect for development but not designed to handle thousands of visitors. Cloud platforms like AWS, Google Cloud, or Azure are more like scalable office buildings that can expand or shrink based on demand. Whether your model needs to serve predictions to 10 users or 10,000 users, cloud infrastructure can be configured to scale up or down automatically.

Containers, particularly Docker, are game-changers here. Imagine packing your model along with all its dependencies (Python libraries, specific versions, configuration files) into a sealed box. This container runs identically whether it’s on your laptop, a colleague’s machine, or a cloud server. No more “but it works on my machine” headaches. Containers ensure your model behaves consistently across different environments.

Why does this matter for MLOps? Because models don’t exist in isolation. They need reliable infrastructure to serve predictions quickly, scale during traffic spikes, and stay available 24/7. Understanding these basics helps you bridge the gap between model development and real-world deployment. You don’t need to become a cloud expert immediately, but grasping these fundamentals ensures your models can actually deliver value beyond your development environment.

Your Learning Path: From Zero to Deployment-Ready

Stage 1: Building Your Foundation (Weeks 1-4)

Before diving into machine learning operations, you need solid groundwork. Think of this month as laying the concrete foundation for a house—it might not be the exciting part, but everything else depends on it.

Start with Python fundamentals if you’re rusty or new to programming. You don’t need to become a Python expert, but you should feel comfortable writing functions, working with data structures like lists and dictionaries, and understanding basic object-oriented programming. Free resources like Python.org’s official tutorial or Codecademy’s Python course work well here. Dedicate about two weeks to this, spending an hour daily on hands-on coding exercises.

Next, familiarize yourself with basic machine learning concepts. What’s the difference between supervised and unsupervised learning? How does a model actually learn from data? Andrew Ng’s Machine Learning course on Coursera remains the gold standard introduction, explaining concepts without drowning you in math. Alternatively, Google’s Machine Learning Crash Course offers a faster-paced option.

During weeks three and four, explore version control with Git and GitHub. MLOps revolves around collaboration and tracking changes, making Git essential. Create a GitHub account, practice committing code, and understand branching basics. Think of Git as your safety net—it lets you experiment without fear of breaking things.

This structured learning path might feel slow initially, but mastering these fundamentals prevents frustration later. You’re building muscle memory and confidence that will make advanced MLOps concepts much more approachable.

Stage 2: Getting Hands-On with Tools (Weeks 5-8)

Now that you’ve built your ML foundation, it’s time to roll up your sleeves and get comfortable with the tools that make MLOps possible. Think of this stage as learning to use a carpenter’s toolkit—you need to know what each tool does and when to reach for it.

Start with Git and version control. While you might have used Git for code before, MLOps requires tracking not just your scripts, but also your datasets, model versions, and experiment configurations. Create a simple project where you version control a basic machine learning model, making commits as you adjust hyperparameters. This habit will save you countless headaches later when you need to recreate results or roll back changes.
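One lightweight way to tie a model, its data, and its settings together is a small manifest file that you commit alongside your code. The sketch below is an assumption about how such a manifest could look, not a standard format: it stores SHA-256 hashes of the model and data files plus the hyperparameters. Tools like DVC and MLflow handle this more completely, and the file names here are throwaway placeholders.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def file_sha256(path):
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def write_manifest(model_path, data_path, hyperparameters, manifest_path):
    """Record which model file, data file, and settings belong together."""
    manifest = {
        "model_sha256": file_sha256(model_path),
        "data_sha256": file_sha256(data_path),
        "hyperparameters": hyperparameters,
    }
    Path(manifest_path).write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest


# Demo with throwaway files standing in for a real model and dataset.
with tempfile.TemporaryDirectory() as workdir:
    model_file = Path(workdir) / "model.bin"
    data_file = Path(workdir) / "train.csv"
    model_file.write_bytes(b"pretend these are model weights")
    data_file.write_text("size,price\n100,250000\n")

    manifest = write_manifest(
        model_file, data_file, {"max_depth": 5}, Path(workdir) / "manifest.json"
    )
    print(json.dumps(manifest, indent=2, sort_keys=True))
```

The manifest is small enough to live in Git even when the model and dataset are not, and a changed hash makes it obvious that a result was produced from different inputs.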

Next, tackle Docker. Imagine you’ve built a fantastic model on your laptop, but it won’t run on your colleague’s machine because of different library versions. Docker solves this by packaging your entire environment into a container. Begin with a straightforward exercise: containerize a simple prediction API that serves your machine learning model. Don’t worry if it feels awkward at first—most people find Docker confusing initially.

Finally, dip your toes into cloud platforms. Choose one major provider like AWS, Google Cloud, or Azure and focus on their basic services. Learn how to spin up a virtual machine, store data in cloud storage, and maybe deploy your containerized model. You don’t need to master everything—just understand the fundamentals of running workloads in the cloud.

Throughout these four weeks, build small projects that combine these tools. For example, create a Dockerized model, version it with Git, and deploy it to a cloud instance. These hands-on experiences transform abstract concepts into practical skills you’ll use daily.

Stage 3: Real Deployment Practice (Weeks 9-12)

Now comes the exciting part where theory transforms into reality. During weeks 9-12, you’ll build and deploy actual machine learning models that people can interact with, marking your transition from learner to practitioner.

Start by creating a simple image classification model using a pre-trained network like ResNet or MobileNet. Don’t worry about training from scratch at this stage. Focus instead on wrapping your model with Streamlit, a Python framework that turns data scripts into shareable web apps with minimal code. Within an afternoon, you can have a functioning app where users upload images and receive predictions in real time. This immediate feedback loop is incredibly motivating and helps you understand the user experience side of ML deployment.

Next, level up by building a REST API using FastAPI. Think of this as creating a backend service that other applications can call. A practical project might be a sentiment analysis API that accepts text and returns whether the sentiment is positive, negative, or neutral. FastAPI’s automatic documentation feature means you’ll instantly see how professional APIs are structured, making the learning curve surprisingly gentle.

For your capstone project, deploy one of your models to a cloud platform. AWS SageMaker, Google Cloud Vertex AI (the successor to AI Platform), and Azure Machine Learning all offer free tiers or trial credits that are enough for learning. Follow beginner-friendly tutorials that walk through every click and command. You’ll encounter errors and deployment failures during this phase, and that’s exactly the point. Each troubleshooting session teaches you resilience and problem-solving skills that tutorials alone cannot provide.

By week 12, you’ll have a portfolio of deployed models demonstrating real MLOps capabilities, so your resume shows proven hands-on experience rather than theoretical knowledge alone.

The Best Free Resources That Actually Work

Online Courses and Tutorials

Getting started with MLOps doesn’t require expensive degrees. Several platforms offer excellent free and paid resources tailored for beginners. Coursera’s “Machine Learning Engineering for Production” specialization by Andrew Ng provides a structured introduction to the entire ML lifecycle, from model development to deployment. For those who prefer bite-sized learning, YouTube channels like “Made With ML” and “Abhishek Thakur” break down complex deployment concepts into digestible tutorials with real code examples.

If you’re looking for hands-on practice, interactive online courses on platforms like DataCamp and Udacity offer MLOps-specific bootcamps with practical labs. These let you work with tools like Docker, Kubernetes, and MLflow in simulated environments before applying them to real projects.

For free comprehensive learning paths, check out Google’s MLOps course on their Cloud Skills Boost platform or Amazon’s SageMaker tutorials. These industry-backed resources teach you the exact tools used in production environments, giving you job-ready skills without the hefty price tag.

Documentation and Interactive Platforms

Learning MLOps becomes significantly easier when you tap into quality documentation and hands-on platforms. Start with the official documentation from major cloud providers like AWS SageMaker, Google Cloud Vertex AI (formerly AI Platform), and Azure Machine Learning. These guides offer real-world insights into how MLOps works in production environments, complete with code examples and architectural patterns you’ll actually use.

For practical experience, Kaggle remains an excellent playground. While traditionally known for competitions, Kaggle now hosts datasets and notebooks where you can practice building end-to-end ML pipelines. You’ll find community-shared workflows that demonstrate version control, experiment tracking, and model deployment strategies. MLflow’s documentation is another essential resource, walking you through experiment tracking and model registry concepts with clear tutorials.

Don’t overlook platform-specific guides from tools like Kubeflow and Airflow, which explain orchestration and workflow management through interactive examples. The beauty of these resources is that they’re constantly updated, reflecting the latest MLOps practices. Pair reading documentation with hands-on experimentation on these platforms, and you’ll develop practical skills that translate directly to workplace scenarios. Remember, MLOps is learned by doing, so prioritize platforms where you can deploy actual models and troubleshoot real issues.

Communities Where You Can Learn Together

Learning MLOps doesn’t have to be a solo journey. Several welcoming online communities exist where you can ask questions, share your progress, and learn from others facing similar challenges.

The r/MLOps subreddit is an excellent starting point, with over 50,000 members discussing everything from CI/CD pipelines to model monitoring strategies. You’ll find beginners asking foundational questions alongside experienced practitioners sharing real-world solutions. Similarly, r/MachineLearning has a broader focus but frequently covers operational aspects of deploying models.

Discord servers offer more real-time interaction. The MLOps Community Discord hosts thousands of learners and professionals who organize study groups, share job opportunities, and troubleshoot deployment issues together. The Weights & Biases Discord is another active space where you can get help with experiment tracking and model versioning.

For more structured discussions, search Stack Overflow, where questions are organized by tags such as mlflow and kubeflow rather than by section, and you can find detailed solutions to specific technical problems. The Machine Learning Engineers forum on Slack also provides dedicated channels for beginners.

These communities understand that everyone starts somewhere. Don’t hesitate to ask questions about concepts that seem basic—you’ll often find others had the same confusion and appreciate someone finally asking.

Common Mistakes That Waste Your Time

When you’re eager to master MLOps, it’s easy to fall into traps that slow your progress rather than speed it up. Let’s explore the most common mistakes so you can sidestep them entirely.

The biggest time-waster? Diving straight into complex orchestration tools like Airflow or Kubeflow before understanding the fundamentals. Picture this: you’re trying to build a house by starting with the roof. Many learners get excited about fancy tools and platforms, spending weeks wrestling with Kubernetes configurations when they haven’t yet deployed a simple model using Flask or FastAPI. Start small. Get one model serving predictions end to end, even if it’s just a basic API running on your local machine, before graduating to enterprise-level tools.

Another prevalent mistake is learning MLOps in complete isolation. You might watch tutorials for months, taking meticulous notes, but never actually push code to a repository or share your work. MLOps is inherently collaborative—it bridges teams and systems. Join online communities, contribute to open-source projects, or find a study partner. Real learning happens when you encounter merge conflicts, debug deployment issues with others, or receive feedback on your pipeline design.

Perhaps the most damaging habit is tutorial hopping without building anything substantial. Watching someone else set up a CI/CD pipeline feels productive, but it doesn’t cement the knowledge. Your brain needs the struggle of troubleshooting why your Docker container won’t build or figuring out why your model monitoring isn’t capturing drift properly. Aim to build at least three end-to-end projects where you handle data versioning, model training, deployment, and monitoring yourself.

Finally, many learners ignore the “Ops” part of MLOps, focusing exclusively on model development. Remember, MLOps isn’t just about building better models—it’s about making those models reliable, maintainable, and valuable in production environments. Spend time understanding monitoring, logging, and incident response. These practical skills separate hobbyists from professionals who can actually deliver ML solutions that work reliably in the real world.

Your First MLOps Project: A Simple Roadmap

Ready to build your first MLOps project? Let’s break this down into manageable steps that will take you from a trained model sitting on your laptop to a deployed solution anyone can use. Think of this as your MLOps training wheels – simple enough to complete, but comprehensive enough to teach you the fundamentals.

Start with a straightforward problem. Predicting house prices or classifying images of cats versus dogs works perfectly. You’re not trying to revolutionize artificial intelligence here; you’re learning the pipeline. Spend a day or two building and training a basic model using Python and scikit-learn or TensorFlow. Keep it simple – a model with 80% accuracy that you can actually deploy beats a 95% accurate model trapped on your computer.

Next, set up version control for everything. Create a GitHub repository and commit your code, data samples, and a requirements.txt file listing all your dependencies. This might seem tedious, but imagine returning to your project three months later and not remembering which library versions you used. Version control is your safety net.

Now comes the exciting part: containerization. Install Docker and create a Dockerfile that packages your model with all its dependencies. Yes, Docker has a learning curve, and yes, you’ll probably get error messages about missing files or wrong paths. This is normal. When the container finally builds, starts, and answers a test request, you’ll understand why containers are considered MLOps magic: the same image runs the same way on your laptop, a colleague’s machine, or a cloud server.

For deployment, start with a simple Flask API that accepts input data and returns predictions. Deploy this to a free platform like Render or Railway. Heroku used to be the go-to choice, but these alternatives work just as well for learning purposes. Your first deployment will likely fail. The server might crash, or you’ll hit memory limits. Don’t panic – check the logs, adjust your configuration, and try again.
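The request and response shape of such an API can be sketched without installing anything. The example below is not Flask: it is a plain WSGI application built on the standard library, used here as a stand-in so the sketch runs anywhere. The `/predict` route, the feature names, and the `predict` rule are all hypothetical. A Flask route would wrap the same logic: parse JSON, call the model, return JSON, and answer bad input with a 400 instead of crashing.

```python
import io
import json


def predict(features):
    """Hypothetical stand-in for model.predict: a fixed linear rule."""
    return 1500.0 * features["square_metres"] + 10000.0 * features["bedrooms"]


def app(environ, start_response):
    """WSGI app: POST /predict with a JSON body returns a JSON prediction."""
    if environ["REQUEST_METHOD"] != "POST" or environ["PATH_INFO"] != "/predict":
        start_response("404 Not Found", [("Content-Type", "application/json")])
        return [b'{"error": "not found"}']
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        features = json.loads(environ["wsgi.input"].read(length))
        body = json.dumps({"prediction": predict(features)})
        status = "200 OK"
    except (ValueError, KeyError, TypeError):
        # Malformed JSON or missing fields: tell the caller instead of crashing.
        body = json.dumps({"error": "expected JSON with square_metres and bedrooms"})
        status = "400 Bad Request"
    start_response(status, [("Content-Type", "application/json")])
    return [body.encode("utf-8")]


def call_app(payload):
    """Call the app in-process, the way a test would, without opening a port."""
    raw = json.dumps(payload).encode("utf-8")
    environ = {
        "REQUEST_METHOD": "POST",
        "PATH_INFO": "/predict",
        "CONTENT_LENGTH": str(len(raw)),
        "wsgi.input": io.BytesIO(raw),
    }
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    response_body = b"".join(app(environ, start_response))
    return captured["status"], json.loads(response_body)


print(call_app({"square_metres": 100, "bedrooms": 2}))
print(call_app({"bedrooms": 2}))
```

To try it over HTTP locally, the standard library's `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` will serve the same `app` object. Handling malformed requests explicitly is worth the few extra lines, since unexpected input is a common reason a first deployment crashes.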

Add basic monitoring by logging prediction inputs and outputs to a simple CSV file or using a free monitoring tool. You don’t need sophisticated dashboards yet; you just need to know your model is working.
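A CSV prediction log can be as small as the sketch below. The column layout (UTC timestamp, two input features, prediction) and the feature names are assumptions for illustration, and the demo writes to an in-memory buffer so it has no side effects.

```python
import csv
import io
from datetime import datetime, timezone


def log_prediction(csv_file, features, prediction):
    """Append one row: UTC timestamp, each input feature, and the prediction."""
    writer = csv.writer(csv_file)
    timestamp = datetime.now(timezone.utc).isoformat()
    writer.writerow(
        [timestamp, features["square_metres"], features["bedrooms"], prediction]
    )


# In a real service this would be open("predictions.csv", "a", newline="");
# an in-memory buffer keeps the demo free of side effects.
log = io.StringIO()
log_prediction(log, {"square_metres": 100, "bedrooms": 2}, 170000.0)
log_prediction(log, {"square_metres": 55, "bedrooms": 1}, 92500.0)

rows = list(csv.reader(io.StringIO(log.getvalue())))
print(len(rows), "rows logged")
print(rows[0][1:])  # ['100', '2', '170000.0']
```

Logging the inputs alongside the outputs is what later lets you compare live data against your training data, rather than only knowing that predictions were made.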

Expect this entire process to take two to three weeks if you’re learning part-time. Some days you’ll spend hours debugging why your Docker container won’t build. Other days, everything will click into place. The key is persistence. When you finally send a request to your deployed model and receive a prediction back, you’ll have completed something many data scientists never do – you’ve shipped a working product.

Here’s the truth that nobody tells you upfront: everyone struggles with MLOps at first. That data scientist at your dream company who effortlessly deploys models? They once spent hours debugging a Docker container that wouldn’t start. The MLOps engineer giving conference talks? They’ve crashed production systems and learned the hard way. You’re not behind, and you’re certainly not alone in feeling overwhelmed.

The key to mastering MLOps isn’t having perfect understanding before you begin. It’s starting small and building momentum through consistent practice. Think of it like learning to ride a bike—you don’t study physics textbooks first; you get on the bike, wobble a bit, and gradually find your balance.

Your immediate next step is refreshingly simple: pick one small ML project you’ve already built (or build a basic one this week) and focus solely on deploying it. Don’t worry about monitoring, CI/CD pipelines, or model versioning yet. Just get one model running somewhere other than your laptop. Choose a framework like Streamlit or Flask for simplicity, deploy to a host like Render or Railway, and celebrate that win.

From there, add one new MLOps concept each week. Next week, implement basic logging. The following week, add simple model versioning. Small, consistent steps compound faster than you’d imagine.

Remember: the MLOps landscape will keep evolving, and that’s perfectly fine. What matters is building your foundation now. Start today with that first deployment, embrace the inevitable errors as learning opportunities, and trust that each small step forward is progress. Your future self will thank you for beginning now rather than waiting for the “perfect” moment.