Monitor your AI models continuously by tracking performance metrics, data drift, and prediction accuracy in real-time rather than waiting for user complaints to surface problems. Traditional application monitoring tools that track uptime and response times miss the unique challenges AI systems face: models degrade silently as real-world data shifts away from training conditions, biases emerge unexpectedly in production, and accuracy drops without triggering conventional alerts.

Implement specialized observability platforms that capture model-specific signals like feature distributions, prediction confidence scores, and input data quality. These tools detect when your recommendation engine starts favoring certain demographics, when your fraud detection model’s precision suddenly drops, or when incoming data no longer matches the patterns your model learned during training.

Establish baseline metrics during model deployment that reflect business outcomes, not just technical performance. A chatbot’s response time matters less than whether it correctly interprets user intent. An image classifier’s throughput is secondary to its false positive rate in medical diagnoses. Define what success looks like for each AI system within your specific context.

Create automated feedback loops that connect model predictions to actual outcomes. Link your loan approval model’s decisions to default rates, connect your demand forecasting system to actual sales, or tie your customer churn predictions to retention data. This closed-loop monitoring reveals whether your AI systems deliver real value or simply generate plausible-looking outputs that miss the mark in practice.
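As a rough illustration of such a feedback loop, the sketch below joins logged loan predictions to outcomes that arrive later and computes precision and recall on the labelled subset. The `Prediction` record, the `request_id` key, and the sample data are all hypothetical; a real system would read both sides from its own stores.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    request_id: str          # hypothetical join key shared with the outcome store
    predicted_default: bool


def join_outcomes(predictions, outcomes):
    """Pair each prediction with its observed outcome, keyed by request_id.

    `outcomes` maps request_id -> actual default (True/False). Predictions
    whose outcome has not arrived yet are skipped.
    """
    return [
        (p.predicted_default, outcomes[p.request_id])
        for p in predictions
        if p.request_id in outcomes
    ]


def precision_recall(pairs):
    """Precision and recall for the positive class; None when undefined."""
    tp = sum(1 for pred, actual in pairs if pred and actual)
    fp = sum(1 for pred, actual in pairs if pred and not actual)
    fn = sum(1 for pred, actual in pairs if not pred and actual)
    precision = tp / (tp + fp) if (tp + fp) else None
    recall = tp / (tp + fn) if (tp + fn) else None
    return precision, recall


predictions = [
    Prediction("a1", True),
    Prediction("a2", False),
    Prediction("a3", True),
    Prediction("a4", False),
    Prediction("a5", True),   # outcome not known yet
]
outcomes = {"a1": True, "a2": False, "a3": False, "a4": True}

pairs = join_outcomes(predictions, outcomes)
precision, recall = precision_recall(pairs)
print(f"labelled={len(pairs)} precision={precision:.2f} recall={recall:.2f}")
```

Outcomes often lag predictions by days or months, so the labelled subset is usually smaller and older than the live traffic. That lag is one reason to pair this check with the input-side monitoring described later.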

What Is AI Observability?

Monitoring vs. Observability: Understanding the Difference

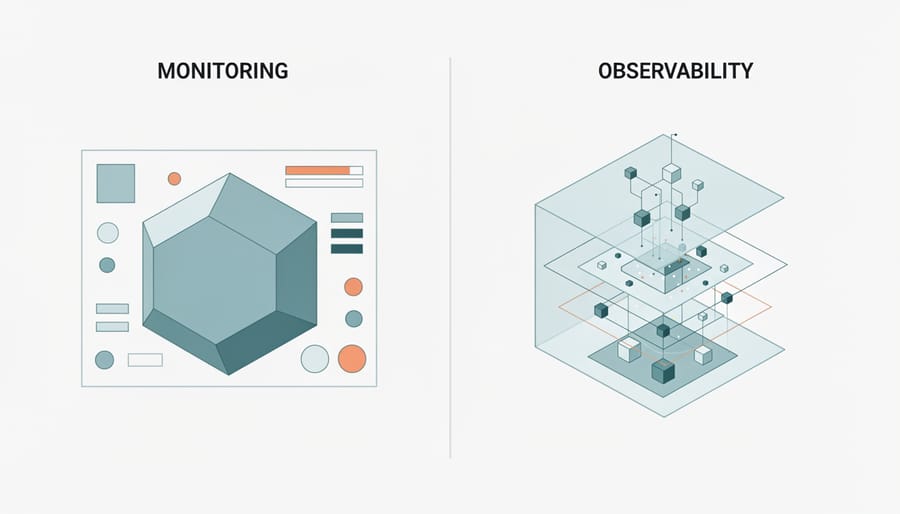

Think of monitoring like a car’s dashboard warning light. When the check engine light turns on, you know something is wrong—that’s monitoring telling you what broke. But to understand why the engine is failing, you need to pop the hood, check various systems, and trace the root cause. That deeper investigation is observability.

In traditional software systems, monitoring tracks predefined metrics: Is the server up? Are response times normal? It works great when you know exactly what to watch. You set thresholds, and when something crosses that line, an alert fires.

Observability takes a different approach. Instead of just tracking known problems, it gives you the tools to investigate unknown issues. It collects rich, contextual data about your system’s internal state, allowing you to ask questions you didn’t anticipate when you first set things up.

Here’s a practical example: Your AI model’s accuracy suddenly drops. Monitoring tells you the accuracy metric fell below 85 percent. That’s useful, but it doesn’t explain why. Observability lets you dig deeper—you can trace specific predictions, examine input data distributions, check if recent training data differed from production data, and identify which feature values changed.

For AI systems, this distinction becomes critical. Machine learning models fail in unpredictable ways. Data drift, bias amplification, and edge cases emerge unexpectedly. You can’t anticipate every failure mode upfront, which is exactly why observability matters more than ever in AI operations.

Why AI Models Need Special Observability

The Hidden Dangers: Model Drift and Data Drift

Imagine spending months perfecting an AI model that predicts customer purchases with 95% accuracy, only to watch it crumble to 60% within weeks of deployment. This isn’t science fiction—it’s the reality of model drift and data drift, two silent killers of AI systems in production.

Model drift occurs when your AI’s performance degrades over time, even though nothing changed in the code. Think of it like a weather forecaster trained only on summer data trying to predict winter storms. The model itself hasn’t broken, but the world it’s trying to understand has shifted beneath its feet.

Data drift happens when the incoming production data no longer resembles the data the model was trained on. A classic example: a fraud detection system trained in 2019 suddenly facing pandemic-era shopping behaviors in 2020. Online purchases skyrocketed, transaction patterns completely changed, and legitimate purchases started triggering false alarms because they looked nothing like the historical training data.

Consider the real case of a major retail company whose product recommendation engine started suggesting winter coats in July. The culprit? Their model was trained on data from physical stores, but after expanding online, customer browsing patterns shifted dramatically. Geographic diversity meant shoppers from different hemispheres needed different seasonal items simultaneously—something the original training data never captured.

The challenge is that these drifts happen gradually, like a ship slowly veering off course. Without proper observability tools monitoring your model’s behavior, you might not notice until you’ve sailed miles in the wrong direction. By then, customer trust erodes, revenue drops, and emergency retraining becomes necessary—a costly scramble that proper monitoring could have prevented.

When Good Models Go Bad: Real Production Failures

In 2016, a major e-commerce platform discovered its recommendation engine was suggesting winter coats to customers in tropical climates while promoting swimwear to shoppers experiencing snowstorms. The culprit? A subtle data drift that went unnoticed for weeks, costing millions in lost sales. This is just one example of why AI models fail in real-world scenarios.

Consider the case of a fraud detection system at a payment processor that suddenly started flagging legitimate transactions as suspicious. The model had been trained on pre-pandemic shopping patterns, but when consumer behavior shifted dramatically during COVID-19, the system couldn’t adapt. Thousands of genuine customers found their purchases blocked, leading to frustrated calls to customer service and damaged trust.

Another sobering example comes from a healthcare AI tool that began making inaccurate predictions about patient readmission risks. The model’s performance had gradually degraded over six months as hospital protocols changed, but without proper monitoring, nobody noticed until doctors started questioning the recommendations. By then, the system had already influenced hundreds of care decisions.

These failures share a common thread: they could have been caught early with proper observability. Warning signs like shifting input distributions, declining prediction confidence, and changing feature correlations were present but invisible to the teams managing these systems. The cost was not only financial; it also showed up as broken customer trust and potential patient harm.

Essential Features of AI Observability Tools

Performance Tracking That Actually Matters

Not all metrics deserve equal attention when monitoring AI systems. Think of it like checking your car’s dashboard—while dozens of indicators exist, you really need to watch fuel, speed, and engine temperature. The same principle applies to AI observability.

Accuracy metrics come first because they tell you if your model still works. For a recommendation system, track click-through rates and conversion percentages. For image classification, monitor prediction confidence scores and error rates across different categories. A fraud detection model might maintain 95% accuracy overall, but if it suddenly drops to 70% for transactions from a specific region, you’ve spotted a critical problem.
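To make the regional example concrete, here is a minimal sketch that breaks accuracy down by segment so a weak slice is not hidden by a healthy overall number. The `(segment, predicted, actual)` record layout, the region names, and the 0.8 threshold are assumptions chosen for illustration.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """records: iterable of (segment, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for segment, predicted, actual in records:
        total[segment] += 1
        correct[segment] += int(predicted == actual)
    return {seg: correct[seg] / total[seg] for seg in total}


def segments_below(accuracies, threshold):
    """Names of segments whose accuracy is under the threshold."""
    return sorted(seg for seg, acc in accuracies.items() if acc < threshold)


# Synthetic data: a large healthy region and a small struggling one.
records = (
    [("north", 1, 1)] * 285 + [("north", 1, 0)] * 15
    + [("south", 0, 0)] * 7 + [("south", 1, 0)] * 3
)

per_region = accuracy_by_segment(records)
overall = sum(p == a for _, p, a in records) / len(records)

print(f"overall={overall:.3f}")
print(per_region)
print("needs attention:", segments_below(per_region, 0.8))
```

The overall figure stays above 94% here because the struggling segment is small, which is exactly why per-segment tracking is worth the extra bookkeeping.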

Latency monitoring matters because real-world users won’t wait. Your model might be brilliant, but if it takes five seconds to return a product recommendation, customers abandon their carts. Track response times at different percentiles—the average might look fine while 10% of users experience unacceptable delays. E-commerce systems typically need sub-second responses, while batch processing jobs can tolerate minutes.
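The gap between the average and the tail is easy to see in a few lines. This sketch uses Python's standard `statistics` module on synthetic latencies; the `latency_summary` helper and the sample values are illustrative, not taken from any particular monitoring tool.

```python
import statistics


def latency_summary(latencies_ms):
    """Mean plus p50/p95/p99 for a list of at least two latency samples."""
    # quantiles(n=100) returns 99 cut points: index 49 is p50, 94 is p95, 98 is p99.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(latencies_ms),
        "p50": cuts[49],
        "p95": cuts[94],
        "p99": cuts[98],
    }


# Synthetic window: 90 fast requests and 10 slow ones.
latencies = [200.0] * 90 + [3000.0] * 10
summary = latency_summary(latencies)
print(summary)
```

With this data the mean is under half a second and the median is 200 ms, yet the 95th percentile is three seconds. An alert on the mean alone would stay quiet while one user in ten waits.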

Throughput tracking shows how many predictions your system handles per second. During a flash sale or viral event, can your model scale to meet demand? Resource utilization rounds out the picture by revealing computational costs. GPU memory usage, CPU loads, and API call volumes directly impact your infrastructure bills.

The metrics that matter most depend on your use case. Customer-facing chatbots prioritize latency and accuracy. Batch processing systems focus on throughput and resource efficiency. Match your monitoring strategy to your specific business objectives.

Data Quality Monitoring: Your First Line of Defense

Think of data quality monitoring as a security checkpoint for your AI system—it’s where you catch problems before they cascade into costly mistakes. Just as airport security screens passengers before they board, your AI needs robust validation to inspect every piece of incoming data.

Input validation forms your first checkpoint. This means checking that data arrives in the expected format, with values falling within reasonable ranges. Imagine a loan approval model suddenly receiving negative income values or ages over 150 years—without validation, these impossible inputs could lead to absurd predictions. Simple checks catch these issues immediately, preventing garbage data from corrupting your model’s outputs.
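A minimal version of these checks might look like the following. The field names `income` and `age` and the accepted age range of 18 to 120 are assumptions for the loan example; real limits should come from the model's documented input contract.

```python
def validate_application(record):
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    income = record.get("income")
    age = record.get("age")

    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(income, bool) or not isinstance(income, (int, float)):
        problems.append("income missing or not numeric")
    elif income < 0:
        problems.append(f"income is negative: {income}")

    if isinstance(age, bool) or not isinstance(age, int):
        problems.append("age missing or not an integer")
    elif not 18 <= age <= 120:
        problems.append(f"age outside 18-120: {age}")

    return problems


print(validate_application({"income": 52000, "age": 34}))
print(validate_application({"income": -100, "age": 151}))
print(validate_application({"age": "40"}))
```

Returning a list of problems rather than raising on the first one makes it easier to count each failure type separately, which turns validation into a monitoring signal as well as a gate.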

Feature distribution tracking takes monitoring deeper. Your AI model learned patterns from training data with specific characteristics—perhaps customer ages typically ranged from 18 to 75, or purchase amounts averaged around $50. When production data shifts dramatically, like suddenly seeing mostly purchases over $500, your model operates outside its comfort zone. Tracking these distributions helps you spot drift before accuracy plummets.
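One common way to put a number on this kind of shift is the Population Stability Index (PSI), which compares the share of values falling into each bin at training time with the share in production. The sketch below is one possible implementation using only the standard library. The bin edges and sample purchase amounts are made up, and the often-quoted reading that values above roughly 0.25 signal a major shift is a rule of thumb rather than a guarantee.

```python
import math
from bisect import bisect_right


def bin_fractions(values, edges, eps=1e-6):
    """Fraction of values in each bin defined by the sorted interior `edges`."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    n = len(values)
    # eps keeps empty bins from producing log(0) or a division by zero.
    return [max(c / n, eps) for c in counts]


def psi(expected, actual, edges):
    """Population Stability Index between two samples of one feature."""
    e = bin_fractions(expected, edges)
    a = bin_fractions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [25, 50, 100, 500]  # purchase-amount bins in dollars (illustrative)
training = [20] * 10 + [40] * 40 + [60] * 40 + [200] * 9 + [600] * 1
production = [40] * 5 + [60] * 10 + [200] * 15 + [600] * 70

print(f"training vs itself:     {psi(training, training, edges):.3f}")
print(f"training vs production: {psi(training, production, edges):.3f}")
```

Fixing the bin edges from the training data, rather than recomputing them per window, keeps successive PSI values comparable over time.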

Anomaly detection acts as your early warning system. Consider a recommendation engine for an e-commerce platform. If data pipeline monitoring reveals that 30% of incoming user sessions contain null product categories—when historically it was under 2%—you’ve identified a critical issue. Perhaps an upstream data source changed format, or a tracking pixel broke.
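The null-rate scenario above can be checked with very little code. In this sketch the field name `product_category`, the 2% baseline, and the "three times baseline" tolerance are illustrative assumptions.

```python
def null_rate(sessions, field):
    """Share of sessions where `field` is missing or None."""
    if not sessions:
        return 0.0
    missing = sum(1 for s in sessions if s.get(field) is None)
    return missing / len(sessions)


def check_null_rate(sessions, field, baseline_rate, tolerance=3.0):
    """Flag when the null rate exceeds `tolerance` times the baseline rate."""
    rate = null_rate(sessions, field)
    return {"field": field, "rate": rate, "alert": rate > baseline_rate * tolerance}


healthy = [{"product_category": "shoes"}] * 99 + [{"product_category": None}]
broken = [{"product_category": "shoes"}] * 70 + [{"product_category": None}] * 30

print(check_null_rate(healthy, "product_category", baseline_rate=0.02))
print(check_null_rate(broken, "product_category", baseline_rate=0.02))
```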

The impact is tangible: one retailer caught a data quality issue that would have caused their pricing model to suggest discounts on already-discounted items, potentially costing thousands in lost revenue. Quality monitoring transformed a near-disaster into a quick fix.

Explainability and Debugging Capabilities

Understanding why your AI model made a particular decision is crucial for building trust and fixing problems. Explainability and debugging capabilities give you the tools to peek inside the black box and see what’s really happening when your model processes data.

Think of it like a doctor explaining a diagnosis. You wouldn’t accept “the computer says so” without understanding the reasoning. The same applies to AI systems. When a loan application gets rejected or a fraud detection system flags a transaction, stakeholders need to know why.

Prediction analysis helps you trace individual decisions back to their sources. For instance, if your recommendation engine suggests an unusual product to a customer, you can examine which features influenced that choice—was it purchase history, browsing behavior, or seasonal patterns?

Feature importance tracking reveals which input variables carry the most weight in your model’s decisions. Imagine discovering that your hiring AI relies too heavily on zip codes rather than qualifications—that’s a problem you can only fix if you’re measuring feature importance.

Modern observability platforms integrate model interpretability tools like SHAP values and LIME explanations directly into their dashboards. These tools break down complex predictions into understandable components, showing you exactly how each input contributed to the final output. This transparency becomes invaluable when debugging unexpected behaviors or meeting regulatory requirements for AI accountability.
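SHAP and LIME need their own libraries, so the sketch below uses a simpler, model-agnostic stand-in: permutation importance, which shuffles one feature at a time and measures how much accuracy drops. It is not a substitute for SHAP or LIME, and everything here is hypothetical, including `toy_model`, which deliberately looks only at a `zip_risk` feature to mirror the hiring example.

```python
import random


def accuracy(model, rows, labels):
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, features, repeats=10, seed=0):
    """Mean drop in accuracy when one feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importance = {}
    for feature in features:
        drops = []
        for _ in range(repeats):
            column = [r[feature] for r in rows]
            rng.shuffle(column)
            shuffled = [{**r, feature: v} for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importance[feature] = sum(drops) / repeats
    return importance


# Hypothetical stand-in for a trained model: it ignores experience entirely.
def toy_model(row):
    return row["zip_risk"] < 0.5


rows = [{"zip_risk": i / 20, "years_experience": i % 7} for i in range(20)]
labels = [toy_model(r) for r in rows]

scores = permutation_importance(
    toy_model, rows, labels, ["zip_risk", "years_experience"]
)
print(scores)
```

A feature the model never reads scores exactly zero, while shuffling `zip_risk` hurts accuracy. In a real audit, that pattern would be the cue to investigate why location dominates the decision.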

Popular AI Observability Tools You Should Know

Open-Source Solutions for Getting Started

If you’re just starting your AI observability journey, several open-source tools can help you monitor machine learning models without breaking the bank. These solutions offer practical entry points for understanding how your AI systems behave in production.

MLflow has become a go-to platform for many beginners because it handles the complete machine learning lifecycle. Beyond experiment tracking, it can log model parameters, metrics, and artifacts automatically for supported frameworks when autologging is enabled. You can compare runs and model versions side by side and see when a retrained version’s accuracy starts declining. The main limitation? It requires some initial setup effort, and scaling to handle massive data volumes might need additional infrastructure.

Evidently AI specializes in detecting data drift and model degradation. Imagine you’ve deployed a customer churn prediction model, and suddenly your input data characteristics change because of a new marketing campaign. Evidently AI catches these shifts before they tank your model’s performance. It generates intuitive visual reports that even non-technical stakeholders can understand. However, it focuses primarily on monitoring rather than the full observability picture, so you might need complementary tools.

Prometheus, traditionally used for infrastructure monitoring, adapts surprisingly well to machine learning workloads. You can track custom metrics like prediction latency, request volumes, and error rates. When paired with Grafana for visualization, it creates powerful dashboards. The downside is that Prometheus wasn’t built specifically for ML, so tracking model-specific metrics like feature distributions requires extra configuration work.

These tools provide solid foundations, but remember they typically require more hands-on management than commercial alternatives.

Enterprise-Grade Platforms

When you’re ready to invest in a robust solution, commercial platforms offer comprehensive packages designed specifically for AI production environments. These enterprise-grade tools handle the heavy lifting so your team can focus on building better models rather than debugging mysterious failures.

Datadog ML Monitoring extends the company’s popular infrastructure monitoring into the AI space, letting you track model performance alongside your application metrics and logs. Think of it as a unified dashboard where you can see if your recommendation engine started misbehaving at the exact moment your database experienced latency issues. The integration with existing DevOps workflows makes adoption smoother for teams already using Datadog.

Arize AI specializes entirely in machine learning observability, offering detailed drift detection, explainability features, and performance tracking. Their platform excels at comparing model versions side-by-side, which proves invaluable when you’re testing updates. You can quickly spot whether your new fraud detection model actually improves on the old one or just shifts problems around.

WhyLabs takes a privacy-first approach, generating statistical profiles of your data without requiring you to send raw information to their servers. This matters enormously in healthcare or finance where data sensitivity restricts what you can share externally.

With these platforms, you are paying for automated alerting when models drift, pre-built dashboards that visualize complex metrics simply, seamless integration with popular ML frameworks, and, crucially, support teams who understand production AI challenges. For organizations running multiple critical models, the investment can pay for itself by preventing just one significant model failure.

Getting Started: Your First Steps Toward AI Observability

Start Small: The Minimum Viable Observability Setup

You don’t need a perfect observability system from day one. Think of it like learning to drive—you start with the basics before mastering advanced maneuvers.

Begin by tracking three essential metrics: prediction latency (how long your model takes to respond), prediction volume (how many requests you’re handling), and error rates (when things go wrong). These form your early warning system. Set up simple logging to capture each prediction’s input, output, and timestamp. This creates a paper trail you can follow when investigating issues.
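A paper trail like this can start as one JSON line per prediction. The sketch below wraps any callable model. The `log_prediction` helper, the record fields, and the in-memory `sink` are assumptions for illustration; in practice the sink would be a file or a log shipper.

```python
import io
import json
import time
from datetime import datetime, timezone


def log_prediction(model, features, sink):
    """Call `model(features)` and append one JSON line about the call to `sink`."""
    started = time.perf_counter()
    output = None
    error = None
    try:
        output = model(features)
        return output
    except Exception as exc:
        error = repr(exc)
        raise  # the caller still sees the failure
    finally:
        # Runs on both success and failure, so every call leaves a record.
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "input": features,
            "output": output,
            "latency_ms": round((time.perf_counter() - started) * 1000, 3),
            "error": error,
        }
        sink.write(json.dumps(record) + "\n")


def always_fails(features):
    raise ValueError("bad input")


sink = io.StringIO()
log_prediction(lambda f: f["amount"] > 100, {"amount": 250}, sink)
try:
    log_prediction(always_fails, {"amount": 1}, sink)
except ValueError:
    pass  # the error propagated, and the log kept a record of it

records = [json.loads(line) for line in sink.getvalue().splitlines()]
print(records)
```

These records already carry the three starter metrics: latency comes from `latency_ms`, volume from the number of lines, and error rate from the share of lines with a non-null `error`.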

Most cloud platforms offer built-in monitoring dashboards that require minimal setup. Start there. Configure alerts for obvious problems: if your model suddenly takes five seconds instead of 500 milliseconds to respond, you want to know immediately. If error rates spike above 5%, something needs attention.
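If your platform's built-in alerting is not set up yet, the two checks above fit in a small function you can run over each monitoring window. The 500 ms baseline and 5% error limit come from the examples in the text; the `evaluate_window` name and the "three times baseline" latency factor are assumptions.

```python
import statistics


def evaluate_window(latencies_ms, errors, requests,
                    baseline_latency_ms=500.0, latency_factor=3.0,
                    max_error_rate=0.05):
    """Return alert messages for one monitoring window (empty list if healthy)."""
    alerts = []
    if latencies_ms:
        median = statistics.median(latencies_ms)
        if median > baseline_latency_ms * latency_factor:
            alerts.append(
                f"median latency {median:.0f} ms exceeds "
                f"{latency_factor:g}x baseline"
            )
    if requests:
        error_rate = errors / requests
        if error_rate > max_error_rate:
            alerts.append(
                f"error rate {error_rate:.1%} exceeds {max_error_rate:.0%}"
            )
    return alerts


healthy = evaluate_window([480, 510, 495], errors=1, requests=100)
degraded = evaluate_window([5000, 5200, 4900], errors=8, requests=100)
print(healthy)
print(degraded)
```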

During your first week, focus on answering one question: “Is my model working right now?” That’s it. Don’t overcomplicate things by tracking dozens of metrics you won’t use.

As you gain confidence, gradually expand your coverage. Add data quality checks in week two—monitor whether incoming data matches your training distribution. By week three, introduce performance metrics like accuracy or precision on a sample of predictions.

Think of this as building with LEGO blocks rather than constructing a skyscraper overnight. Each piece you add should solve a specific problem you’ve encountered. This practical, incremental approach prevents overwhelm while building a robust monitoring foundation that actually serves your needs.

Common Pitfalls and How to Avoid Them

Teams implementing AI observability often stumble over a few common mistakes that can derail their monitoring efforts. One frequent pitfall is treating AI observability like traditional software monitoring. A data science team at a major retailer learned this the hard way when they applied standard application monitoring tools to their recommendation engine, only to miss a gradual model degradation that cost them millions in lost sales. The lesson? AI models need specialized metrics like prediction drift and feature distribution changes, not just uptime checks.

Another mistake is collecting too much data without a clear plan. One fintech startup found themselves drowning in terabytes of model logs with no actionable insights. They spent more time managing storage costs than actually improving their models. The solution is to start with targeted metrics aligned to business outcomes, then expand gradually.

Teams also frequently underestimate the importance of establishing baselines early. Without knowing what normal looks like for your model’s behavior, you cannot spot anomalies effectively. A healthcare AI team discovered anomalous predictions weeks too late because they had no baseline metrics from their model’s initial deployment.
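Capturing a baseline can be as simple as storing the mean and spread of the model's prediction scores at deployment, then measuring how far each later window sits from them. The sketch below does this with a z-score on the window mean. The helper names and the synthetic scores are illustrative, and the approach assumes the scores are roughly stable from window to window when the model is healthy.

```python
import math
import statistics


def make_baseline(scores):
    """Summarize prediction scores collected at deployment time."""
    return {"mean": statistics.fmean(scores), "stdev": statistics.stdev(scores)}


def window_z_score(baseline, window):
    """How many standard errors the window mean sits from the baseline mean."""
    standard_error = baseline["stdev"] / math.sqrt(len(window))
    return (statistics.fmean(window) - baseline["mean"]) / standard_error


deployment_scores = [0.1, 0.2, 0.3, 0.4, 0.5] * 20
baseline = make_baseline(deployment_scores)

similar_window = [0.1, 0.2, 0.3, 0.4, 0.5] * 6
shifted_window = [0.6, 0.7, 0.8] * 10

z_similar = window_z_score(baseline, similar_window)
z_shifted = window_z_score(baseline, shifted_window)
print(f"similar window z={z_similar:.2f}")
print(f"shifted window z={z_shifted:.2f}")
```

A window whose mean sits several standard errors from the baseline is worth a look even before any ground-truth labels arrive.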

Finally, neglecting to involve domain experts in the observability setup leads to monitoring the wrong things. Your data scientists understand model behavior, but domain experts know which outcomes and edge cases matter to the business. Make both part of defining what to observe from day one.

As AI systems become the backbone of critical business operations—from personalized recommendations that drive revenue to fraud detection systems protecting customer assets—observability transitions from a nice-to-have feature to an absolute necessity. The reality is straightforward: if you can’t see what’s happening inside your AI systems, you can’t trust them with decisions that matter to your business and customers.

Think about it this way. Traditional software fails predictably—a broken function throws an error, a crashed server stops responding. But AI systems can fail silently, continuing to produce outputs while gradually degrading in accuracy or developing biases that harm real users. Without proper observability, these silent failures can go undetected for weeks or months, eroding customer trust and potentially causing significant financial or reputational damage.

The encouraging news is that implementing observability doesn’t require a complete overhaul of your existing infrastructure. Start small and practical. Begin by logging your model’s predictions alongside actual outcomes when available. Track basic performance metrics like response times and error rates. Set up simple alerts for unusual patterns in your prediction distributions. These foundational steps cost little in terms of time and resources but provide immediate visibility into your AI’s behavior.

As your AI systems grow more sophisticated and business-critical, your observability practices can evolve alongside them. The key is to start now, even if modestly, rather than waiting until a production incident forces your hand. Your future self—and your users—will thank you for taking this proactive approach to maintaining healthy, trustworthy AI systems.