The relentless cycle of consumption and waste threatens our planet’s delicate ecosystems, with global consumer spending now exceeding $50 trillion annually. Every purchase decision ripples through supply chains, contributing to deforestation, carbon emissions, and plastic pollution that will impact generations to come. Yet this crisis also presents an unprecedented opportunity for transformation.

Just as popular consumer LLMs are reshaping how we process information, our consumption patterns can evolve to protect rather than harm our environment. The choices we make today, from refusing single-use plastics to embracing circular economy principles, will determine whether we can balance human prosperity with ecological sustainability.

This critical intersection between consumer behavior and environmental protection demands immediate action from individuals, businesses, and policymakers alike. By understanding how our purchasing decisions reverberate through global supply chains and ecosystems, we can make informed choices that support both human needs and environmental health. The question is no longer whether we should change our consumption habits, but how quickly we can adapt to ensure a livable planet for future generations.

The Carbon Footprint of Your AI Chat

Training Costs: More Than Just Compute Power

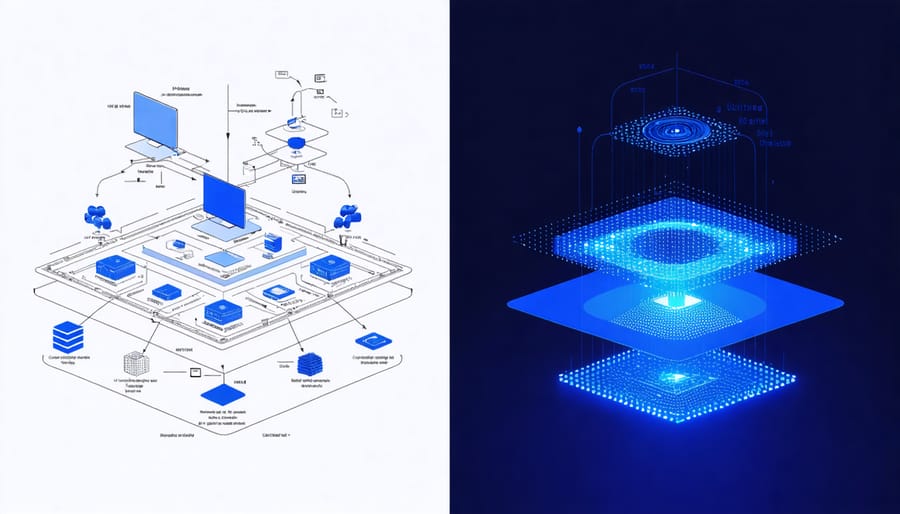

When discussing the computational costs of AI models, many focus solely on electricity consumption during training. However, the environmental footprint extends far beyond just powering the computers. The creation and maintenance of data centers require significant resources, from the manufacturing of specialized hardware to the sophisticated cooling systems that keep them running efficiently.

Consider this: by some estimates, training a single large language model can consume as much electricity as roughly 100 American households use in an entire year. Cooling systems alone can account for up to about 40% of a data center’s total energy consumption, although the share varies widely with facility design and climate. Additionally, the hardware components, particularly specialized GPUs and TPUs, require rare earth minerals and complex manufacturing processes that contribute to environmental degradation.

Water usage is another often-overlooked factor. Data centers use millions of gallons of water annually for cooling purposes. In regions already facing water scarcity, this creates additional environmental stress. The production of semiconductor chips, essential for AI training, also requires substantial amounts of ultra-pure water.

The infrastructure supporting these training operations, including backup power systems and network equipment, adds another layer of environmental impact. When we factor in the regular hardware updates and replacements needed to maintain optimal performance, the true environmental cost becomes even more significant.

Daily Usage Impact: Every Prompt Counts

Every time we prompt an AI model like ChatGPT or use an AI image generator, we add to a real environmental footprint. Estimates vary widely, but by some accounts a long conversation with an AI assistant can consume energy on the order of a smartphone charge, and when multiplied by millions of daily users, the impact becomes substantial.

To put this into perspective, one widely cited estimate found that training a large language model can emit as much carbon as five cars over their entire lifetimes. While daily usage consumes far less per request, the cumulative effect of billions of prompts processed worldwide adds up quickly. For instance, under some assumptions, a user who interacts with AI tools for 30 minutes daily could generate the equivalent carbon footprint of a 10-mile car journey each month.
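The cumulative arithmetic behind an estimate like this is simple to sketch. The function below is a minimal illustration, not a measurement tool: the per-prompt energy and the grid carbon intensity are placeholder assumptions that you would need to replace with figures from your provider and your regional grid.

```python
def monthly_prompt_footprint(prompts_per_day, wh_per_prompt,
                             grid_g_co2_per_kwh, days=30):
    """Estimate energy (kWh) and emissions (kg CO2e) for one user's prompts.

    All inputs are assumptions supplied by the caller, not measured values.
    """
    # Total energy: prompts x days x watt-hours per prompt, converted to kWh
    energy_kwh = prompts_per_day * days * wh_per_prompt / 1000.0
    # Emissions: kWh x grams of CO2e per kWh, converted to kilograms
    emissions_kg = energy_kwh * grid_g_co2_per_kwh / 1000.0
    return energy_kwh, emissions_kg


# Placeholder inputs chosen only to show the calculation
energy, co2 = monthly_prompt_footprint(
    prompts_per_day=20,
    wh_per_prompt=3.0,         # assumed, varies by model and provider
    grid_g_co2_per_kwh=400.0,  # assumed, varies by region and hour
)
print(f"{energy:.2f} kWh, {co2:.2f} kg CO2e per month")
```

Changing any one input scales the result linearly, which is why per-prompt estimates that differ by a factor of ten lead to monthly footprints that differ by the same factor.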

However, this doesn’t mean we should stop using AI tools altogether. Instead, we can adopt more mindful usage habits. Consider batching your AI queries instead of making multiple separate requests. Before generating AI art or running multiple variations of the same prompt, ask yourself if it’s necessary. Use draft modes when available, as they typically consume less computational power.
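One way to picture batching is a small helper that drops repeated questions and groups the rest into a single combined prompt. This is a sketch under assumptions: `batch_questions` and its `max_per_request` parameter are hypothetical names, and whether a combined request actually uses less energy than several separate ones depends on the service.

```python
def batch_questions(questions, max_per_request=5):
    """Group de-duplicated questions into combined prompts."""
    seen = set()
    unique = []
    for question in questions:
        cleaned = question.strip()
        key = cleaned.lower()
        # Skip blanks and case-insensitive repeats
        if key and key not in seen:
            seen.add(key)
            unique.append(cleaned)

    batches = []
    for start in range(0, len(unique), max_per_request):
        chunk = unique[start:start + max_per_request]
        numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(chunk, 1))
        batches.append("Please answer each question in turn:\n" + numbered)
    return batches


for prompt in batch_questions(["A?", "a?", "B?", "C?"], max_per_request=2):
    print(prompt)
    print("---")
```

Four questions, one of them a repeat, become two requests instead of four.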

The key is balance. By being conscious of our AI consumption and treating each prompt as a resource with environmental consequences, we can help minimize our digital carbon footprint while still benefiting from these powerful tools. Small changes in our daily AI usage habits can lead to significant collective impact on environmental sustainability.

Sustainable AI: Making Better Choices

Efficient Model Architectures

Recent developments in AI architecture have shown that bigger isn’t always better. While large language models like GPT-3 and BERT have dominated headlines, a new wave of efficient AI solutions is emerging to address environmental concerns. These smaller, optimized models can achieve comparable performance while significantly reducing energy consumption and computational resources.

Take DistilBERT, for example, which retains about 97% of BERT’s language understanding performance with roughly 40% fewer parameters. Similarly, MobileNet architectures have revolutionized mobile AI applications by delivering impressive results with minimal resource requirements. These developments show that we can balance performance with environmental responsibility.

The key to these efficiency gains lies in techniques like knowledge distillation, pruning, and quantization. By removing redundant parameters and compressing information into smaller formats, these approaches create leaner models that require less power to run. This is particularly important for edge devices and smartphones, where energy efficiency directly impacts battery life and user experience.
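To make two of these techniques concrete, here is a minimal sketch of magnitude pruning and symmetric 8-bit quantization on a plain list of weights. It is a toy illustration in pure Python, assuming a flat weight list; real frameworks apply these ideas per layer and with more care, and the function names here are illustrative only.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights):
    """Symmetric linear quantization to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map integers back to approximate floating-point weights."""
    return [v * scale for v in quantized]


weights = [0.5, -0.02, 1.27, 0.01, -0.8, 0.03]
pruned = magnitude_prune(weights, sparsity=0.5)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)
print(pruned)
print(quantized)
```

Pruning removes the weights that contribute least, and quantization stores what remains in one byte per value instead of four. The restored weights differ from the pruned ones by at most half a quantization step.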

For consumers, this means access to powerful AI capabilities without the massive environmental footprint of traditional large-scale models. Organizations can now choose more sustainable options without sacrificing functionality, making environmental consciousness a practical reality in AI deployment.

Green Computing Initiatives

The tech industry’s growing awareness of environmental impact has sparked significant innovations in green computing, particularly in AI and data centers. Major tech companies are increasingly powering their AI operations with renewable energy sources, marking a crucial shift toward sustainability. Google, for instance, matches 100% of its annual electricity consumption with renewable energy purchases, while Microsoft aims to be carbon negative by 2030.

These initiatives extend beyond just power sources. Modern data centers are implementing advanced cooling systems that use outside air or liquid cooling, dramatically reducing energy consumption. Companies are also optimizing their AI algorithms to be more energy-efficient, developing models that require less computational power while maintaining performance.

Cloud providers are leading by example, with Amazon, the parent company of Amazon Web Services (AWS), pledging to power its operations with 100% renewable energy by 2025. This commitment influences smaller companies and startups to make environmentally conscious choices when deploying their AI solutions.

The adoption of edge computing is another promising trend, as it reduces the need for data transmission to distant servers, thereby lowering energy consumption. Additionally, hardware manufacturers are developing more energy-efficient processors specifically designed for AI workloads, helping to minimize the carbon footprint of artificial intelligence operations.

These sustainable practices not only benefit the environment but also often result in cost savings, making green computing initiatives increasingly attractive to businesses of all sizes.

Consumer Best Practices

To minimize your AI-related carbon footprint, start by being mindful of your cloud computing usage. Choose eco-friendly data centers when possible and optimize your AI model training sessions to run during off-peak hours. Consider using smaller, more efficient models when they can achieve similar results to larger ones.
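Picking a good time to run a training job can be sketched as a search for the cleanest contiguous window in an hourly forecast. The example below assumes you already have 24 hourly values, which could be grid carbon intensity, demand, or price; the forecast numbers are made up, and `greenest_start_hour` is a hypothetical helper rather than part of any provider's tooling.

```python
def greenest_start_hour(hourly_intensity, job_hours):
    """Return (start_hour, mean_intensity) of the lowest-intensity window.

    The window may wrap around the end of the list (for example, past midnight).
    """
    n = len(hourly_intensity)
    if not 0 < job_hours <= n:
        raise ValueError("job_hours must be between 1 and the forecast length")

    best_start, best_total = 0, float("inf")
    for start in range(n):
        total = sum(hourly_intensity[(start + k) % n] for k in range(job_hours))
        if total < best_total:
            best_start, best_total = start, total
    return best_start, best_total / job_hours


# Made-up forecast in gCO2e/kWh: flat at 300 with a dip from 02:00 to 05:00
forecast = [300.0] * 24
forecast[2:5] = [100.0, 150.0, 120.0]

start, mean_intensity = greenest_start_hour(forecast, job_hours=3)
print(f"Start at {start:02d}:00, mean intensity {mean_intensity:.1f}")
```

A scheduler or a simple cron entry could then launch the job at the returned hour.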

Practice good data hygiene by regularly cleaning up unused files and optimizing storage. Compress data when possible and archive what you don’t need immediate access to. This not only reduces storage requirements but also minimizes the energy needed for data maintenance.
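As a small illustration of the compression step, the sketch below uses Python's standard `gzip` module on an in-memory sample. The sample data is invented and highly repetitive, so it compresses far better than typical files would; treat the ratio as a demonstration of the idea rather than an expected saving.

```python
import gzip


def compress_bytes(data, level=9):
    """Compress bytes with gzip and report compressed size / original size."""
    compressed = gzip.compress(data, compresslevel=level)
    ratio = len(compressed) / len(data) if data else 1.0
    return compressed, ratio


# Invented, repetitive sample resembling a sensor log
sample = b"timestamp,sensor,value\n" + b"2024-01-01T00:00:00,temp,21.5\n" * 1000

packed, ratio = compress_bytes(sample)
print(f"{len(sample)} bytes -> {len(packed)} bytes (ratio {ratio:.3f})")

# Compression is lossless: the original bytes come back unchanged
assert gzip.decompress(packed) == sample
```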

Enable power-saving features on your devices and opt for edge computing when suitable. Running AI applications locally on energy-efficient devices can significantly reduce the carbon footprint compared to constant cloud processing.

Consider using pre-trained models instead of training new ones from scratch. This approach saves considerable computational resources and energy. When training is necessary, use transfer learning to build upon existing models rather than starting from zero.

Monitor your AI resource usage through available analytics tools and set personal sustainability goals. Many cloud providers now offer carbon footprint tracking features – use these to understand and improve your impact.

Finally, support companies and services that prioritize green AI initiatives and sustainable computing practices. Your choices as a consumer can influence the industry’s direction toward more environmentally conscious AI development.

The Future of Sustainable AI

Next-Generation Model Efficiency

The future of AI is becoming increasingly focused on sustainability, with developers and researchers working tirelessly to create more efficient models that minimize environmental impact. As next-generation AI technologies emerge, we’re seeing remarkable innovations in model architecture that promise to reduce energy consumption while maintaining or even improving performance.

One of the most promising developments is the rise of lightweight models that can run effectively on edge devices. These models require significantly less computational power than their predecessors, resulting in reduced energy consumption and a smaller carbon footprint. For instance, recent advances in model compression techniques have achieved up to 90% reduction in model size while retaining 95% of their original accuracy.
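A rough back-of-the-envelope calculation shows how size reductions of this order can arise when pruning and quantization are combined. The sketch below is an idealized estimate: it assumes pruned weights cost nothing to store and ignores the index overhead of sparse formats, and the 40% keep fraction is an illustrative input, not a figure from any specific model.

```python
def compressed_size_fraction(keep_fraction, bits_before=32, bits_after=8):
    """Idealized fraction of the original model size left after compression.

    keep_fraction: share of weights that survive pruning (0.0 to 1.0)
    bits_before / bits_after: bits per weight before and after quantization
    """
    if not 0.0 <= keep_fraction <= 1.0:
        raise ValueError("keep_fraction must be between 0 and 1")
    if bits_before <= 0 or bits_after <= 0:
        raise ValueError("bit widths must be positive")
    return keep_fraction * bits_after / bits_before


# Keep 40% of the weights and store them in 8 bits instead of 32
fraction = compressed_size_fraction(keep_fraction=0.4)
reduction_percent = (1.0 - fraction) * 100.0
print(f"Size reduction: {reduction_percent:.0f}%")
```

Under these assumptions, quantization alone from 32 to 8 bits gives a 75% reduction, and pruning a further 60% of the weights brings the total to about 90%.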

Researchers are also exploring novel approaches to neural network design, such as sparse architectures and dynamic pruning methods. These innovations allow AI models to adapt their resource usage to the complexity of the task at hand, much as the human brain does not devote its full capacity to simple tasks.
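The general idea of spending compute only where a task needs it can be illustrated with a model cascade, a simpler relative of the dynamic methods described above rather than an implementation of them. In this sketch a cheap model answers first and a larger one is called only when confidence is low; both "models" are toy stand-in functions invented for the example.

```python
def cascade_predict(x, small_model, large_model, confidence_threshold=0.9):
    """Try the cheap model first; escalate only when it is unsure.

    Each model is a callable returning (label, confidence).
    Returns (label, which_model_answered).
    """
    label, confidence = small_model(x)
    if confidence >= confidence_threshold:
        return label, "small"
    label, _ = large_model(x)
    return label, "large"


def toy_small(text):
    # Stand-in: confident only on short inputs
    confidence = 0.95 if len(text) < 20 else 0.6
    return ("positive" if "good" in text else "negative"), confidence


def toy_large(text):
    # Stand-in for a more capable, more expensive model
    positive = "good" in text or "great" in text
    return ("positive" if positive else "negative"), 0.99


print(cascade_predict("good", toy_small, toy_large))
print(cascade_predict("a long and great review of it", toy_small, toy_large))
```

If most inputs are easy, most requests never reach the expensive model, which is where the energy saving would come from.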

The integration of energy-aware training algorithms is another groundbreaking development. These algorithms optimize not just for accuracy but also for energy efficiency during the training process. Some implementations have shown energy savings of up to 70% compared to traditional training methods.

Hardware manufacturers are partnering with AI developers to create specialized chips that maximize efficiency. These purpose-built processors can run AI models with significantly lower power consumption than general-purpose hardware, making sustainable AI more accessible to everyday consumers.

Looking ahead, we can expect to see more emphasis on federated learning and distributed computing approaches that spread computational loads across multiple devices, reducing the strain on any single system while maintaining high performance standards. This shift towards more efficient architectures isn’t just good for the environment – it’s essential for making AI more accessible and sustainable in the long term.
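At the core of many federated learning setups is an averaging step: each device trains locally, and a coordinator combines the resulting weights in proportion to how much data each device saw. The sketch below shows that step on plain lists, assuming every client reports a weight vector of the same length; it leaves out local training, communication, and privacy mechanisms entirely.

```python
def federated_average(client_weights, client_sizes):
    """Average client weight vectors, weighted by local sample counts."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(client_weights[0])
    averaged = [0.0] * dim
    for weights, size in zip(client_weights, client_sizes):
        if len(weights) != dim:
            raise ValueError("all clients must report the same number of weights")
        for j, w in enumerate(weights):
            # Each client contributes in proportion to its share of the data
            averaged[j] += w * size / total
    return averaged


# Two hypothetical devices: the second holds three times as much data
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)
```

Only the weight vectors travel to the coordinator, so the raw data stays on each device.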

Industry Commitments and Standards

In recent years, major tech companies and industry leaders have made significant strides in addressing the environmental impact of AI and consumer technology. Companies like Google, Microsoft, and Amazon have pledged to achieve carbon neutrality or negative emissions within the next decade, setting new benchmarks for sustainable technology practices.

These commitments are backed by concrete initiatives, such as Microsoft’s $1 billion Climate Innovation Fund and Google’s achievement of matching 100% of its global electricity consumption with renewable energy purchases. Additionally, many companies are now adopting standardized reporting frameworks to measure and disclose their environmental impact, making it easier for consumers to make informed choices.

The tech industry has also established several voluntary standards and certifications. The Green Software Foundation, launched in 2021, provides guidelines for developing environmentally sustainable software. Meanwhile, the Energy Star program continues to evolve, with specifications that cover data center equipment such as servers and storage as well as data center facilities.

Regulatory bodies worldwide are implementing stricter environmental standards for technology companies. In the European Union, the European Green Deal and related digital policy are pushing tech companies to disclose their environmental impact and meet specific sustainability targets. Similar regulations are emerging in other regions, pushing the industry toward more sustainable practices.

Industry consortiums are working to develop common metrics for measuring AI’s environmental impact. MLCommons, the consortium behind the MLPerf benchmarks, for example, now includes power measurements alongside its performance evaluations. These standardized measurements help developers and consumers understand the environmental cost of different AI solutions.

Companies are also increasingly transparent about their data center operations, publishing regular environmental impact reports and setting specific targets for power usage effectiveness (PUE). This transparency enables consumers to make environmentally conscious choices when selecting cloud services or technology providers.
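PUE is the ratio of the total energy a facility draws to the energy that reaches its IT equipment, so a value of 1.0 would mean no overhead at all. The short sketch below computes it along with the implied overhead share; the energy figures in the example are invented for illustration.

```python
def power_usage_effectiveness(total_facility_kwh, it_equipment_kwh):
    """PUE = total facility energy / IT equipment energy (always >= 1.0)."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue):
    """Fraction of total energy spent on cooling, power delivery, and other overhead."""
    return 1.0 - 1.0 / pue


# Invented figures: 1,500 kWh drawn by the facility, 1,000 kWh used by IT equipment
pue = power_usage_effectiveness(1500.0, 1000.0)
print(f"PUE {pue:.2f}, overhead {overhead_share(pue):.1%} of total energy")
```

When comparing providers, a lower published PUE means a larger share of each kilowatt-hour goes to actual computing.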

These industry commitments and standards represent a crucial step toward sustainable AI development, though experts agree that continued progress and stricter enforcement mechanisms are necessary to achieve meaningful environmental impact reduction.

The relationship between consumerism and environmental impact is more complex than ever in our digital age. As we’ve explored throughout this article, our consumption patterns, particularly in technology and AI usage, have significant implications for our planet’s health. The energy demands of data centers, the electronic waste from frequent device upgrades, and the carbon footprint of cloud computing all contribute to environmental challenges.

However, there’s room for optimism. By making informed choices about our technology use, we can significantly reduce our environmental impact. Simple actions like optimizing our cloud storage, participating in e-waste recycling programs, and choosing energy-efficient devices can make a meaningful difference. Companies are increasingly adopting green computing practices, and consumers are becoming more conscious of their digital carbon footprint.

Moving forward, we must balance technological advancement with environmental responsibility. This means supporting companies that prioritize sustainable practices, extending the lifecycle of our devices, and being mindful of our digital consumption habits. As individuals, we can start by auditing our own technology use and making small but impactful changes in our daily routines.

The future of sustainable technology lies in our hands. By making conscious choices today, we can help ensure that technological progress doesn’t come at the expense of environmental health. Let’s commit to being more mindful consumers and advocates for sustainable technology practices.