Imagine teaching a robot to navigate a maze without giving it a map. Instead of programming every possible turn, you let it explore, rewarding successful moves and penalizing dead ends. Within minutes, the robot learns the optimal path through pure trial and error. This is the power of Q-learning neural networks, a breakthrough fusion of two transformative technologies that’s reshaping how machines learn to make decisions.

Q-learning neural networks combine deep learning’s pattern recognition capabilities with reinforcement learning’s decision-making framework. Traditional Q-learning uses tables to store every possible state-action combination, which becomes impossible when dealing with complex environments like video games or autonomous vehicles. Neural networks solve this limitation by approximating these values, enabling machines to handle millions of scenarios they’ve never encountered before.

Think of how people learn to play chess. You don’t memorize every possible board position. Instead, you learn patterns and strategies that apply broadly. Similarly, Q-learning neural networks extract general principles from experience, making intelligent predictions about unfamiliar situations. This approach powered DeepMind’s Deep Q-Network (DQN), which learned to play Atari games directly from raw pixels, and closely related deep reinforcement learning methods underpin systems such as AlphaGo and research on autonomous driving.

The technology matters because it bridges the gap between artificial intelligence theory and real-world application. Unlike supervised learning, which requires massive labeled datasets, Q-learning neural networks learn through interaction with their environment. They discover solutions humans might never imagine, optimizing everything from energy consumption in data centers to personalized medical treatments.

Whether you’re a student exploring machine learning fundamentals or a professional considering AI implementation, understanding Q-learning neural networks opens doors to one of the most dynamic fields in technology. This guide breaks down the concept into digestible pieces, revealing how this elegant combination of algorithms is transforming industries and pushing the boundaries of what machines can achieve.

The Rise of Machine Learning: From Simple Algorithms to Smart Systems

Why Neural Networks Changed Everything

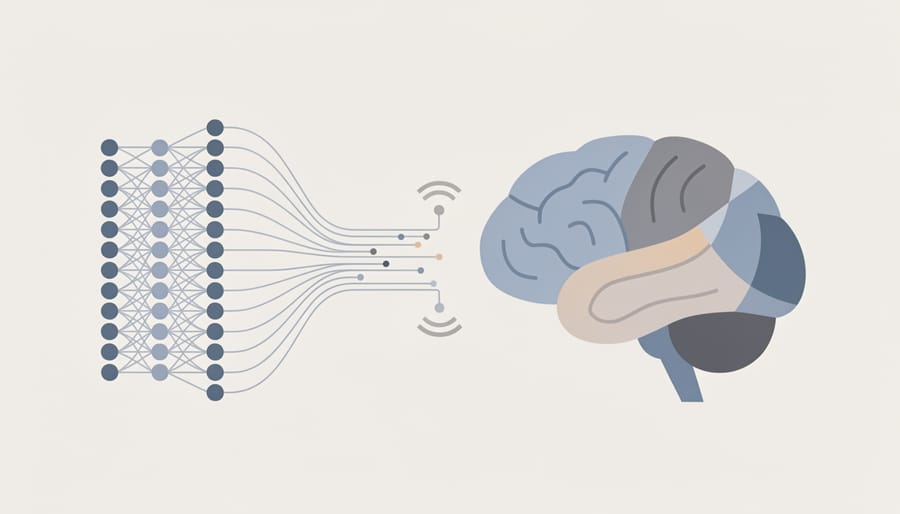

Think of your brain as a massive network of interconnected neurons, each one communicating with thousands of others to help you recognize faces, understand language, or catch a ball. Neural networks in computers are loosely modeled on this idea, and that design is a large part of why they revolutionized artificial intelligence.

At their core, neural networks are mathematical systems inspired by biological brains. Instead of biological neurons, they use layers of interconnected nodes (or artificial neurons) that process information in waves. When you show a neural network a picture of a cat, the first layer might detect edges, the second layer combines those edges into shapes like ears and whiskers, and deeper layers recognize these patterns as distinctly feline features.

Here’s what made neural networks game-changing: they learn from examples rather than following rigid, hand-coded rules. Before neural networks dominated AI, programmers had to explicitly tell computers every single rule for recognizing patterns. Imagine trying to write rules for identifying all possible cat images, a nearly impossible task. Neural networks flip this approach. You show them thousands of cat pictures labeled “cat” and dog pictures labeled “dog,” and they figure out the distinguishing features themselves through a process that adjusts the strength of connections between nodes.

This learning process is called training: the network gradually improves its performance through experience by optimizing its internal parameters, the connection strengths known as weights. Each time the network makes a mistake, it adjusts these connections slightly, becoming more accurate with practice.
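To make “adjusting connections slightly” concrete, here is a minimal sketch of a single artificial neuron with one weight, trained by gradient descent. The toy data, the learning rate, and the function name `train_neuron` are illustrative assumptions, not something taken from a particular library.

```python
# One artificial neuron with a single weight, trained by gradient descent.
def train_neuron(examples, learning_rate=0.1, epochs=50):
    weight = 0.0  # starting connection strength (arbitrary choice)
    for _ in range(epochs):
        for x, target in examples:
            prediction = weight * x
            error = prediction - target
            # The gradient of 0.5 * error**2 with respect to weight is error * x,
            # so each mistake nudges the weight a little in the right direction.
            weight -= learning_rate * error * x
    return weight

# Toy data generated from the hidden rule target = 2 * x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
learned_weight = train_neuron(examples)
print(round(learned_weight, 4))
```

A real network repeats the same idea across many weights at once, with backpropagation working out how much each weight contributed to the error.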

The breakthrough came when computing power and data availability reached critical thresholds in the 2010s. Suddenly, neural networks could tackle problems that had stumped AI researchers for decades—from beating world champions at complex games to understanding human speech with unprecedented accuracy.

What Makes Q-Learning Different (And Powerful)

The ‘Q’ Stands for Quality (But Not the Way You Think)

At the heart of Q-learning lies a surprisingly simple idea: what if we could assign a quality score to every action we might take in any given situation? That’s exactly what the Q-value does, and understanding it is your key to unlocking how these systems learn.

Think of Q-values like ratings in a video game guide. Imagine you’re playing a dungeon crawler, and at each room, you can go left, right, or straight. A perfect strategy guide would tell you: “In the blue room, going left gives you 85 points on average, right gives you 60 points, and straight gives you 40 points.” That’s essentially what Q-values represent—they’re predictions of future rewards for taking specific actions in specific states.

The Q-function works like a massive lookup table. For every situation the system encounters (called a state) and every possible action it could take, there’s a corresponding Q-value. Higher values mean better long-term outcomes. When a robot needs to decide whether to turn left or right, it simply checks which action has the higher Q-value for its current state and picks that one.

Here’s where it gets interesting: these values aren’t programmed in advance. The system learns them through experience, much like you’d learn which coffee shop makes the best latte by trying different ones. Each time the system takes an action and receives feedback (a reward or penalty), it updates the corresponding Q-value. Good outcomes increase the score, bad ones decrease it.

This trial-and-error process gradually builds a comprehensive quality map of the entire decision space, guiding the system toward smarter choices over time.
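The strategy-guide idea can be sketched as a small lookup table. The numbers below are the ones from the dungeon example above; the dictionary layout and the helper name `best_action` are assumptions made for illustration.

```python
# Q-values keyed by (state, action), using the dungeon example's numbers.
q_table = {
    ("blue room", "left"): 85.0,
    ("blue room", "right"): 60.0,
    ("blue room", "straight"): 40.0,
}
ACTIONS = ["left", "right", "straight"]

def best_action(q_table, state, actions):
    """Pick the action with the highest Q-value; unseen pairs default to 0."""
    return max(actions, key=lambda action: q_table.get((state, action), 0.0))

print(best_action(q_table, "blue room", ACTIONS))
```

Choosing an action is just a lookup followed by a comparison, which is why the hard part of Q-learning is learning good values rather than using them.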

Rewards, Penalties, and Learning from Mistakes

At the heart of Q-learning lies a beautifully simple concept: learn by doing, then adjust based on what happens. Think of it like teaching a dog new tricks. When your dog sits on command and gets a treat, it learns that sitting equals reward. When it jumps on guests and gets scolded, it learns that jumping equals penalty. Q-learning neural networks follow this same fundamental principle.

The reinforcement learning loop begins when the system takes an action in its environment. Let’s say we’re training an AI to play a video game. The neural network observes the current game state, perhaps seeing an enemy approaching from the right. Based on its current knowledge stored in the Q-values, it decides to move left. This is the action step.

Next comes the crucial feedback moment. The environment responds to this action by providing a reward signal. If moving left helped the character avoid damage, the system receives a positive reward, maybe a score of +10. If the move caused the character to fall into a pit, it receives a negative reward, perhaps -50. These numerical values tell the system whether its decision was good or bad.

Here’s where the magic happens. The neural network doesn’t just remember that one specific situation. Instead, it updates its understanding of similar situations. The Q-values, those predicted future rewards we discussed earlier, get adjusted based on this new experience. If the network expected a total of +5 from a move, but the reward it received plus its estimate of what comes next adds up to +10, it increases its Q-value estimate for that state-action pair. This adjustment happens through backpropagation, where the neural network fine-tunes its internal weights.

This cycle repeats thousands or millions of times. Each iteration provides another data point, another lesson learned. Gradually, the system develops intuition about which actions lead to success and which lead to failure, building a comprehensive strategy through trial, error, and continuous refinement.
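The adjustment step can be written down as the standard Q-learning update rule. The sketch below uses the simpler table form rather than a neural network, and the learning rate `alpha` and discount factor `gamma` are assumed values chosen for illustration.

```python
def q_update(q_table, state, action, reward, next_state, actions,
             alpha=0.1, gamma=0.9, done=False):
    """Move Q(state, action) a fraction alpha toward reward + gamma * best future value."""
    old_value = q_table.get((state, action), 0.0)
    if done:
        best_future = 0.0  # nothing follows a terminal state
    else:
        best_future = max(q_table.get((next_state, a), 0.0) for a in actions)
    target = reward + gamma * best_future
    q_table[(state, action)] = old_value + alpha * (target - old_value)
    return q_table[(state, action)]

# The network expected +5 for moving left but the move earned +10.
actions = ["left", "right"]
q_table = {("enemy on right", "left"): 5.0}
new_value = q_update(q_table, "enemy on right", "left", 10.0, "safe", actions)
print(new_value)
```

Here the estimate moves only part of the way from 5 toward 10, because a single experience is treated as evidence rather than as the final answer. In a Q-learning neural network, the same gap between target and prediction becomes the error signal that backpropagation reduces.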

When Neural Networks Meet Q-Learning: A Perfect Partnership

The Problem Q-Learning Had (And How Neural Networks Solved It)

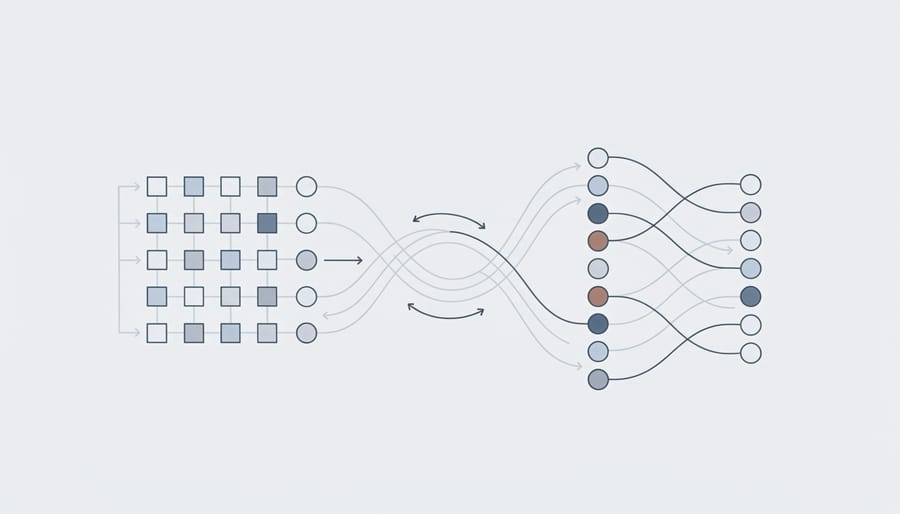

Traditional Q-learning works beautifully when dealing with simple problems, like teaching an agent to navigate a small grid world. Imagine a robot learning to move through a 10×10 room – you could create a table with just 100 positions and track which action works best in each spot. But what happens when you try to scale this up?

Here’s where the problem hit: real-world situations are rarely that simple. Consider teaching a computer to play a video game like Space Invaders. The screen alone contains tens of thousands of pixels, each with different color values. If you tried creating a traditional Q-table for this, you’d need to store values for every possible combination of pixel arrangements. That’s an astronomically large number, far more than all the atoms in the universe. Your computer would run out of memory before you even started learning.
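A quick back-of-the-envelope calculation shows how fast the table grows. The figures below are assumptions for illustration: four movement actions in the grid world, and a game frame of 210 by 160 pixels with 128 possible colors per pixel.

```python
import math

# Grid world: 10 x 10 positions, assuming 4 actions (up, down, left, right).
GRID_STATES = 10 * 10
ACTIONS_PER_STATE = 4
grid_table_entries = GRID_STATES * ACTIONS_PER_STATE

# Game screen: assumed 210 x 160 pixels with 128 possible colors each.
PIXELS = 210 * 160
COLORS = 128
# Number of decimal digits in COLORS ** PIXELS, computed with logarithms
# because the number itself is far too large to print comfortably.
digits_in_screen_count = math.floor(PIXELS * math.log10(COLORS)) + 1

# A commonly quoted estimate for atoms in the observable universe is
# about 10 ** 80, which is an 81-digit number.
ATOMS_IN_UNIVERSE_DIGITS = 81

print(grid_table_entries)
print(digits_in_screen_count > ATOMS_IN_UNIVERSE_DIGITS)
```

The grid world needs a few hundred table entries, while the count of possible screens is a number with tens of thousands of digits. Most of those screens never occur in a real game, but a table has no way to know that in advance.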

This scalability crisis plagued researchers throughout the 1990s and early 2000s. Q-learning could solve toy problems, but collapsed under the weight of anything resembling real-world complexity. The technique was sound in theory but practically useless for most interesting applications.

Then came the breakthrough that changed everything. Instead of storing a massive table, researchers realized they could use neural networks as function approximators. Think of it like this: rather than memorizing every single situation, the neural network learns the underlying patterns and generalizes from them. It’s similar to how you don’t need to memorize every possible chess position to play well – you learn principles and patterns that apply broadly.

This combination, enabled by computational breakthroughs in processing power, meant that Q-learning could finally tackle high-dimensional problems. The neural network takes in complex observations (like raw pixel data) and outputs Q-values for each possible action, all without needing impossible amounts of memory storage. This innovation transformed Q-learning from an academic curiosity into a practical powerhouse.
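As a rough sketch of “state in, one Q-value per action out,” here is a tiny two-layer network written in plain Python. The layer sizes, the random starting weights, and the class name `TinyQNetwork` are all assumptions for illustration; a practical system would use a deep learning library and far larger inputs.

```python
import random

def make_layer(n_inputs, n_outputs, rng):
    """Create a fully connected layer with small random weights and zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
               for _ in range(n_outputs)]
    biases = [0.0] * n_outputs
    return weights, biases

def relu(x):
    return max(0.0, x)

def dense(inputs, layer, activation=None):
    """Compute the weighted sums for one layer, optionally applying an activation."""
    weights, biases = layer
    outputs = []
    for row, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(row, inputs)) + bias
        outputs.append(activation(total) if activation else total)
    return outputs

class TinyQNetwork:
    def __init__(self, n_state_features, n_hidden, n_actions, seed=0):
        rng = random.Random(seed)
        self.hidden = make_layer(n_state_features, n_hidden, rng)
        self.output = make_layer(n_hidden, n_actions, rng)

    def q_values(self, state):
        """Return one estimated Q-value per action for the given state."""
        return dense(dense(state, self.hidden, relu), self.output)

network = TinyQNetwork(n_state_features=4, n_hidden=8, n_actions=3)
state = [0.2, 0.0, 1.0, 0.5]  # a made-up observation
q = network.q_values(state)
greedy_action = max(range(len(q)), key=lambda i: q[i])
print(q, greedy_action)
```

The memory cost depends on the number of weights, not on the number of possible states, which is what lets the same structure cope with inputs as large as a game screen. The weights here are untrained, so the outputs are meaningless until learning adjusts them.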

How a Q-Learning Neural Network Actually Works

Let’s imagine you’re teaching a robot to play a simple maze game where it needs to find treasure while avoiding traps. This is exactly how a Q-learning neural network learns, and walking through this example will help demystify the entire process.

When the game starts, the robot knows absolutely nothing. It doesn’t know which moves lead to treasure or which lead to traps. This is where Q-learning begins: with a completely blank slate. The robot starts making random moves, and here’s where the magic happens.

Every time the robot takes an action, like moving left or right, three important things occur. First, it observes its current situation (we call this the state). Second, it takes an action. Third, it receives feedback in the form of a reward: positive points for finding treasure, negative points for hitting traps, and small penalties for each step to encourage efficiency.

Here’s where the neural network enters the picture. Think of it as the robot’s brain, constantly learning from experience. The network takes in the current state of the game and outputs Q-values for each possible action. These Q-values are essentially predictions: “If I move left from here, how much reward will I likely get in the long run?”

Initially, these predictions are terrible because the network is just guessing. But with each move, something crucial happens. The robot compares what it predicted would happen with what actually happened. If it predicted moving right would give it 10 points but actually received 50 points for finding treasure, that’s valuable information.

The neural network then adjusts itself through a process called backpropagation, fine-tuning its internal connections to make better predictions next time. It’s learning to associate certain game situations with better or worse outcomes, building up knowledge move by move.

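The clever part is that Q-learning doesn’t just consider immediate rewards. Each update combines the reward just received with a discounted estimate of the best value available from the next state. When the robot finds treasure after moving right, that reward raises the value of the final move, and on later attempts the increase passes backward, one step at a time, to the moves that came before it. The neural network learns that even moves that didn’t immediately pay off were actually good decisions because they eventually led to treasure.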
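One way to see how a late reward reaches earlier moves is to apply the rule “value = reward + discount × value of the next step” backward along a path. The treasure reward of 50 comes from the example above; the discount factor of 0.9 and the absence of step penalties are simplifying assumptions.

```python
def backed_up_values(n_moves, final_reward, gamma):
    """Values of each move on a path whose last move collects final_reward."""
    values = []
    next_value = 0.0  # nothing follows the treasure
    for steps_from_end in range(n_moves):
        reward = final_reward if steps_from_end == 0 else 0.0
        value = reward + gamma * next_value
        values.append(value)
        next_value = value
    return values[::-1]  # first move first, treasure move last

values = backed_up_values(n_moves=3, final_reward=50.0, gamma=0.9)
print([round(v, 2) for v in values])
```

The move that collects the treasure is worth the full 50, the move before it a little less, and the one before that less again. Earlier moves still earn credit, just discounted by how far they are from the payoff.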

After thousands of game attempts, patterns emerge. The network learns that certain positions are dangerous while others are promising. What started as random wandering becomes strategic navigation, all without anyone explicitly programming the solution.
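The whole loop fits in a short program. To stay readable, this sketch swaps the neural network for a plain Q-table and shrinks the maze to a six-cell corridor with a trap at one end and treasure at the other. The rewards, learning rate, discount factor, and exploration rate are assumed values.

```python
import random

TRAP, TREASURE = 0, 5                 # terminal cells at the corridor's ends
ACTIONS = {"left": -1, "right": +1}

def step(state, action):
    """Return (next_state, reward, done) for one move."""
    next_state = state + ACTIONS[action]
    if next_state == TREASURE:
        return next_state, 50.0, True
    if next_state == TRAP:
        return next_state, -50.0, True
    return next_state, -1.0, False    # small penalty for every ordinary step

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(TRAP, TREASURE + 1) for a in ACTIONS}
    for _ in range(episodes):
        state = rng.randint(TRAP + 1, TREASURE - 1)   # random non-terminal start
        for _ in range(100):                          # cap the episode length
            if rng.random() < epsilon:                # occasionally explore
                action = rng.choice(list(ACTIONS))
            else:                                     # otherwise act greedily
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            # Compare the prediction with what happened and close part of the gap.
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = next_state
            if done:
                break
    return q

q = train()
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(1, 5)}
print(policy)
```

After training, the learned policy heads toward the treasure from every cell, even though nothing in the code says which direction is correct. A Q-learning neural network follows the same loop, with the table lookup replaced by a network prediction and the table update replaced by a backpropagation step.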

Real-World Applications You’ve Probably Encountered

Gaming AI That Beats Human Champions

Q-learning neural networks have achieved some of the most impressive victories in artificial intelligence, particularly in the gaming world where they’ve outperformed human champions in games once thought impossible for machines to master.

The most famous example of deep reinforcement learning in games is DeepMind’s AlphaGo. Strictly speaking, AlphaGo is a close relative rather than a Q-learning system: it combined deep policy and value networks with Monte Carlo tree search to play the ancient board game Go. In 2016, AlphaGo defeated Lee Sedol, one of the world’s top Go players, in a historic match that stunned the AI community. What made this achievement remarkable was Go’s complexity: with more possible board positions than atoms in the universe, the game requires intuition and strategic thinking that many believed computers couldn’t replicate. After initial training on human expert games, the system improved by playing large numbers of games against itself, gradually discovering winning strategies that sometimes surprised even expert players.

In video games, Q-learning neural networks have mastered classic Atari games like Breakout, Space Invaders, and Pong without being programmed with game-specific rules. These systems started as complete beginners, learning only from raw pixel inputs and game scores. Through repeated gameplay, they discovered advanced strategies, sometimes finding creative solutions humans hadn’t considered.

More recently, OpenAI’s Dota 2 bot and DeepMind’s StarCraft II agent demonstrated that deep reinforcement learning could handle real-time strategy games requiring split-second decisions, resource management, and adaptation to opponent strategies. These agents were trained with other reinforcement learning algorithms rather than Q-learning itself, but their victories showcase how the same core idea, neural networks learning from rewards, can tackle problems with immense complexity, producing sophisticated behaviors through experience rather than explicit programming.

Robotics and Self-Driving Technology

Imagine a self-driving car approaching a busy intersection. It needs to decide whether to proceed, slow down, or stop, all while considering pedestrians, traffic lights, and other vehicles. This is where Q-learning neural networks become invaluable for robotics applications and autonomous vehicles.

In these systems, Q-learning helps machines learn optimal navigation strategies through trial and error. A robot or autonomous vehicle starts with no knowledge of its environment, but through repeated interactions, it learns which actions lead to successful outcomes. For example, a delivery robot learns that turning left at a specific corridor leads to its destination faster, while a self-driving car discovers the safest way to merge into highway traffic.

The neural network component enhances this learning by processing complex sensory data like camera feeds, lidar scans, and radar signals. Instead of memorizing every possible scenario, the network generalizes from past experiences to handle new situations. When a self-driving car encounters an unfamiliar road configuration, it can still make informed decisions based on similar situations it learned from previously.

This combination enables real-time decision-making in dynamic environments, making autonomous systems more adaptable and safer as they continue learning from each journey.

Personalization Engines and Recommendation Systems

Every time you open Netflix and see tailored movie suggestions, or when Spotify seems to read your mind with its playlist recommendations, you’re looking at the kind of problem that reinforcement learning techniques such as Q-learning neural networks are well suited to. Learning-based recommendation systems have transformed how digital platforms interact with users, creating personalized experiences that feel almost magical.

Here’s how it works in practice: imagine you’re using a music streaming app. The recommendation engine observes your behavior—which songs you skip, which you replay, what time of day you listen to different genres. It treats each recommendation as an action that either earns a reward (you listen and enjoy) or a penalty (you skip immediately). Through continuous interaction, the neural network learns your unique preferences and adjusts its suggestions accordingly.

Shopping platforms like Amazon can apply similar ideas to optimize product recommendations. Such a system doesn’t just look at what you’ve bought before; it learns from your browsing patterns, how long you view certain items, and even what you add to your cart but don’t purchase. Each interaction teaches the network something new about your shopping habits.

What makes these systems particularly powerful is their ability to balance exploration and exploitation—showing you familiar content you’ll likely enjoy while occasionally introducing new items that might surprise you. This keeps your experience fresh and engaging while maintaining high satisfaction rates. The result is a personalized digital environment that continuously adapts to your evolving tastes and preferences.

The Challenges (Because Nothing’s Perfect)

Training Time and Computational Costs

Training Q-learning neural networks requires significant computational resources, which can make them both expensive and time-consuming to develop. Understanding these requirements helps set realistic expectations for anyone looking to implement these systems.

The primary challenge lies in the iterative nature of reinforcement learning. Unlike supervised learning where you train on a fixed dataset, Q-learning agents must interact with their environment millions of times to learn effective strategies. Imagine teaching someone to play chess by having them play millions of games—that’s essentially what’s happening here, except a computer is both the student and practice partner.

Each interaction requires the neural network to process the current state, select an action, observe the outcome, and update its understanding. When you multiply this by millions of training episodes, the computational demands add up quickly. Training a basic Q-learning agent to master a simple video game might take several hours on a standard computer, while more complex tasks like robotic control or autonomous driving simulations can require days or weeks on powerful GPU clusters.

The costs extend beyond just processing power. Storing experience replay buffers, which hold millions of past experiences for the agent to learn from, demands substantial memory. Cloud computing services might charge hundreds or thousands of dollars for the resources needed to train sophisticated Q-learning systems. For researchers and hobbyists, this creates a barrier to entry that wasn’t as pronounced with traditional machine learning approaches working with static datasets.
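An experience replay buffer is conceptually simple, which makes its memory cost easy to reason about. The sketch below is a minimal version; the class name `ReplayBuffer`, its method names, and the tiny capacity are assumptions for illustration.

```python
import random
from collections import deque

class ReplayBuffer:
    """Fixed-size store of past experiences; the oldest are dropped when full."""

    def __init__(self, capacity, seed=None):
        self.memory = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def add(self, state, action, reward, next_state, done):
        self.memory.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        """Return a random batch of stored experiences to learn from."""
        return self.rng.sample(list(self.memory), batch_size)

    def __len__(self):
        return len(self.memory)

buffer = ReplayBuffer(capacity=3, seed=0)
for t in range(5):
    buffer.add(state=t, action="right", reward=-1.0, next_state=t + 1, done=False)
batch = buffer.sample(2)
print(len(buffer), batch)
```

With a capacity of three, only the three most recent experiences survive. Scale the capacity to millions of entries, each holding full game frames, and the memory demands described above follow directly.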

The Exploration vs. Exploitation Dilemma

One of the most fascinating challenges in Q-learning, and reinforcement learning in general, is deciding when to explore new possibilities versus exploit what already works. Imagine you’ve discovered a restaurant you really enjoy. Do you keep going back to that same place because you know it’s good, or do you try new restaurants that might be even better but could also disappoint?

This is precisely the dilemma AI agents face during training. An agent using Q-learning must decide whether to take actions it believes will yield high rewards based on current knowledge (exploitation) or try different actions to potentially discover better strategies (exploration). Too much exploitation means the agent might miss superior solutions it hasn’t discovered yet. Too much exploration wastes time on clearly poor choices.

Consider a robot learning to navigate a warehouse. If it only exploits its current knowledge, it might repeatedly use a familiar path that takes five minutes, never discovering an alternate route that takes only three minutes. Conversely, if it constantly explores random paths, it wastes valuable time testing obviously inefficient routes.

The solution involves using techniques like epsilon-greedy strategies, where the agent exploits most of the time but occasionally explores randomly. This balance ensures continuous learning while maintaining good performance, allowing the neural network to refine its understanding and discover optimal strategies over time.
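An epsilon-greedy rule takes only a few lines. In this sketch, `epsilon` is the probability of exploring; the Q-values and the value 0.1 are made up for the example.

```python
import random

def epsilon_greedy(q_values, epsilon, rng=random):
    """Return an action index: random with probability epsilon, else the best one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))                     # explore
    return max(range(len(q_values)), key=lambda i: q_values[i])  # exploit

rng = random.Random(0)
q_values = [1.0, 5.0, 2.0]   # action 1 currently looks best
choices = [epsilon_greedy(q_values, epsilon=0.1, rng=rng) for _ in range(1000)]
share_of_best = choices.count(1) / len(choices)
print(share_of_best)
```

With `epsilon` at 0.1 the agent picks its current favorite most of the time while still sampling the alternatives now and then. A common refinement is to start with a high `epsilon` and lower it as training progresses, so the agent explores widely early on and settles down later.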

Where Q-Learning Neural Networks Are Headed Next

The future of Q-learning neural networks is unfolding in exciting directions that promise to reshape how machines learn and interact with the world. As researchers push the boundaries of what’s possible, several emerging trends are gaining momentum.

One major area of development is multi-agent reinforcement learning, where multiple AI systems learn to cooperate or compete with each other. Imagine autonomous vehicles negotiating traffic patterns together, or robotic teams coordinating warehouse operations. These systems use enhanced Q-learning architectures to understand not just their own actions, but how their decisions affect other agents in shared environments.

Transfer learning is another frontier that’s capturing attention. Current Q-learning systems often need to start from scratch when tackling new problems, but researchers are developing methods that allow knowledge gained in one domain to accelerate learning in another. Think of it like teaching an AI to play chess and then having it apply those strategic thinking skills to resource management games with far less training time.

The integration of Q-learning with other AI approaches is creating hybrid systems with remarkable capabilities. Combining Q-learning with natural language processing could enable robots that learn from both actions and verbal instructions. Meanwhile, merging it with computer vision advances is producing systems that can navigate complex visual environments with unprecedented efficiency.

Energy efficiency is becoming a critical focus as well. Scientists are designing lightweight Q-learning algorithms that can run on edge devices like smartphones and IoT sensors, bringing intelligent decision-making capabilities to everyday gadgets without requiring cloud connectivity.

Healthcare applications are particularly promising. Researchers are exploring how Q-learning networks could personalize treatment plans, optimize hospital resource allocation, and even assist in surgical planning by learning from thousands of past procedures.

As AI’s impact continues to grow, Q-learning neural networks will likely become more explainable and transparent. This addresses a crucial need in regulated industries where understanding why an AI made specific decisions matters as much as the decision itself. The next decade promises breakthroughs that will make these systems more accessible, powerful, and integrated into our daily lives.

Q-learning neural networks represent far more than just another algorithm in the artificial intelligence toolkit—they embody a fundamental shift in how machines learn to solve problems. Rather than relying on explicit programming where developers painstakingly code every possible scenario and response, these systems learn through experience, trial and error, and the accumulation of knowledge over time. Much like how you learned to ride a bike by falling, adjusting, and eventually mastering balance, Q-learning neural networks discover optimal strategies by exploring their environment and learning from the consequences of their actions.

This experiential approach to machine learning opens doors that traditional programming simply cannot. We’ve seen this technology guide robots through complex environments, optimize warehouse logistics, create unbeatable game-playing agents, and even contribute to advances in healthcare and autonomous vehicles. The beauty lies in its adaptability—these networks don’t need to be reprogrammed for every new situation. Instead, they develop understanding through interaction, making them remarkably versatile problem-solvers.

As artificial intelligence continues its rapid evolution, Q-learning neural networks will undoubtedly play an increasingly significant role in shaping our technological future. From smarter personal assistants to more efficient energy grids, the applications are limited only by our imagination and the problems we choose to tackle.

If you’re excited about diving deeper into this fascinating field, Ask Alice offers a wealth of resources designed to guide you through the ever-expanding world of artificial intelligence and machine learning. Whether you’re taking your first steps into AI or looking to expand your existing knowledge, understanding Q-learning neural networks provides a solid foundation for exploring what’s possible when machines learn to learn. The future of intelligent systems is being written today, and now you’re better equipped to be part of that story.