Imagine asking a computer in 1956 to recognize a cat in a photograph. It would have failed spectacularly. Today, your smartphone does this effortlessly while you scroll through social media. This dramatic shift represents one of the most profound technological transformations in human history: the evolution of how artificial intelligence stores, organizes, and uses knowledge.

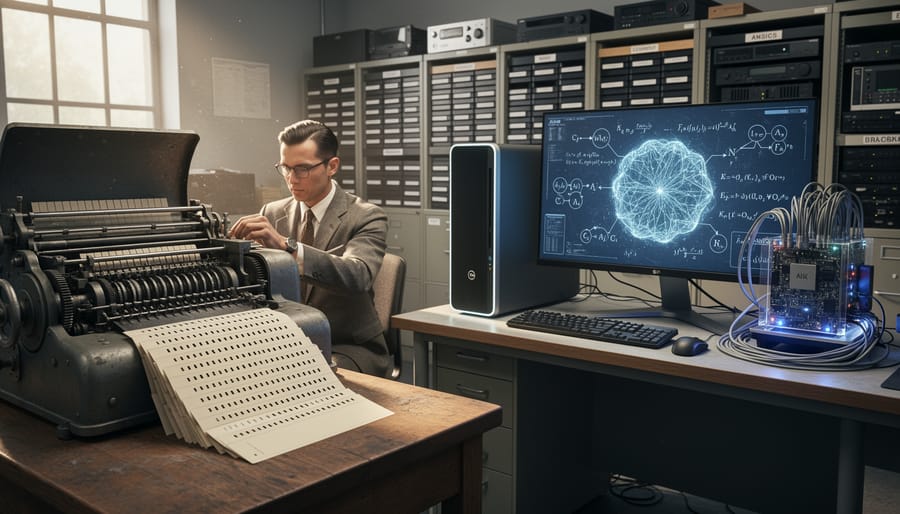

The journey from early programs that played checkers to neural networks that compose music and help diagnose diseases reveals a fundamental revolution in machine thinking. Early AI researchers believed intelligence meant encoding human expertise into rigid logical rules. They were partially right but dramatically underestimated the complexity of human knowledge. Even a simple fact such as “a hot stove burns” had to be spelled out by hand, together with the situations in which it applied, and the resulting systems were brittle, collapsing when they faced situations their programmers had not anticipated.

The breakthrough came when researchers stopped trying to explicitly program knowledge and instead created systems that could learn patterns from data. This shift from telling machines what to know to teaching them how to learn transformed AI from an academic curiosity into a technology reshaping every industry.

Understanding this evolution matters because modern AI touches your daily life constantly. The autocomplete finishing your sentences, the fraud detection protecting your bank account, and the recommendation engine suggesting your next favorite show all rely on knowledge representation methods developed over seven decades of experimentation, failure, and breakthrough innovation.

The Beginning: When AI Spoke in Rules and Logic

The Birth of Symbolic Reasoning

The story of AI begins not with data or neurons, but with symbols and logic. In the 1950s and 1960s, pioneering researchers like Allen Newell, Herbert Simon, and John McCarthy believed that human intelligence could be replicated by manipulating symbols according to formal rules. Think of it like solving a puzzle where each piece represents a concept, and rules determine how pieces fit together.

These early symbolic AI systems worked by creating explicit representations of knowledge. For example, if you wanted to teach a computer about animals, you might encode rules like “IF an animal has feathers AND lays eggs THEN it is a bird.” The computer would then use logical reasoning to draw conclusions from these predefined rules.
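To make the idea concrete, here is a minimal Python sketch of how such a system might apply its rules. It uses forward chaining: keep firing any rule whose conditions are all known facts until nothing new can be concluded. The fact and rule names (`has_feathers`, `is_vertebrate`, and so on) are illustrative and not taken from any historical system.

```python
def forward_chain(facts, rules):
    """Repeatedly fire rules whose conditions are all satisfied until no new facts appear."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires only if every condition is already a known fact.
            if conclusion not in known and conditions <= known:
                known.add(conclusion)
                changed = True
    return known


# Each rule is (set of conditions, conclusion): IF all conditions THEN conclusion.
RULES = [
    ({"has_feathers", "lays_eggs"}, "is_bird"),
    ({"is_bird"}, "is_vertebrate"),  # chains off the conclusion of the first rule
]

print(sorted(forward_chain({"has_feathers", "lays_eggs"}, RULES)))
# ['has_feathers', 'is_bird', 'is_vertebrate', 'lays_eggs']
```

Note that an input the rules never mention simply produces no conclusion: the system knows only what it was explicitly told.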

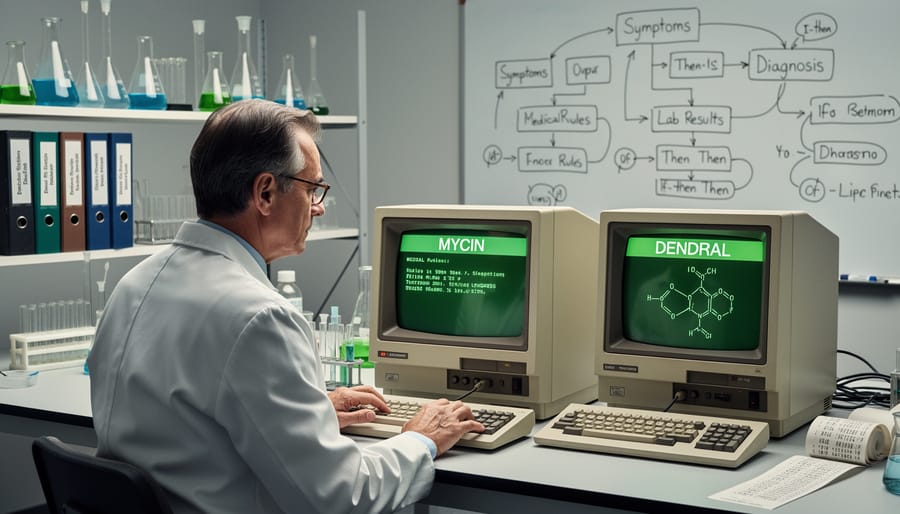

The most successful application of this approach came through expert systems in the 1970s and 1980s. MYCIN, developed at Stanford University, diagnosed bacterial infections by following a series of if-then rules created by medical experts. It would ask questions about symptoms, apply its rule-based knowledge, and recommend antibiotics with impressive accuracy.

Another notable example was DENDRAL, which helped chemists identify molecular structures. These systems proved that computers could solve complex problems in specialized domains, but they had a fundamental limitation: someone had to manually encode every piece of knowledge and every rule. As problems grew more complex, this approach became increasingly impractical, setting the stage for new methods of knowledge representation.

Why Rules Alone Weren’t Enough

By the 1980s, researchers had poured decades into building AI systems based on pure logic and rules. These expert systems could diagnose diseases, configure computer systems, and solve mathematical problems—but only within carefully controlled boundaries. The real world, it turned out, was far messier than anyone anticipated.

Consider MYCIN, a medical diagnosis system from Stanford University. It worked brilliantly for identifying bacterial infections, but ask it about a patient with multiple conditions, and it struggled. The system couldn’t handle unexpected situations or common-sense reasoning that any human doctor would grasp intuitively.

This challenge became known as the “frame problem”: how do you tell a computer which facts matter, and which stay the same, in any given situation? When you open a door, thousands of things in the room don’t change position, but a naive logic-based system needs an explicit statement that each unchanged item stays put. Because the number of such statements grows with every action and every fact the system tracks, the approach quickly became impractical.
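A small Python sketch shows how quickly those explicit statements pile up. It writes out, in a notation loosely modeled on the situation calculus (the logic in which the frame problem was first posed), one “frame axiom” for every action and every fact that action leaves unchanged. The room, its objects, and the two actions are hypothetical.

```python
def frame_axioms(actions, fluents):
    """List one frame axiom per (action, fact) pair where the action leaves the fact unchanged."""
    axioms = []
    for action, effects in actions.items():
        for fluent in fluents:
            if fluent not in effects:
                # "If the fact held before the action, it still holds afterward."
                axioms.append(f"holds({fluent}, s) -> holds({fluent}, do({action}, s))")
    return axioms


# Hypothetical room: a door, a lamp, and 100 objects that simply stay where they are.
FLUENTS = ["door_open", "lamp_on"] + [f"object_{i}_in_place" for i in range(100)]
ACTIONS = {
    "open_door": {"door_open"},  # the only fact this action changes
    "switch_lamp": {"lamp_on"},
}

axioms = frame_axioms(ACTIONS, FLUENTS)
print(len(axioms))  # 202: two actions x 101 unchanged facts each
```

Adding one more action or one more object adds another batch of axioms, which is why researchers looked for ways to say “everything else stays the same” without listing it.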

Then there was brittleness. Rule-based systems were like fragile glass sculptures—add one unexpected variable, and they shattered. A chess program could beat grandmasters but couldn’t recognize a picture of a chess piece. These limitations of expert systems became painfully obvious in real-world applications.

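The late 1980s brought a reckoning. In 1987 the market for specialized Lisp machines, the workstations on which much AI software ran, collapsed, and as businesses found expert systems expensive to build and maintain, corporate and government funding dried up, ushering in what is now called the second “AI winter.” The AI community faced a hard truth: if machines were to truly understand our world, they needed more than logic; they needed to learn from experience.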

The Knowledge Engineering Era: Building AI’s First Libraries

Expert Systems Take Center Stage

During the 1970s and 1980s, AI researchers made a crucial discovery: instead of trying to create machines with general intelligence, they could build systems that excelled in specific domains by capturing the knowledge of human experts. These expert systems marked a turning point in how AI stored and used information.

MYCIN, developed at Stanford University in the early 1970s, became one of the most celebrated examples. This groundbreaking system diagnosed blood infections and recommended antibiotics by encoding the knowledge of infectious disease specialists into hundreds of if-then rules. What made MYCIN remarkable was its ability to explain its reasoning process, showing doctors why it reached particular conclusions. In clinical tests, MYCIN often matched or exceeded the diagnostic accuracy of human experts, though it never entered routine clinical use due to ethical and legal concerns about medical AI.
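MYCIN handled uncertainty with “certainty factors,” numbers between -1 and 1 attached to rules and conclusions. The Python sketch below follows that scheme as it is commonly described: a rule fires only if its weakest premise is above 0.2, its conclusion receives the rule’s factor scaled by that premise, and two pieces of evidence for the same conclusion are combined so that belief rises without exceeding 1. It also records which rules fired, echoing MYCIN’s ability to explain its reasoning. The rules, findings, and `organism_a`/`organism_b` labels are hypothetical placeholders, not MYCIN’s actual knowledge base, and the sketch makes a single pass without chaining rules together.

```python
from dataclasses import dataclass

THRESHOLD = 0.2  # premises at or below this certainty do not trigger a rule


@dataclass
class Rule:
    name: str
    premises: list
    conclusion: str
    cf: float  # how strongly the rule supports its conclusion


def combine(cf1, cf2):
    """Combine two certainty factors for the same conclusion."""
    if cf1 >= 0 and cf2 >= 0:
        return cf1 + cf2 * (1 - cf1)
    if cf1 < 0 and cf2 < 0:
        return cf1 + cf2 * (1 + cf1)
    denominator = 1 - min(abs(cf1), abs(cf2))
    # Undefined when one factor is +1 and the other -1; this sketch returns 0.0 then.
    return (cf1 + cf2) / denominator if denominator else 0.0


def infer(evidence, rules):
    """One pass over the rules; returns conclusion certainties and an explanation trace."""
    conclusions, trace = {}, []
    for rule in rules:
        premise_cf = min(evidence.get(p, 0.0) for p in rule.premises)  # AND = weakest link
        if premise_cf <= THRESHOLD:
            continue
        new_cf = rule.cf * premise_cf
        old_cf = conclusions.get(rule.conclusion)
        conclusions[rule.conclusion] = new_cf if old_cf is None else combine(old_cf, new_cf)
        trace.append(f"{rule.name}: {' AND '.join(rule.premises)} -> {rule.conclusion} ({new_cf:.2f})")
    return conclusions, trace


RULES = [
    Rule("R1", ["gram_negative", "rod_shaped", "anaerobic"], "organism_a", 0.6),
    Rule("R2", ["gram_negative", "hospital_acquired"], "organism_a", 0.4),
    Rule("R3", ["fever"], "organism_b", 0.5),
]
EVIDENCE = {"gram_negative": 0.9, "rod_shaped": 0.8, "anaerobic": 0.6,
            "hospital_acquired": 0.7, "fever": 0.1}

conclusions, trace = infer(EVIDENCE, RULES)
print(round(conclusions["organism_a"], 4))  # 0.5392
for line in trace:
    print(line)  # the "why" behind the conclusion
```

R1 contributes 0.36 and R2 contributes 0.28; combined, the belief in `organism_a` rises to about 0.54, while R3 never fires because its only premise is too uncertain.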

Earlier still, DENDRAL, begun at Stanford in 1965, helped scientists identify the molecular structure of unknown compounds. By analyzing mass spectrometry data and applying rules about chemical bonds and molecular behavior, DENDRAL could suggest possible structures that human chemists might take hours or days to determine. The system demonstrated how AI could serve as a powerful assistant in scientific discovery.
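DENDRAL is often cited as an early example of “generate and test”: propose candidate answers systematically, then use chemical knowledge to discard the impossible ones. The Python sketch below applies that pattern at a much simpler level than DENDRAL’s structure generator, listing the molecular formulas built from carbon, hydrogen, and oxygen that match a nominal molecular mass and pruning any formula whose degree of unsaturation (rings plus double bonds) is negative or fractional. It is a toy illustration of the idea, not DENDRAL’s algorithm, and the function names are hypothetical.

```python
# Nominal (integer) atomic masses for the elements this toy considers.
MASS = {"C": 12, "H": 1, "O": 16}


def formula_string(c, h, o):
    """Write a formula like C2H6O, omitting zero counts and counts of one."""
    parts = []
    for symbol, count in (("C", c), ("H", h), ("O", o)):
        if count:
            parts.append(symbol if count == 1 else f"{symbol}{count}")
    return "".join(parts)


def candidate_formulas(nominal_mass):
    """Generate CxHyOz formulas with the given mass; keep only chemically possible ones."""
    results = []
    for c in range(1, nominal_mass // MASS["C"] + 1):  # generate: every carbon count...
        for o in range((nominal_mass - c * MASS["C"]) // MASS["O"] + 1):  # ...and oxygen count
            h = nominal_mass - c * MASS["C"] - o * MASS["O"]  # hydrogens fill the rest
            # Test: rings plus double bonds = c - h/2 + 1 must be a whole number >= 0.
            if h % 2 == 0 and c - h // 2 + 1 >= 0:
                results.append(formula_string(c, h, o))
    return results


print(candidate_formulas(44))  # ['CO2', 'C2H4O', 'C3H8']
print(candidate_formulas(46))  # ['CH2O2', 'C2H6O']
```

Even this toy shows why more knowledge is needed: a mass of 44 alone fits carbon dioxide, propane, and C2H4O compounds such as acetaldehyde, so a real system must use further evidence from the spectrum to choose among them.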

These expert systems represented knowledge as a collection of rules and facts stored in knowledge bases. While this approach worked well for specialized tasks, it had limitations. The systems struggled when faced with situations outside their programmed expertise, and updating their knowledge often required extensive manual effort from both domain experts and programmers. Nevertheless, expert systems proved that AI could deliver practical value in real-world applications.

The Quest for Common Sense: CYC and Beyond

In 1984, computer scientist Douglas Lenat launched one of AI’s most ambitious undertakings: the CYC project. The idea was deceptively simple yet monumentally challenging. If computers struggled with common-sense reasoning, why not teach them everything humans know about everyday life?

CYC (short for “encyclopedia”) aimed to encode millions of common-sense facts into a massive, hand-built knowledge base: that water flows downhill, that people get wet when it rains, that you can’t be in two places at once. These seem like obvious truths, but computers had no way to know them unless someone wrote them down explicitly.

The promise was tremendous. Imagine a computer that could truly understand context, make reasonable assumptions, and navigate the world with human-like intuition. Search engines would grasp what you really meant. Virtual assistants would anticipate your needs. Robots would handle unexpected situations gracefully.

The reality proved far more difficult. After decades of work and millions of dollars invested, CYC struggled to deliver practical applications. The core problem was scale and complexity. Common sense knowledge turned out to be nearly infinite. For every fact entered, dozens of exceptions and edge cases emerged. How do you encode cultural nuances? What about metaphors, sarcasm, or context-dependent meanings?
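The exception problem is easy to see in miniature. The Python sketch below stores a general default (“birds can fly”), overrides it for more specific categories and individuals, and looks up a property by walking from the most specific entry to the most general. It is a generic inheritance-with-exceptions scheme, not CycL, Cyc’s actual representation language, and the entries and names are illustrative.

```python
# Category hierarchy: each entry points to its more general parent.
IS_A = {"sparrow": "bird", "penguin": "bird", "ostrich": "bird", "bird": "animal"}

# Properties asserted at each level; more specific entries override general ones.
PROPERTIES = {
    "bird": {"can_fly": True},       # the general default
    "penguin": {"can_fly": False},   # exceptions, each entered by hand
    "ostrich": {"can_fly": False},
}

# Individuals: their category plus any individual-level exceptions.
INDIVIDUALS = {
    "tweety": ("sparrow", {}),
    "pip": ("sparrow", {"can_fly": False}),  # e.g. a sparrow with an injured wing
}


def lookup(entity, prop):
    """Return the most specific known value of prop, or None if nothing says."""
    kind = entity
    if entity in INDIVIDUALS:
        kind, overrides = INDIVIDUALS[entity]
        if prop in overrides:
            return overrides[prop]
    while kind is not None:
        if prop in PROPERTIES.get(kind, {}):
            return PROPERTIES[kind][prop]
        kind = IS_A.get(kind)  # climb to the more general category
    return None


print(lookup("tweety", "can_fly"))   # True, inherited from "bird"
print(lookup("pip", "can_fly"))      # False, individual exception
print(lookup("penguin", "can_fly"))  # False, category exception
```

Every penguin, ostrich, and injured sparrow had to be anticipated and typed in; multiply that by every default in everyday life and the scale of the task becomes clear.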

Manual knowledge entry became a Sisyphean task. The team could never type fast enough to capture the richness of human understanding. Similar projects faced identical challenges, revealing a fundamental limitation: some knowledge might be better learned through experience than explicitly programmed.

While CYC didn’t revolutionize AI as hoped, it provided valuable lessons. The struggle highlighted why later approaches would emphasize machine learning over hand-coded knowledge, letting computers discover patterns from data rather than requiring humans to spell out every detail.

The Statistical Revolution: When AI Started Learning from Data

From Rules to Patterns

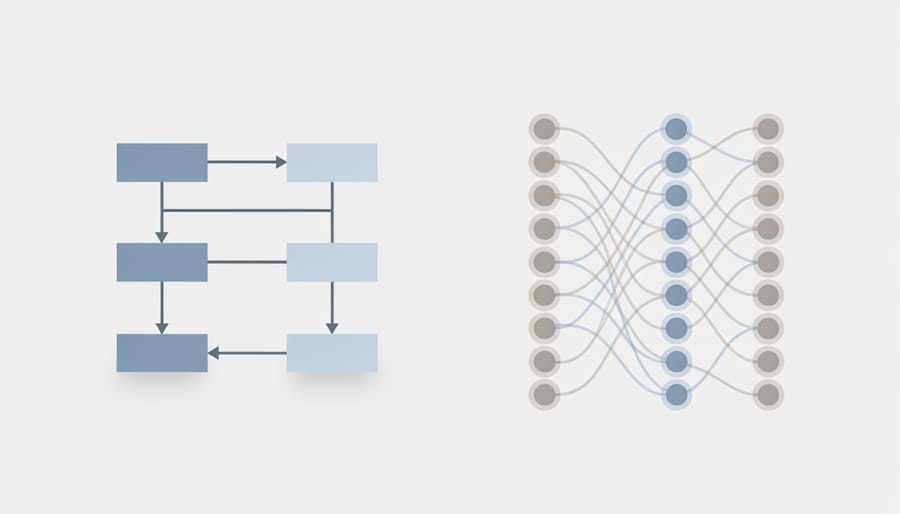

For decades, AI systems operated like massive rule books. Programmers painstakingly wrote instructions for every possible scenario, creating what we now call “expert systems.” If this happens, then do that. It was exhausting work, and the systems couldn’t handle anything their creators hadn’t explicitly programmed.

Then came a revolutionary idea: what if computers could learn patterns from examples instead?

Email spam filters illustrate the shift well. In the early days, someone would manually write rules like “if an email contains the word ‘prize’ or the phrase ‘free money,’ mark it as spam.” But spammers quickly adapted, finding creative ways around these rigid rules. Modern spam filters work differently. They analyze thousands of emails that users have marked as spam or legitimate, identifying subtle patterns in word choice, sender behavior, and message structure. The filter learns what spam looks like without anyone programming specific rules.
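One classic way to learn such patterns is a naive Bayes classifier, which estimates how often each word appears in spam versus legitimate mail and combines those estimates for a new message. The Python sketch below trains one on six made-up messages; the training examples are hypothetical, and real filters use far more data and many more signals than word counts.

```python
import math
from collections import Counter

# Hypothetical training messages labeled by users.
TRAIN = [
    ("win a free prize now", "spam"),
    ("claim your free money today", "spam"),
    ("limited offer win cash now", "spam"),
    ("meeting notes for monday", "ham"),
    ("can we move our meeting to today", "ham"),
    ("lunch on monday with the team", "ham"),
]


def train(examples):
    """Count words per class and messages per class."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    class_counts = Counter()
    for text, label in examples:
        word_counts[label].update(text.split())
        class_counts[label] += 1
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, class_counts, vocab


def classify(text, word_counts, class_counts, vocab):
    """Pick the class with the highest log-probability (add-one smoothing for unseen words)."""
    total_messages = sum(class_counts.values())
    scores = {}
    for label, counts in word_counts.items():
        total_words = sum(counts.values())
        score = math.log(class_counts[label] / total_messages)  # prior
        for word in text.split():
            score += math.log((counts[word] + 1) / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(TRAIN)
print(classify("free cash prize", *model))      # spam
print(classify("team meeting monday", *model))  # ham
```

No one wrote a rule mentioning “cash”: the classifier picked up that association from the labeled examples.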

This paradigm shift transformed AI fundamentally. Instead of encoding knowledge manually, developers could feed systems large datasets and let them discover patterns independently. A medical diagnosis system no longer needed doctors to write endless if-then rules. Instead, it could learn from thousands of patient records, finding connections human experts might miss.

This approach, called machine learning, proved far more flexible and powerful. Systems could adapt to new situations, improve with experience, and handle the messy complexity of real-world data that rule-based systems struggled with.

Knowledge Graphs: The Best of Both Worlds

As AI researchers grappled with the limitations of both rigid rule-based systems and opaque neural networks, a promising middle ground emerged: knowledge graphs. Think of these as databases that store not just facts but explicit relationships between them, creating a web of interconnected information that machines can navigate and reason over.

Knowledge graphs combine the best features of both worlds. Like traditional databases, they maintain structured, verifiable information that humans can inspect and update. But unlike simple lists of facts, they organize knowledge as a network of entities (people, places, things) connected by meaningful relationships. This structure allows AI systems to make logical inferences while machine learning algorithms can help identify patterns and suggest new connections.

Google’s Knowledge Graph, launched in 2012, perfectly illustrates this hybrid approach in action. When you search for “Leonardo da Vinci,” you don’t just get a list of web pages. Instead, Google instantly displays a rich information panel showing he was an Italian Renaissance polymath, born in 1452, known for paintings like the Mona Lisa, and connected to contemporaries like Michelangelo. The system understands that da Vinci is a person, the Mona Lisa is an artwork he created, and Renaissance Italy is a historical period and place.
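Under the hood, a knowledge graph can be as simple as a set of (subject, relation, object) triples. The Python sketch below encodes the da Vinci facts from the paragraph above this way, answers simple queries, and derives one new fact with a rule (if A is a contemporary of B, then B is a contemporary of A). The relation names are hypothetical; this is not Google’s actual schema.

```python
# A tiny knowledge graph as (subject, relation, object) triples.
TRIPLES = {
    ("Leonardo da Vinci", "instance_of", "Person"),
    ("Leonardo da Vinci", "born_in_year", 1452),
    ("Leonardo da Vinci", "created", "Mona Lisa"),
    ("Leonardo da Vinci", "lived_during", "Italian Renaissance"),
    ("Leonardo da Vinci", "contemporary_of", "Michelangelo"),
    ("Mona Lisa", "instance_of", "Artwork"),
    ("Italian Renaissance", "instance_of", "Historical period"),
}

SYMMETRIC = {"contemporary_of"}  # relations that hold in both directions


def with_inferences(triples):
    """Add the reverse of every symmetric relation."""
    inferred = set(triples)
    for s, r, o in triples:
        if r in SYMMETRIC:
            inferred.add((o, r, s))
    return inferred


def objects(graph, subject, relation):
    """All objects linked to subject by relation."""
    return {o for s, r, o in graph if s == subject and r == relation}


def info_panel(graph, entity):
    """Everything the graph states about an entity, grouped by relation."""
    panel = {}
    for s, r, o in graph:
        if s == entity:
            panel.setdefault(r, []).append(o)
    return panel


graph = with_inferences(TRIPLES)
print(objects(graph, "Leonardo da Vinci", "created"))     # {'Mona Lisa'}
print(objects(graph, "Michelangelo", "contemporary_of"))  # {'Leonardo da Vinci'}, inferred
print(info_panel(graph, "Leonardo da Vinci"))
```

Because each fact is an explicit triple, a person can inspect or correct it directly, which is the transparency the paragraph above describes.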

This technology powers the contextual answers and information boxes you see in search results today, demonstrating how combining structured knowledge with machine learning creates AI systems that are both intelligent and understandable.

The Deep Learning Breakthrough: Knowledge Hidden in Layers

Word Embeddings and Vector Spaces

Modern AI systems represent knowledge in a fundamentally different way than their symbolic predecessors. Instead of rigid labels and rules, they use word embeddings, a technique that converts concepts into mathematical vectors, essentially lists of numbers that exist in multi-dimensional space.

Imagine plotting words on a graph. In a simple two-dimensional space, you might place “king” and “queen” close together because they share royal attributes. Word embeddings extend this idea to hundreds of dimensions, capturing nuanced relationships between concepts. Each word becomes a point in this vast space, and its position reflects its meaning based on how it’s used in real-world text.

The breakthrough came when researchers discovered that these vectors support simple arithmetic that mirrors relationships between words. The famous example: if you take the vector for “king,” subtract “man,” and add “woman,” you land remarkably close to “queen.” This isn’t magic; it reflects patterns the model picked up from millions of texts in which these words appear in similar contexts.

Think of it like a cosmic map where similar concepts cluster together. “Paris” sits near “France” and “Berlin” near “Germany,” and the offset from “France” to “Paris” is roughly the same as the offset from “Germany” to “Berlin,” so the capital-country relationship corresponds to a consistent direction in the space. “Swimming” and “running” are neighbors in the exercise region of this space.
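The vector arithmetic can be demonstrated with a few hand-made vectors. In the Python sketch below, each word gets a tiny three-number vector chosen by hand so that the dimensions are readable; real embeddings have hundreds of learned dimensions with no such labels. The hypothetical `analogy` function computes b - a + c and returns the closest remaining word by cosine similarity, a measure commonly used to compare embeddings.

```python
import math

# Hand-made toy vectors: [royalty, masculine, feminine]. Real embeddings are learned.
ROYALTY = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.88, 0.12, 0.79],
    "man": [0.1, 0.8, 0.1],
    "woman": [0.1, 0.1, 0.8],
    "prince": [0.8, 0.7, 0.1],
}

# Hand-made toy vectors: [capital_city, french, german].
PLACES = {
    "paris": [1.0, 1.0, 0.0],
    "france": [0.0, 1.0, 0.0],
    "berlin": [1.0, 0.0, 1.0],
    "germany": [0.0, 0.0, 1.0],
    "lyon": [0.5, 1.0, 0.0],
}


def cosine(u, v):
    """Cosine similarity: 1.0 means the vectors point in the same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def analogy(a, b, c, vocab):
    """'a is to b as c is to ?': find the word nearest to b - a + c, excluding a, b, c."""
    target = [vb - va + vc for va, vb, vc in zip(vocab[a], vocab[b], vocab[c])]
    candidates = [w for w in vocab if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vocab[w], target))


print(analogy("man", "king", "woman", ROYALTY))       # queen
print(analogy("france", "paris", "germany", PLACES))  # berlin
```

In this toy the offsets from “france” to “paris” and from “germany” to “berlin” are identical by construction; in real embeddings they are only approximately parallel, which is why the result lands “remarkably close” rather than exactly on the answer.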

This vector-based approach powers modern language models, enabling them to understand context, make analogies, and even translate between languages by mapping similar concepts across different linguistic spaces. It transformed AI from following explicit rules to discovering implicit patterns in how humans actually use language.

Transformers and Contextual Understanding

The breakthrough moment in AI’s evolution came with the transformer architecture, introduced in 2017 and built around a technique called attention. Prior to this innovation, AI systems struggled to understand context the way humans do. When you read a sentence, you naturally understand which words relate to each other, even if they’re far apart. Earlier models, which processed text one word at a time, often lost track of such long-range connections.

Transformers changed everything with a mechanism called “self-attention.” Think of it like highlighting, for each word you read, the other words in the sentence most relevant to it. When processing the sentence “The animal didn’t cross the street because it was too tired,” a trained model can learn that “it” refers to “animal” rather than “street” by computing attention scores between every pair of words. Because these scores can be computed for all words at once rather than one word after another, transformers run efficiently on parallel hardware such as GPUs and train dramatically faster than earlier sequential models.
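The core calculation is compact. The Python sketch below implements scaled dot-product attention, the formula from the 2017 transformer paper: each word’s query is compared with every word’s key, the scores are divided by the square root of the vector size and turned into weights with softmax, and the output is the weighted average of the value vectors. To keep it short, it skips the learned projection matrices and uses the same hand-picked two-number vectors as queries, keys, and values; the vector for “it” was chosen to resemble “animal,” standing in for what training would learn.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = the input vectors."""
    d = len(vectors[0])
    weights, outputs = [], []
    for q in vectors:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vectors]
        w = softmax(scores)
        weights.append(w)
        # Output for this word: attention-weighted average of all value vectors.
        outputs.append([sum(wj * v[i] for wj, v in zip(w, vectors)) for i in range(d)])
    return weights, outputs


# Hypothetical 2-D vectors: [animate, place].
TOKENS = ["animal", "street", "it", "tired"]
VECTORS = [[3.0, 0.0], [0.0, 3.0], [2.7, 0.3], [1.8, 0.6]]

weights, _ = self_attention(VECTORS)
it_row = weights[TOKENS.index("it")]
for token, w in zip(TOKENS, it_row):
    print(f"{token:>7}: {w:.2f}")  # "animal" gets the largest weight, "street" the smallest
```

Each row of weights depends only on the inputs, not on the other rows, which is what lets real implementations compute all of them in parallel.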

This architecture became the foundation for language models you likely use today. ChatGPT, Google’s Bard (since renamed Gemini), and similar tools all rely on transformer technology. These systems can interpret nuanced questions, maintain conversation context across multiple exchanges, and generate human-like responses because they model contextual relationships between words at enormous scale.

The real-world impact has been transformative. Customer service chatbots now understand complex queries without rigid scripts. Translation tools capture idioms and cultural context rather than translating word-by-word. Writing assistants suggest contextually appropriate phrases based on your document’s overall meaning.

What makes transformers particularly powerful is their scalability. By training on massive text datasets, they develop rich representations of language, concepts, and even reasoning patterns. This ability to capture and utilize context marked a pivotal shift from brittle, rule-based systems to flexible AI that adapts to nuanced human communication.

Today’s Frontier: Large Language Models and Emergent Knowledge

How LLMs Store and Retrieve Knowledge

Unlike traditional databases that store facts in organized tables, modern generative AI models like GPT store knowledge in ways researchers still only partly understand. Rather than keeping discrete records, they encode vast amounts of factual information implicitly in their neural network parameters: the numerical weights adjusted during training.

Think of it like this: when you learn that Paris is the capital of France, your brain doesn’t file this fact in a specific labeled drawer. Instead, it creates patterns across millions of neurons. LLMs work similarly, distributing knowledge across their massive parameter networks rather than storing discrete facts in specific locations.

Some research suggests that these models capture relationships between entities in a way loosely resembling a knowledge graph, although no explicit graph is stored anywhere. When you ask GPT about the Eiffel Tower, the model doesn’t look up a stored entry. Instead, your question activates patterns across multiple layers that collectively represent relationships between Paris, France, landmarks, and architecture.

Researchers are actively investigating how this works. In “probing” experiments, they train simple classifiers on a model’s internal activations to test whether a given kind of information can be read out of a particular layer; related work traces which layers and neurons a specific fact depends on. Some studies suggest that factual knowledge is concentrated in the middle layers of these models, while earlier and later layers handle more of the surface language patterns.
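Here is a toy version of a probing experiment in Python. The “activations” are synthetic stand-ins, not taken from any real model: in one hypothetical layer a feature tracks the property being probed, and in the other it is unrelated by construction. A simple linear classifier (a perceptron here; real studies often use logistic regression and evaluate on held-out examples) is trained on each layer, and its accuracy indicates whether the property can be read out from that layer.

```python
def train_probe(features, labels, epochs=50):
    """Train a perceptron (a linear classifier) and return its training accuracy."""
    w, b = [0.0] * len(features[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            t = 1 if y else -1
            if t * (sum(wi * xi for wi, xi in zip(w, x)) + b) <= 0:  # misclassified
                w = [wi + t * xi for wi, xi in zip(w, x)]
                b += t
    correct = sum(
        (sum(wi * xi for wi, xi in zip(w, x)) + b > 0) == bool(y)
        for x, y in zip(features, labels)
    )
    return correct / len(labels)


# Synthetic labels for the property being probed (say, "this token names a city").
LABELS = [i % 2 for i in range(40)]
# Hypothetical "early" layer: the feature follows a pattern independent of the label.
EARLY = [[float((i // 2) % 2)] for i in range(40)]
# Hypothetical "middle" layer: the feature tracks the label, plus a small unrelated wobble.
MIDDLE = [[LABELS[i] + 0.1 * ((i // 2) % 2)] for i in range(40)]

print("early layer probe accuracy:", train_probe(EARLY, LABELS))    # 0.5
print("middle layer probe accuracy:", train_probe(MIDDLE, LABELS))  # 1.0
```

A probe that does no better than chance suggests the information isn’t linearly readable from that layer; a near-perfect probe suggests it is, although researchers caution that being readable does not prove the model actually uses that information.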

This distributed storage system explains both the impressive capabilities and limitations of LLMs. They can make surprising connections between concepts, but they also sometimes “hallucinate” facts because knowledge isn’t stored as verified entries but as probabilistic patterns learned from training data.

The Hallucination Problem and What It Tells Us

AI hallucinations—when systems confidently generate false or nonsensical information—represent one of the most revealing challenges in modern AI. These errors aren’t random glitches; they’re windows into how current systems actually “understand” knowledge.

Consider ChatGPT inventing fake legal citations or image generators creating impossible architecture. These mistakes happen because large language models don’t truly comprehend information the way humans do. Instead, they recognize patterns in training data and predict what comes next. When asked about something beyond their training or combining concepts in novel ways, they fill gaps with plausible-sounding fabrications.
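A drastically simplified model shows how pattern-based prediction can produce fluent falsehoods. The Python sketch below builds a bigram model, which predicts each word only from the word before it, from two true sentences, then lists every sentence it can generate from the prompt “paris” along with its probability. Real LLMs are neural networks conditioned on long contexts, so this is an analogy for the failure mode rather than a description of how they work internally; the corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict

CORPUS = [
    "paris is the capital of france",
    "rome is the capital of italy",
]


def train_bigrams(sentences):
    """Count how often each word follows each other word (<s> and </s> mark start and end)."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = ["<s>"] + sentence.split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def completions(counts, prompt, max_len=12):
    """Enumerate every completion of the prompt with its probability under the model."""
    results = {}

    def extend(seq, prob):
        last = seq[-1]
        if last == "</s>":
            results[" ".join(seq[1:-1])] = prob
            return
        if len(seq) >= max_len:
            return
        total = sum(counts[last].values())
        for word, n in counts[last].items():
            extend(seq + [word], prob * n / total)

    extend(["<s>"] + prompt.split(), 1.0)
    return results


model = train_bigrams(CORPUS)
for sentence, p in completions(model, "paris").items():
    label = "in training data" if sentence in CORPUS else "FABRICATED"
    print(f"{p:.2f}  {sentence}  ({label})")
```

The model rates “paris is the capital of italy” exactly as likely as the true sentence, because once the shared phrase “is the capital of” intervenes, nothing in its statistics links the city to its country.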

This problem highlights a fundamental limitation: today’s AI systems lack genuine understanding and can’t reliably distinguish between what they know and what they’re guessing. However, this challenge is driving promising solutions. Researchers are developing hybrid systems that combine neural networks with structured knowledge bases, creating AI that can verify claims before presenting them. Others are exploring methods for AI to express uncertainty, essentially teaching systems to say “I don’t know.”
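The two remedies mentioned above, checking claims against structured knowledge and admitting uncertainty, can be sketched together. In the Python sketch below, a generated claim is looked up in a small knowledge base before it is shown: it passes if supported, is corrected if contradicted, and is replaced with “I don’t know” if the knowledge base has no entry. The knowledge base contents and the `check_claim` function are hypothetical, and real hybrid systems are far more involved.

```python
# Hypothetical structured knowledge: (subject, relation) -> verified object.
KNOWLEDGE_BASE = {
    ("paris", "capital_of"): "france",
    ("rome", "capital_of"): "italy",
}


def check_claim(subject, relation, claimed_object):
    """Verify a generated (subject, relation, object) claim before presenting it."""
    known = KNOWLEDGE_BASE.get((subject, relation))
    if known is None:
        return "I don't know"  # abstain rather than guess
    if known == claimed_object:
        return f"{subject} {relation} {claimed_object} (verified)"
    return f"Correction: {subject} {relation} {known}, not {claimed_object}"


print(check_claim("paris", "capital_of", "france"))  # paris capital_of france (verified)
print(check_claim("paris", "capital_of", "italy"))   # Correction: paris capital_of france, not italy
print(check_claim("madrid", "capital_of", "spain"))  # I don't know
```

The trade-off is coverage: the checker can vouch only for what the knowledge base contains, which is the same manual-entry bottleneck that limited CYC.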

These hallucinations aren’t failures of progress—they’re signposts pointing toward the next evolution in knowledge representation, where systems will balance pattern recognition with verifiable understanding.

What This Means for You: Practical Takeaways

Understanding the evolution of AI knowledge representation isn’t just academic history—it directly impacts how you interact with AI tools today and shapes which technologies you should learn.

When you use a GPS navigation system or interact with automated customer service, you’re often relying on explicitly programmed logic that traces back to early symbolic AI. Parts of these applications follow hand-written if-then rules: if traffic is detected on Route A, then suggest Route B. This makes them transparent and predictable, which explains why critical systems in healthcare diagnostics and financial compliance still use rule-based approaches. If you’re building applications where decisions need clear explanations, learning symbolic methods remains valuable.

Conversely, when Netflix recommends your next binge-worthy show or your smartphone recognizes your face, you’re experiencing modern neural networks and machine learning. These systems excel at pattern recognition but can’t always explain their reasoning. This trade-off between performance and interpretability is something every AI practitioner must consider when choosing technologies for their projects.

For beginners entering the AI field, understanding this evolution helps you make informed decisions. If you’re working with structured data in industries like banking or legal services, knowledge graphs and ontologies—descendants of semantic networks—provide powerful ways to organize information. Companies like Google use knowledge graphs to power their search results, connecting facts about people, places, and concepts.

Meanwhile, if you’re interested in computer vision, natural language processing, or recommendation systems, deep learning frameworks represent the current frontier. However, the newest developments combine both approaches. Hybrid systems that merge symbolic reasoning with neural networks are emerging, particularly in autonomous vehicles that need both pattern recognition and rule-following capabilities.

The key takeaway: different problems require different knowledge representation strategies. By understanding where these approaches came from and what they excel at, you can choose the right tool for your specific challenge rather than following trends blindly.

The journey of AI knowledge representation tells a remarkable story of human ingenuity and adaptation. From the rigid symbolic logic systems of the 1950s, where computers followed strict if-then rules, to today’s sophisticated neural networks that learn patterns from massive datasets, we’ve witnessed a fundamental transformation in how machines understand and process information.

Each era brought its own breakthroughs and limitations. Symbolic AI gave us expert systems that could diagnose diseases but struggled with everyday common sense. Semantic networks and frames made relationships more explicit but remained manually intensive. Knowledge graphs brought structure to the web’s chaos, while neural networks revolutionized how machines learn from raw data.

What’s particularly exciting is that this evolution hasn’t stopped. Modern AI increasingly combines approaches: hybrid systems merge the interpretability of symbolic reasoning with the learning power of neural networks. Neuro-symbolic AI is one active research direction, while transformer architectures continue to push the boundaries of what neural systems can do, together promising machines that can both learn flexibly and reason logically.

Whether you’re drawn to the historical foundations of expert systems or fascinated by cutting-edge deep learning, there’s never been a better time to dive deeper. The field remains vibrant and rapidly evolving, with new developments emerging regularly. Your exploration of AI knowledge representation has just begun, and the future promises even more innovative ways for machines to understand our world.