Every breakthrough in artificial intelligence today stands on decades of data collection practices that evolved from punch cards to crowdsourced platforms. The story of data science history reveals how humanity transformed from manually recording census information in the 1890s to training neural networks on billions of labeled images. Understanding this journey matters because the methods we use to gather and annotate data directly determine what our AI systems can and cannot do.

The path from statistical analysis to modern machine learning wasn’t linear. In 1962, John Tukey first envisioned “data analysis” as a distinct field, yet it took another 35 years before the term “data science” emerged. Between these milestones, pioneers developed foundational techniques like data mining, pattern recognition, and statistical computing that would later power everything from recommendation engines to autonomous vehicles.

What changed everything was scale. The internet era brought unprecedented volumes of information, but raw data alone couldn’t train intelligent systems. This sparked the rise of data annotation, where human workers labeled millions of images, texts, and videos to teach machines how to recognize patterns. Projects like ImageNet, containing over 14 million annotated images, became the training grounds for deep learning revolutions.

Today’s AI capabilities exist because researchers solved not just algorithmic challenges but data challenges. From Amazon’s Mechanical Turk democratizing annotation work to specialized platforms ensuring quality control, the evolution of data collection methods directly shaped which AI applications became possible. This historical context illuminates why data preparation still consumes 80% of most machine learning projects.

The Early Days: When Data Scientists Were Human Computers

The Punch Card Era and First Datasets

Before computers had hard drives or even monitors, the pioneers of artificial intelligence faced a peculiar challenge: how do you teach a machine when your data comes on cardboard rectangles?

In the 1950s and 1960s, researchers at early AI research institutions relied on punch cards to store their datasets. These stiff cards, about the size of a dollar bill, contained rows of carefully punched holes that represented data. Each hole pattern encoded information like chess moves, mathematical equations, or simple pattern recognition examples.

Consider Arthur Samuel’s groundbreaking checkers program at IBM in 1959. To train his system, researchers manually punched thousands of game positions onto cards, one move at a time. A single training session might require carrying boxes containing hundreds of cards to the computer room. If you dropped the box and the cards scattered, you’d spend hours reorganizing them in the correct sequence.

Similarly, Frank Rosenblatt’s Perceptron project used punch cards to input simple visual patterns like letters and shapes. Research assistants would manually encode each training example by punching specific hole combinations that represented pixel-like grids. The process was painstakingly slow: creating a dataset that today takes seconds to download could require weeks of manual card preparation.

This physical constraint meant early AI datasets were remarkably small by modern standards, often containing just hundreds or thousands of examples rather than the millions we use today. Yet these humble punch card collections laid the foundation for how we think about organizing and formatting training data.

Why Early AI Hit a Wall

In the 1950s and 60s, researchers believed human-level AI was just around the corner. Early programs like ELIZA could mimic conversation, and chess-playing computers seemed to prove machines could think. But by the 1970s, progress ground to a halt.

The problem wasn’t brilliant ideas or powerful algorithms—it was data. Or rather, the lack of it.

Early AI systems needed massive amounts of information to learn from, but collecting and organizing data was painfully slow. Researchers manually entered examples on punch cards, one at a time. There were no digital cameras capturing millions of images, no internet generating endless text, and no smartphones tracking user behavior. Everything had to be coded by hand.

Without sufficient training examples, AI couldn’t recognize patterns or make accurate predictions. A speech recognition system might work in a quiet lab but fail completely with real-world accents and background noise. The gap between laboratory demos and practical applications became impossible to bridge.

Funding dried up as promised breakthroughs failed to materialize. This period, known as the first AI winter, lasted well into the 1980s. The lesson was clear: intelligent machines needed something researchers hadn’t anticipated—mountains of real-world data to learn from.

The Digital Revolution: When Databases Changed the Game

From Floppy Disks to Digital Libraries

In the 1980s and early 1990s, machine learning researchers faced a frustrating bottleneck. While they had promising algorithms, they lacked the data storage capacity to test them properly. A typical floppy disk held just 1.44 megabytes, barely enough for a handful of low-resolution images. This forced scientists to work with tiny datasets, sometimes containing fewer than a hundred examples.

The shift began with hard drives and CD-ROMs in the mid-1990s, which could store hundreds of megabytes. Suddenly, researchers could compile datasets with thousands of samples instead of dozens. But the real transformation came with hardware advances in the 2000s, when affordable gigabyte and terabyte storage became commonplace.

This explosion in storage capacity fundamentally changed what was possible. Instead of hand-selecting a few dozen representative images, researchers could now collect millions. The famous ImageNet dataset, created in 2009, contained over 14 million images across 20,000 categories. This would have been physically impossible just a decade earlier.

Digital storage also enabled version control and easy sharing. Scientists could distribute massive datasets across research institutions via the internet, creating collaborative efforts that would have required shipping countless boxes of physical media in the past. This democratization of data access accelerated progress, allowing smaller research teams to experiment with sophisticated machine learning models without rebuilding datasets from scratch.

The Birth of Structured Datasets

In the 1980s and early 1990s, researchers faced a common frustration: everyone was working with different data, making it nearly impossible to compare results or build upon each other’s work. Imagine trying to test whether your new algorithm actually worked better than existing ones, but you couldn’t because everyone used different datasets with different formats.

This challenge led to a breakthrough moment in 1987 when researchers at the University of California, Irvine created the UCI Machine Learning Repository. Think of it as the first organized library for machine learning data. Instead of researchers spending months collecting their own data, they could now access standardized datasets that everyone agreed to use as benchmarks. The famous Iris flower dataset, which helps beginners learn classification by identifying flower species based on petal measurements, became one of the earliest examples that countless students still use today.

This standardization transformed AI research in practical ways. When scientists published papers claiming their algorithms were better, others could actually verify those claims using the same data. It was like everyone finally agreeing to use the same ruler for measurement. The repository started with just a handful of datasets but grew to include hundreds, covering everything from credit approval to handwriting recognition.

These shared datasets accelerated progress because researchers could focus on developing better algorithms instead of constantly reinventing the wheel with data collection. The collaborative foundation laid by UCI set the stage for even more ambitious projects that would follow in the decades ahead.

The Internet Era: Crowdsourcing Transforms Data Annotation

ImageNet: The Dataset That Launched Deep Learning

In 2009, computer scientist Fei-Fei Li and her team at Stanford University unveiled something that would change artificial intelligence forever: ImageNet. This wasn’t just another dataset. It was a colossal library containing 14 million hand-labeled images spanning over 20,000 categories, from everyday objects like coffee mugs to exotic animals and architectural marvels.

Creating ImageNet required an extraordinary human effort. Li and her team turned to Amazon Mechanical Turk, a crowdsourcing platform where thousands of workers worldwide helped classify and verify images. Each photograph needed multiple people to confirm its label, ensuring accuracy. This meticulous process took years, but the payoff would be revolutionary.

Before ImageNet, computer vision systems struggled with basic recognition tasks. They could identify objects only under perfect conditions and within limited categories. Researchers lacked the massive, diverse datasets needed to train more sophisticated models.

Everything changed in 2012 at the annual ImageNet Large Scale Visual Recognition Challenge. A team from the University of Toronto, led by Geoffrey Hinton, submitted a deep learning model called AlexNet. It didn’t just win—it crushed the competition, reducing error rates by an unprecedented margin. This breakthrough in computer vision proved that neural networks, given enough quality data, could outperform traditional approaches.

ImageNet demonstrated a fundamental truth: artificial intelligence needed fuel, and that fuel was data—lots of it, carefully labeled and thoughtfully organized. The dataset became the gold standard for training vision models, sparking innovations that now power facial recognition, autonomous vehicles, medical imaging diagnostics, and countless applications we use daily. ImageNet didn’t just provide data; it provided the foundation for modern AI’s visual understanding capabilities.

How Crowdsourcing Democratized AI Development

For years, building AI systems seemed reserved for tech giants with deep pockets. Training a machine learning model required thousands or millions of labeled examples, and hiring professional annotators was prohibitively expensive. A breakthrough came when crowdsourcing platforms transformed how we gather training data, opening the door for university researchers, startups, and individual developers to participate in AI development.

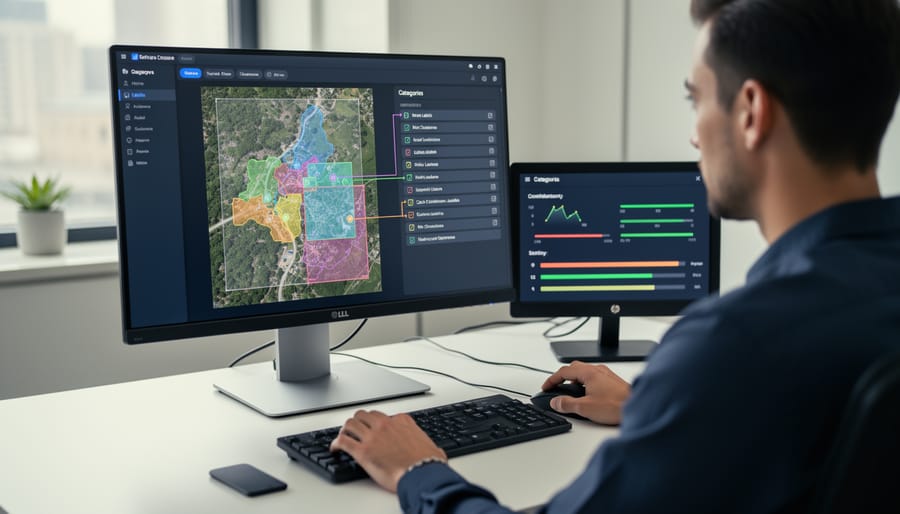

Amazon Mechanical Turk, launched in 2005, pioneered this shift by connecting requesters with a global workforce willing to complete small tasks for modest payments. Suddenly, a graduate student could label 10,000 images for a few hundred dollars instead of tens of thousands. Other platforms like Figure Eight and Labelbox soon followed, each refining the crowdsourcing model with better quality controls and user-friendly interfaces.

This democratization had profound effects. The famous ImageNet dataset, which revolutionized computer vision in 2012, relied heavily on crowdsourced labor to annotate 14 million images. Without affordable annotation platforms, this project would have been financially impossible for an academic team.

However, the model wasn’t without challenges. Questions arose about fair wages, worker conditions, and quality control. Some tasks paid mere cents, sparking debates about ethical AI development. Despite these concerns, crowdsourcing fundamentally changed who could build AI systems. Small teams with innovative ideas could now compete with established players, accelerating innovation across the field and making AI development more accessible than ever before.

Modern Methods: Smart Tools and Semi-Automated Annotation

When AI Helps Annotate AI Training Data

Today, artificial intelligence has reached a fascinating milestone: AI now helps create the very data needed to train the next generation of AI. This circular relationship represents a significant shift from the purely manual annotation methods of the past.

Consider medical imaging, where radiologists once had to manually mark every tumor boundary in thousands of scans. Now, pre-trained computer vision models can suggest initial annotations, highlighting potential areas of concern. The human expert then reviews and refines these suggestions, cutting annotation time by up to 70% while maintaining accuracy. It’s like having an intelligent assistant that does the rough draft, leaving professionals to focus on quality control and edge cases.

In autonomous vehicle development, companies use existing models to pre-label objects in dashcam footage. A model trained on millions of images can automatically identify cars, pedestrians, and traffic signs in new video feeds. Human annotators then verify and correct these labels, catching errors the AI might make with unusual scenarios like a person on a skateboard or a partially obscured stop sign.

Natural language processing has seen similar advances. When building chatbots or sentiment analysis tools, developers now use large language models to suggest text classifications or entity labels. These AI assistants can process thousands of customer reviews in minutes, flagging sentiment and key topics, while humans validate the most important or ambiguous cases.

This collaborative approach solves a critical challenge: we need massive amounts of labeled data to train powerful AI systems, but manual annotation is expensive and time-consuming. By combining AI efficiency with human judgment, we’re creating higher-quality datasets faster than ever before, accelerating the entire field of machine learning.

Synthetic Data: Creating Training Examples from Scratch

Imagine needing millions of labeled images to train an AI, but lacking the time or budget for human annotators. This challenge led to one of data science’s most creative solutions: generating training data artificially.

Synthetic data emerged as a game-changer when companies realized they could create realistic training examples from scratch. Instead of photographing thousands of self-driving car scenarios, engineers could simulate them. A virtual world could generate endless variations of rainy nights, foggy mornings, or busy intersections, complete with perfect labels for every pedestrian, vehicle, and traffic sign.

The breakthrough came with Generative Adversarial Networks, or GANs, introduced in 2014. Think of GANs as two AI systems playing a game: one creates fake data while the other tries to spot the fakes. Through this competition, the generator becomes incredibly skilled at producing realistic images, text, or even medical scans that fool both the detector and human eyes.

Companies now use several approaches to create synthetic datasets. Video game engines generate photorealistic street scenes for autonomous vehicles. Procedural generation creates variations of existing images by adjusting lighting, angles, or backgrounds. Medical researchers synthesize patient data that maintains statistical accuracy while protecting privacy.

The advantages are compelling. Synthetic data eliminates privacy concerns since no real people are involved. It’s faster and cheaper than manual annotation. Teams can generate rare scenarios that would be difficult or dangerous to capture in reality, like industrial accidents or extreme weather conditions.

However, synthetic data isn’t perfect. If the simulations don’t match real-world complexity, AI trained on them may struggle with actual scenarios. The solution? Smart teams combine synthetic data with real examples, using artificial generation to supplement rather than replace human-annotated datasets. This hybrid approach has become standard practice in modern AI development.

The Ongoing Challenges: Quality, Bias, and Ethics

The Hidden Humans Behind AI

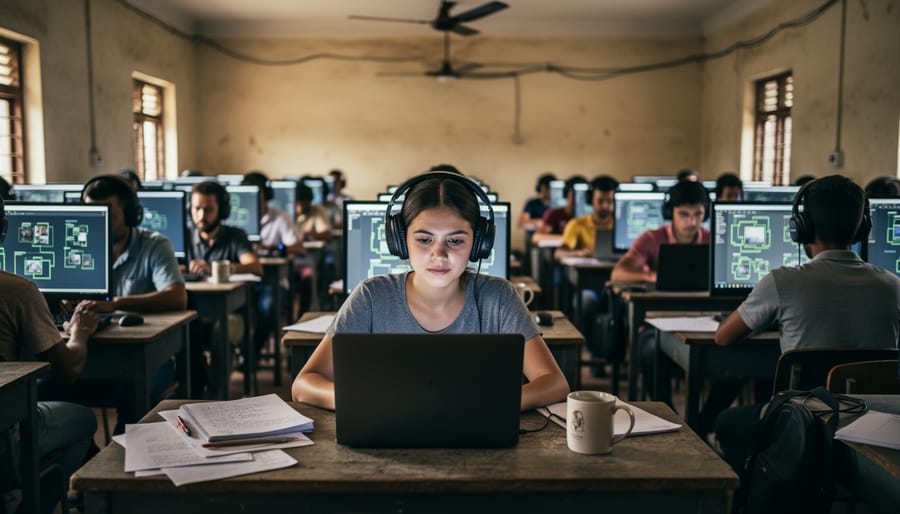

Behind every “smart” AI system lies an often-invisible workforce of human data annotators who teach machines to recognize patterns. These workers spend hours labeling images, transcribing audio, and categorizing text—the essential groundwork that makes machine learning possible.

This global workforce numbers in the millions, with major concentrations in countries like India, Kenya, Venezuela, and the Philippines. Workers might earn just a few dollars per hour identifying objects in photos, drawing bounding boxes around pedestrians for self-driving car training, or flagging inappropriate content. While platforms like Amazon Mechanical Turk democratized access to this work, they also created a gig economy with minimal protections.

The labor conditions have sparked significant controversy. Many annotators work without benefits, job security, or clear career progression. They may review disturbing content without adequate mental health support, or work under intense productivity pressures that sacrifice accuracy for speed. Time magazine’s 2023 investigation revealed workers in Kenya earning less than two dollars per hour to filter traumatic content for ChatGPT.

This raises uncomfortable questions about AI’s human cost. The technology industry has long celebrated automation while relying on low-wage human labor to make it function. Some companies are responding with better pay and working conditions, recognizing that data quality depends on worker wellbeing.

Understanding this hidden workforce reminds us that artificial intelligence isn’t purely artificial—it’s built on very real human effort, expertise, and sometimes exploitation.

When Bad Data Creates Biased AI

Flawed data has led to AI systems that perpetuate real-world discrimination. In 2015, Amazon built a recruiting tool that systematically downgraded resumes from women. Why? The training data consisted primarily of resumes from male applicants, teaching the AI that men were preferable candidates. The company scrapped the project in 2018.

Similarly, facial recognition systems have shown alarming accuracy gaps. A 2018 MIT study found that commercial systems had error rates below 1% for light-skinned men but up to 35% for dark-skinned women. The root cause? Training datasets overwhelmingly featured lighter-skinned faces, leaving the AI ill-equipped to recognize diverse populations.

These failures highlight the ethical implications of biased data collection. Today, researchers are fighting back with solutions like diverse annotation teams, bias audits during development, and datasets intentionally balanced for gender, race, and age. Organizations now publish dataset documentation cards that detail collection methods and potential limitations. While perfect fairness remains elusive, these transparency measures represent crucial steps toward building AI systems that serve everyone equally.

From punch cards in the 1890 census to AI systems that can generate images from text, the story of data science reveals a fundamental truth: how we collect and organize data has always determined what our machines can learn. Each breakthrough in artificial intelligence, from early expert systems to today’s large language models, emerged only when someone figured out how to gather and structure the right information at the right scale.

The evolution is clear. Herman Hollerith’s tabulating machines could only process what census workers carefully encoded on cards. Modern deep learning models can only recognize what human annotators have painstakingly labeled in training datasets. Data collection and annotation have consistently been both the bottleneck slowing progress and the key unlocking new capabilities.

Looking forward, the landscape is shifting. Self-supervised learning techniques now allow models to learn from unlabeled data by predicting hidden parts of images or text. Few-shot learning enables systems to generalize from just a handful of examples. These emerging approaches might finally reduce our dependence on massive annotated datasets, potentially democratizing AI development for organizations that can’t afford millions in labeling costs.

Yet even as automation advances, human insight remains irreplaceable. The questions we ask, the patterns we choose to capture, and the ethical frameworks we apply to data collection will continue shaping what artificial intelligence becomes.