Monitor your AI models in production by implementing real-time performance tracking, data drift detection, and automated alerting systems. Deploy dashboards that track prediction accuracy, response times, and resource usage to catch degradation before it impacts users. Set baseline metrics during AI model training, then configure alerts when production performance deviates by more than 10-15% from these benchmarks.

Establish data quality checks that validate incoming requests against training data distributions. Build automated pipelines that flag when input features shift beyond expected ranges, such as when customer behavior patterns change seasonally or user demographics evolve. This prevents your model from making predictions on data it wasn’t designed to handle.

Track both technical metrics like latency and memory consumption alongside business outcomes such as conversion rates or user satisfaction scores. A model might maintain high technical accuracy while delivering poor business results if the predictions don’t align with current market conditions.

Here’s why this matters: A recommendation engine at a major retailer once failed silently for three weeks, suggesting winter coats during summer because nobody monitored seasonal data drift. The company lost millions in potential revenue. Another healthcare AI system gradually degraded over six months as patient demographics shifted, but without monitoring, clinicians unknowingly relied on increasingly unreliable predictions.

Traditional software monitoring focuses on uptime and errors. AI monitoring requires a different approach because models fail gradually and silently. Your application might return results with perfect response times while the underlying predictions become increasingly wrong. Unlike conventional code that breaks noticeably, AI systems degrade quietly as the world around them changes, making proactive monitoring essential for maintaining reliable, trustworthy AI applications in production environments.

What Happens When AI Models Go Unmonitored

In 2020, a major healthcare provider discovered their AI-powered diagnostic tool had been silently degrading for months. The model, initially achieving 94% accuracy in detecting certain conditions, had dropped to just 68% accuracy without anyone noticing. Why? The hospital had recently upgraded their imaging equipment, and the new machines produced slightly different image characteristics than the training data. Without proper monitoring, this drift went undetected until patient complaints prompted a manual audit.

This isn’t an isolated incident. When AI models operate without oversight, the consequences ripple across organizations and communities.

Consider the case of a leading e-commerce company whose recommendation engine suddenly started suggesting wildly irrelevant products. Customers began abandoning their shopping carts at unprecedented rates, costing the company millions in lost revenue before engineers traced the issue to corrupted data inputs. The model was technically functioning, but it was being fed information that no longer matched real-world patterns.

Financial institutions have faced similar challenges with credit-scoring models. One bank discovered their lending algorithm had gradually developed biased patterns, disproportionately rejecting qualified applicants from certain demographics. The model had learned from biased feedback loops in approval data, reinforcing unfair patterns over time. This highlights why ethical machine learning requires continuous monitoring, not just initial testing.

The business impact extends beyond immediate losses. Customer trust erodes when AI systems deliver poor experiences. Regulatory penalties loom for companies whose models violate fairness requirements. And competitive advantages vanish when models become stale while competitors iterate and improve.

Perhaps most concerning is the silent nature of these failures. Unlike traditional software that crashes or throws error messages, AI models continue operating while quietly degrading. They produce outputs that look reasonable on the surface but are increasingly unreliable. Without monitoring systems in place, organizations remain blind to these deteriorating conditions until significant damage occurs.

Understanding AI Model Monitoring: The Basics Made Simple

The Difference Between Traditional Software Monitoring and AI Monitoring

Monitoring AI systems differs fundamentally from tracking traditional software because of how these technologies operate under the hood. When you monitor a conventional application like a shopping website, you’re primarily checking if it’s up or down, how fast pages load, and whether transactions complete successfully. The logic remains constant—the same input always produces the same output.

AI models, however, behave more like living systems. They make predictions based on patterns learned from data, which means their performance can drift over time without any code changes. Imagine a spam filter trained in 2022 that has never seen AI-generated phishing emails. The code hasn’t broken, but the model becomes less effective because the world around it has evolved.

Traditional monitoring asks, “Is the system working?” AI monitoring must also ask, “Is the model still making good decisions?” This requires tracking metrics that don’t exist in conventional software. For instance, you need to watch for data drift, where incoming requests start looking different from training data. A fraud detection model trained on pre-pandemic shopping patterns might suddenly see everyone buying online, causing its assumptions to misalign with reality.

Additionally, AI models can fail silently. A broken website shows error messages, but a degrading model might still return predictions—they’re just increasingly wrong. This makes proactive monitoring essential rather than optional, requiring specialized tools that understand model behavior, not just system health.

Key Metrics You Actually Need to Track

When your AI model goes into production, you need to know if it’s still working as expected. Think of it like monitoring your car’s dashboard while driving—you want to catch problems before they become disasters. Here are the essential metrics that matter most.

Model drift is your first warning sign that something’s changing. Imagine you built a model to predict customer purchases based on 2023 shopping patterns. By 2024, customer behavior might shift due to economic changes or new trends. This disconnect between training data and current reality is called drift. You’ll notice it when prediction accuracy starts dropping, even though nothing changed in your code.

Data quality metrics keep track of what’s flowing into your model. Are you suddenly getting missing values where there weren’t any before? Has the range of your input data changed dramatically? For example, if your model expects customer ages between 18 and 80, but suddenly receives entries showing 150, you’ve got a data quality problem. These issues can poison your predictions without you realizing it.
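A range-and-missing-value check like the one described above can be sketched in a few lines. This is a minimal illustration, not a full validation framework: the `EXPECTED_RANGES` schema, the feature names, and the `validate_record` helper are all hypothetical, and the check assumes numeric features.

```python
import math

# Hypothetical schema: allowed numeric range per feature (assumed values).
EXPECTED_RANGES = {"age": (18, 80), "order_total": (0.0, 10_000.0)}


def validate_record(record, expected_ranges=EXPECTED_RANGES):
    """Return a list of human-readable problems found in one input record."""
    problems = []
    for feature, (low, high) in expected_ranges.items():
        value = record.get(feature)
        # Treat absent keys, None, and NaN as missing values.
        if value is None or (isinstance(value, float) and math.isnan(value)):
            problems.append(f"{feature}: missing")
        elif not low <= value <= high:
            problems.append(f"{feature}: {value} outside [{low}, {high}]")
    return problems


print(validate_record({"age": 150, "order_total": 42.5}))
print(validate_record({"age": 35}))
```

In practice you would likely count these problems per batch and alert on the rate, rather than reacting to every single bad record.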

Performance metrics tell you how well your model actually performs in the real world. This goes beyond simple accuracy—you need to track precision, recall, and latency. If your fraud detection model takes 30 seconds to flag a suspicious transaction, it’s technically working but practically useless. These metrics often connect with model interpretability tools to help you understand why performance changes occur.

Start by tracking these three metric categories weekly, then adjust frequency based on your model’s behavior and business criticality.

The Core Components of AI Observability

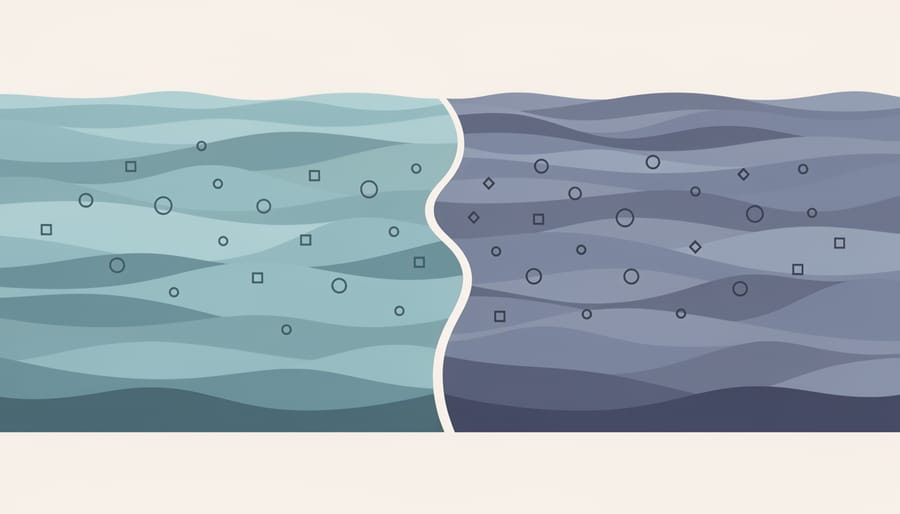

Data Drift: When Your Model Sees Something New

Imagine teaching someone to recognize cats, showing them only pictures of tabby cats. One day, they encounter a hairless Sphynx and confidently declare it’s not a cat. That’s essentially data drift—when the data your AI model encounters in the real world starts looking different from what it learned during training.

Data drift happens more often than you’d think. A fraud detection model trained on pre-pandemic shopping patterns suddenly faces entirely new online purchasing behaviors. A recommendation system built on summer user activity performs poorly come winter. An image recognition model trained on professional photos struggles with smartphone snapshots. The model isn’t broken; the world simply changed.

There are two main types to watch for. Feature drift occurs when the characteristics of the input data shift, like when your customer demographics gradually skew younger or older. Concept drift happens when the relationship between inputs and outputs changes, such as when words like “viral” or “cloud” take on completely different meanings over time. (Label drift, a related term, refers to a change in the distribution of the target labels themselves.)

This is particularly challenging for AI-powered data pipelines processing real-time information, where drift can happen gradually or overnight. Seasonal changes, market shifts, new competitors, regulatory updates, and evolving user preferences all contribute to drift.

The tricky part? Your model’s confidence scores might remain high even as accuracy plummets. It’s confidently wrong, which makes monitoring for drift essential rather than optional.
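One common way to put a number on feature drift is the Population Stability Index (PSI), which compares how a feature is distributed in training data versus live data. The sketch below is one possible implementation using only the standard library. The equal-width binning, the `eps` smoothing value, and the synthetic “age” samples are assumptions made for illustration. The 0.1 and 0.25 cutoffs mentioned in the comment are a widely quoted rule of thumb, not a guarantee.

```python
import math
import random


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each proportion at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data for illustration only.
rng = random.Random(0)
training = [rng.gauss(40, 10) for _ in range(5000)]
same = [rng.gauss(40, 10) for _ in range(5000)]
shifted = [rng.gauss(48, 10) for _ in range(5000)]

# Rule of thumb: below 0.1 is stable, above 0.25 is a significant shift.
print(f"no drift: {psi(training, same):.3f}")
print(f"shifted:  {psi(training, shifted):.3f}")
```

PSI only looks at inputs, so it can flag feature drift without waiting for ground-truth labels. It will not catch a change in what those inputs mean.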

Model Drift: The Silent Performance Killer

Imagine spending months perfecting a fraud detection model that catches 95% of suspicious transactions. You deploy it proudly, and it works beautifully. Six months later, however, complaints flood in about missed fraudulent charges. What happened? Your model didn’t break; it drifted.

Model drift occurs when your AI model’s predictions become less accurate over time, even though the input data looks similar. Think of it like a compass slowly losing its magnetic calibration. The compass still points somewhere, but not quite north anymore.

Here’s where it gets tricky: model drift differs from data drift, though they’re related. Data drift happens when the incoming data changes. For example, if your fraud model trained on credit card transactions suddenly receives cryptocurrency transactions, that’s data drift. The world around your model changed.

Model drift, however, is more subtle. The relationships your model learned between features and outcomes have shifted. Perhaps fraudsters evolved their tactics, or customer behavior changed after a recession. Your model’s internal logic, once accurate, no longer matches reality. The same inputs now mean different things.

Why does this matter? Because model drift is invisible without proper monitoring. Your model continues making predictions with the same confidence, but those predictions are increasingly wrong. It’s like driving with a GPS using outdated maps – you’ll confidently arrive at the wrong destination.

Regular monitoring catches this silent performance killer before it damages your business, reputation, or customer trust.
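Because model drift shows up as falling accuracy rather than as an error, catching it usually means comparing predictions with ground truth once the real outcomes arrive. Here is a minimal sketch of that idea, assuming labels eventually become available. The `RollingAccuracy` class, the window size, and the `max_drop` tolerance are hypothetical choices, not a standard API.

```python
from collections import deque


class RollingAccuracy:
    """Track accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window=500):
        # deque with maxlen drops the oldest outcome automatically.
        self.outcomes = deque(maxlen=window)

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        if not self.outcomes:
            return None  # nothing labeled yet
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self, baseline, max_drop=0.05):
        """True when accuracy fell more than `max_drop` below the baseline."""
        current = self.accuracy()
        return current is not None and (baseline - current) > max_drop


monitor = RollingAccuracy(window=4)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (1, 1)]:
    monitor.record(predicted, actual)

print(monitor.accuracy())
print(monitor.degraded(baseline=0.95))
```

A short window reacts quickly but is noisy, while a long window is stable but slow to notice a drop, so the window size is worth tuning to your label volume.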

Essential Features in AI Monitoring Tools

Automated Alerting: Your Early Warning System

Automated alerting acts as your AI system’s smoke detector, notifying you the moment something goes wrong before it spirals into a full-blown crisis. Instead of manually checking dashboards around the clock, automated alerts proactively flag issues based on predefined thresholds and conditions you’ve set.

Think of it like this: if your recommendation model’s accuracy suddenly drops from 92% to 78%, you want to know immediately, not when customers start complaining about irrelevant suggestions. Automated alerts make this possible by continuously monitoring key metrics and sending notifications through channels like email, Slack, or PagerDuty when anomalies occur.

What should trigger alerts? Start with critical metrics like prediction accuracy drops, unusual inference latency spikes, data drift beyond acceptable ranges, error rate increases, or resource utilization reaching dangerous levels. For example, if your fraud detection model starts flagging 300% more transactions than usual, that’s either a genuine crisis or a model malfunction requiring immediate attention.

The key is balancing sensitivity with practicality. Too many alerts create alarm fatigue where teams start ignoring notifications. Too few means catching problems too late. Start conservative with alerts for severe issues, then refine as you understand your system’s normal behavior patterns.
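One simple way to limit alarm fatigue is a cooldown: after an alert fires, repeats of the same alert are suppressed for a while. The sketch below illustrates the idea. The `ThresholdAlert` class and its parameters are hypothetical, and `notify` stands in for whatever channel you actually use (email, Slack, PagerDuty).

```python
import time


class ThresholdAlert:
    """Fire when a metric exceeds a threshold, at most once per cooldown."""

    def __init__(self, name, threshold, cooldown_seconds=900, notify=print):
        self.name = name
        self.threshold = threshold
        self.cooldown = cooldown_seconds
        self.notify = notify  # placeholder for a real notification channel
        self._last_sent = None

    def observe(self, value, now=None):
        """Return True if a notification was sent for this observation."""
        now = time.time() if now is None else now
        if value <= self.threshold:
            return False
        if self._last_sent is not None and now - self._last_sent < self.cooldown:
            return False  # still breaching, but suppressed to avoid noise
        self._last_sent = now
        self.notify(f"ALERT {self.name}: {value} exceeds {self.threshold}")
        return True


sent = []
alert = ThresholdAlert("error_rate", threshold=0.05,
                       cooldown_seconds=600, notify=sent.append)
alert.observe(0.02, now=0)    # below threshold, nothing sent
alert.observe(0.09, now=10)   # first breach, notification sent
alert.observe(0.10, now=20)   # inside cooldown, suppressed
alert.observe(0.11, now=700)  # cooldown elapsed, sent again
print(len(sent))
```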

Visualization Dashboards That Actually Make Sense

A great monitoring dashboard tells a story at a glance. Instead of overwhelming you with dozens of scattered metrics, effective dashboards organize information into clear visual sections that answer specific questions: Is my model performing accurately? Are predictions happening on time? Is the input data shifting?

Think of your dashboard like a car’s instrument panel. Just as drivers need to see speed, fuel, and engine temperature without reading a manual, your AI dashboard should surface the most critical signals immediately. Start with your model’s core metrics—accuracy, precision, or whatever matters most for your use case—displayed prominently with historical trends to spot degradation over time.

Include data drift indicators that visualize how incoming data compares to your training data. Color-coded alerts work well here: green for normal operation, yellow for minor drift, red for significant changes requiring attention. Add prediction latency charts to catch performance slowdowns before users complain.
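The color coding described above comes down to mapping a drift score onto a few bands. A minimal sketch follows, assuming each feature already has a numeric drift score where higher means more drift. The `drift_status` helper, the feature names, the scores, and the 0.1 and 0.25 cutoffs are illustrative assumptions that you would tune for your own data.

```python
def drift_status(score, warn=0.1, critical=0.25):
    """Map a drift score to a dashboard color (thresholds are assumptions)."""
    if score >= critical:
        return "red"
    if score >= warn:
        return "yellow"
    return "green"


# Hypothetical per-feature drift scores, for illustration only.
feature_scores = {"age": 0.03, "order_total": 0.15, "device_type": 0.40}
panel = {name: drift_status(score) for name, score in feature_scores.items()}
print(panel)
```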

The best dashboards also show real-world impact. If you’re monitoring a recommendation system, display business metrics like click-through rates alongside technical metrics. This connection helps everyone understand why monitoring matters and makes it easier to justify resources when issues arise. Remember, if your team can’t understand your dashboard in under a minute, it needs simplification.

Integration Capabilities: Playing Well With Others

No AI monitoring tool operates in isolation. Think of it like a security camera system – it’s only truly valuable when it integrates with your alarm system, mobile alerts, and security service. The same principle applies to AI monitoring platforms.

Modern AI systems exist within complex technical ecosystems. Your monitoring solution needs to connect seamlessly with your existing infrastructure: data pipelines that feed your models, cloud platforms hosting your deployments, alerting systems that notify your team of issues, and dashboards where stakeholders track performance. Without these connections, you’re creating data silos that slow down problem resolution.

Key integration points include version control systems like Git (to track which model version caused an issue), data warehouses (to correlate model behavior with training data), alerting and incident response channels like PagerDuty or Slack (for real-time alerts), and business intelligence tools (to measure AI impact on business metrics). For example, when your fraud detection model suddenly flags 50% more transactions as suspicious, integrated monitoring can immediately pull up the recent code changes, alert the on-call engineer via Slack, and display the business impact on your revenue dashboard.

The best monitoring tools offer pre-built connectors and APIs, making integration straightforward rather than a months-long engineering project.

Popular AI Monitoring and Observability Tools Explained

Open-Source Options for Getting Started

Getting started with AI monitoring doesn’t require a hefty budget. Several powerful open-source tools offer excellent capabilities for beginners ready to monitor their first machine learning models in production.

Prometheus combined with Grafana creates a robust foundation for tracking basic model metrics. Prometheus excels at collecting and storing time-series data like prediction counts, response times, and error rates, while Grafana transforms this data into intuitive dashboards. This combination works beautifully for teams already familiar with infrastructure monitoring who want to extend their setup to include AI systems. Imagine watching your model’s prediction latency spike during peak hours—you’ll spot it immediately on a Grafana dashboard.

For Python-focused projects, WhyLabs offers an open-source library called whylogs that specializes in data quality monitoring. It generates lightweight statistical profiles of your data without storing raw information, making it privacy-friendly and efficient. This tool shines when you need to detect data drift—those subtle changes in incoming data that can silently degrade your model’s accuracy over time.

MLflow provides comprehensive experiment tracking and model registry features, making it ideal for data science teams managing multiple models. Think of it as your model’s lifecycle manager, tracking everything from training experiments to production deployments.

Each tool addresses different monitoring needs, so consider starting with the one that matches your immediate challenge. Many teams begin with Prometheus and Grafana for basic operational metrics, then add specialized tools like whylogs as their monitoring requirements grow more sophisticated.

Commercial Platforms for Growing Teams

As your AI projects mature and your team expands, free tools may start showing their limitations. This is where commercial platforms step in, offering capabilities specifically designed for production environments at scale.

Commercial solutions like Arize AI, Fiddler, and WhyLabs provide several advantages beyond basic monitoring. First, they offer pre-built integrations with popular machine learning frameworks, meaning you can start monitoring your models in hours rather than weeks. Think of it as buying a fully-equipped kitchen versus assembling one from scratch—both work, but one gets you cooking much faster.

These platforms excel at managing multiple models across different teams. Imagine a retail company running separate models for inventory forecasting, customer recommendations, and fraud detection. Commercial tools provide centralized dashboards where different teams can monitor their specific models while management gets a bird’s-eye view of all AI operations.

The real value comes through advanced features like automated drift detection with custom thresholds, root cause analysis tools that pinpoint why model performance degraded, and collaborative workflows that let data scientists and ML engineers work together seamlessly. Many platforms also include explainability features, helping you understand not just what your model predicted, but why—crucial for regulated industries like healthcare or finance.

Most commercial vendors offer tiered pricing based on prediction volume or number of models, making them accessible to mid-sized teams before reaching enterprise scale. Many provide free trials, letting you test whether the investment matches your team’s needs before committing your budget.

Choosing the Right Tool for Your Needs

Selecting the right monitoring tool depends on three key factors.

First, consider your project size. Small experimental projects may only need basic logging and experiment-tracking tools like the open-source MLflow or Weights & Biases (a hosted product with a free tier), while enterprise deployments often benefit from comprehensive platforms like Datadog or New Relic.

Second, evaluate your budget. Many tools offer free tiers that suit students and individual developers who want to learn without financial commitment. Teams with resources can invest in premium solutions offering advanced features like automated drift detection and custom dashboards.

Third, assess your technical expertise. If you’re just starting, choose tools with intuitive interfaces and strong documentation. Platforms like Azure Machine Learning Studio provide visual monitoring without requiring deep coding knowledge. More experienced practitioners might prefer customizable solutions like Prometheus paired with Grafana.

Start simple, monitor what matters most to your specific use case, and scale your toolset as your needs grow. Remember, the best monitoring solution is one you’ll actually use consistently.

Getting Started: Your First Steps in AI Monitoring

Start Small: The Minimum Viable Monitoring Setup

You don’t need a complex infrastructure to start monitoring your AI models effectively. Think of it like learning to cook—you don’t buy a professional kitchen before making your first meal. The same principle applies to AI monitoring.

Begin with these three essential elements that provide immediate value without overwhelming your setup. First, track your model’s prediction accuracy over time. Create a simple dashboard that shows how often your model’s predictions match actual outcomes. For example, if you’ve deployed a recommendation system, measure how many users click on suggested items each day. A sudden drop from 40% to 25% click-through rate signals something needs attention.

Second, monitor input data quality. Set up basic alerts for unusual patterns in incoming data. Imagine your fraud detection model suddenly receives transaction amounts in different currency formats—this simple inconsistency could throw off your entire system. A quick check comparing new data distributions against your training data catches these issues early.

Third, measure response times. Users expect AI features to work quickly. Track how long your model takes to generate predictions, and set a threshold alert. If your chatbot normally responds in 2 seconds but suddenly takes 10, you’ll know immediately.
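Response-time tracking can start as a decorator that records how long each prediction takes, plus a percentile check against a threshold. The sketch below is one way to do it with the standard library. `timed`, `p95`, `too_slow`, and the stand-in `predict` function are hypothetical names, and a real setup would send these samples to a metrics store instead of keeping them in a list.

```python
import statistics
import time
from functools import wraps

latencies_ms = []  # in-memory store, for illustration only


def timed(fn):
    """Record the wall-clock duration of each call in milliseconds."""
    @wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        finally:
            latencies_ms.append((time.perf_counter() - start) * 1000)
    return wrapper


def p95(samples):
    """95th percentile; statistics.quantiles needs at least two samples."""
    return statistics.quantiles(samples, n=20)[-1]


def too_slow(samples, threshold_ms):
    return p95(samples) > threshold_ms


@timed
def predict(text):
    # Stand-in for a real model call.
    return text.upper()


for message in ["hi", "hello", "help", "thanks", "bye"]:
    predict(message)

print(len(latencies_ms), too_slow(latencies_ms, threshold_ms=2000))
```

Alerting on a high percentile rather than the average keeps a handful of very slow requests from hiding behind many fast ones.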

These three metrics—accuracy, data quality, and speed—form your monitoring foundation. You can implement them using basic logging tools and spreadsheets before investing in specialized platforms. Start here, prove the value, then expand your monitoring as your AI system grows.
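The first element, comparing a tracked metric against its baseline, needs very little code. Here is a minimal sketch using the click-through example from above. The function names, the metric names, and the 15% tolerance are assumptions chosen for illustration.

```python
def relative_deviation(baseline, current):
    """Fractional change of `current` relative to `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return abs(current - baseline) / abs(baseline)


def check_against_baseline(baselines, current, tolerance=0.15):
    """Return names of metrics that moved more than `tolerance` from baseline."""
    breaches = []
    for name, base in baselines.items():
        if name in current and relative_deviation(base, current[name]) > tolerance:
            breaches.append(name)
    return breaches


# Hypothetical numbers for illustration only.
baselines = {"click_through_rate": 0.40, "p95_latency_s": 2.0}
production = {"click_through_rate": 0.25, "p95_latency_s": 2.1}
print(check_against_baseline(baselines, production))
```

Run on a schedule against numbers pulled from your logs, a check like this covers the “basic logging tools and spreadsheets” stage before you adopt a dedicated platform.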

Common Pitfalls and How to Avoid Them

Even experienced teams fall into predictable traps when starting their AI monitoring journey. The most common mistake? Monitoring everything at once. Picture a dashboard with hundreds of metrics flashing simultaneously—overwhelming, right? Instead, start small. Focus on three to five critical metrics that directly impact your users, like prediction latency or accuracy on core use cases. You can always expand later.

Another frequent pitfall is relying solely on accuracy metrics. Imagine a fraud detection model that’s 99% accurate but misses every actual fraud case because fraud only represents 1% of transactions. Without monitoring precision, recall, and real-world outcomes, you’d think everything was fine while problems escalated. Always pair performance metrics with business impact measurements.
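The arithmetic behind that example is easy to verify. The sketch below builds a synthetic dataset with 1% fraud and a “model” that never flags anything, then computes accuracy, precision, and recall by hand. The data and the `classification_metrics` helper are made up for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = fraud)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Report 0.0 when the denominator is zero (a convention, not a rule).
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Synthetic data: 10 fraud cases in 1,000 transactions, none of them flagged.
y_true = [1] * 10 + [0] * 990
y_pred = [0] * 1000

metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Accuracy comes out at 0.99 while recall is 0.0, which is exactly the failure that an accuracy-only dashboard would hide.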

Many teams also set static thresholds without considering natural variations. A customer service chatbot might show lower accuracy scores on Monday mornings when unusual weekend issues pour in, triggering false alarms. Use dynamic baselines that account for time-based patterns and seasonal trends instead of rigid cutoffs.
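A dynamic baseline can be as simple as keeping separate history for each weekday-and-hour slot and judging new values against that slot only. The sketch below shows one way to do this with a z-score. The `SeasonalBaseline` class, the `min_samples` and `z_threshold` defaults, and the accuracy figures are assumptions for illustration.

```python
import statistics
from collections import defaultdict


class SeasonalBaseline:
    """Compare a metric with past values from the same weekday and hour."""

    def __init__(self, min_samples=4, z_threshold=3.0):
        self.history = defaultdict(list)
        self.min_samples = min_samples
        self.z_threshold = z_threshold

    def add(self, weekday, hour, value):
        self.history[(weekday, hour)].append(value)

    def is_anomalous(self, weekday, hour, value):
        samples = self.history.get((weekday, hour), [])
        if len(samples) < self.min_samples:
            return False  # not enough history to judge this slot
        mean = statistics.fmean(samples)
        stdev = statistics.stdev(samples)
        if stdev == 0:
            return value != mean
        return abs(value - mean) / stdev > self.z_threshold


baseline = SeasonalBaseline()
# Hypothetical chatbot accuracy: lower on Monday 9:00, higher on Wednesday 14:00.
for value in [0.80, 0.82, 0.79, 0.81]:
    baseline.add("Mon", 9, value)
for value in [0.91, 0.92, 0.90, 0.93]:
    baseline.add("Wed", 14, value)

print(baseline.is_anomalous("Mon", 9, 0.80))   # typical for Monday morning
print(baseline.is_anomalous("Wed", 14, 0.80))  # unusual for Wednesday afternoon
```

The same reading of 0.80 is normal in one slot and alarming in another, which is the distinction a single static cutoff cannot make.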

Perhaps the biggest mistake is treating AI monitoring as a one-time setup. Models drift, user behavior changes, and new edge cases emerge constantly. Think of monitoring like tending a garden rather than building a fence—it requires ongoing attention. Schedule regular reviews of your monitoring strategy, update alert thresholds based on observed patterns, and continuously validate that you’re tracking what truly matters for your specific application.

Monitoring your AI systems isn’t just a technical nicety—it’s the foundation of building AI applications you can trust and improve over time. Throughout this guide, we’ve explored how AI monitoring extends beyond traditional software observability, addressing unique challenges like data drift, model performance degradation, and prediction bias that can silently erode your system’s value.

The good news? You don’t need to implement everything at once. Start small and iterate. Begin by establishing baseline metrics for your model’s performance, even if it’s just tracking accuracy or prediction latency in a simple dashboard. As you gain confidence, layer in data quality checks, then expand to drift detection and fairness monitoring. Remember, the teams successfully running AI in production didn’t build comprehensive monitoring overnight—they started with one metric and grew from there.

Think of monitoring as your AI system’s health checkup. Just as you wouldn’t deploy an application without logging or error tracking, your machine learning models deserve the same level of attention. The consequences of neglecting this—from biased hiring algorithms to chatbots that frustrate customers—are too significant to ignore.

Your next step is straightforward: choose one model currently in production or development, identify its most critical success metric, and set up a simple alert when that metric deviates from expected ranges. Whether you use an open-source tool or build something custom, taking that first step transforms you from someone who deploys models to someone who maintains reliable AI systems.

The AI landscape will continue evolving, but the fundamentals of good monitoring remain constant: know what matters, measure it consistently, and act when things drift. You’re now equipped to build AI systems that don’t just work today, but continue delivering value tomorrow.