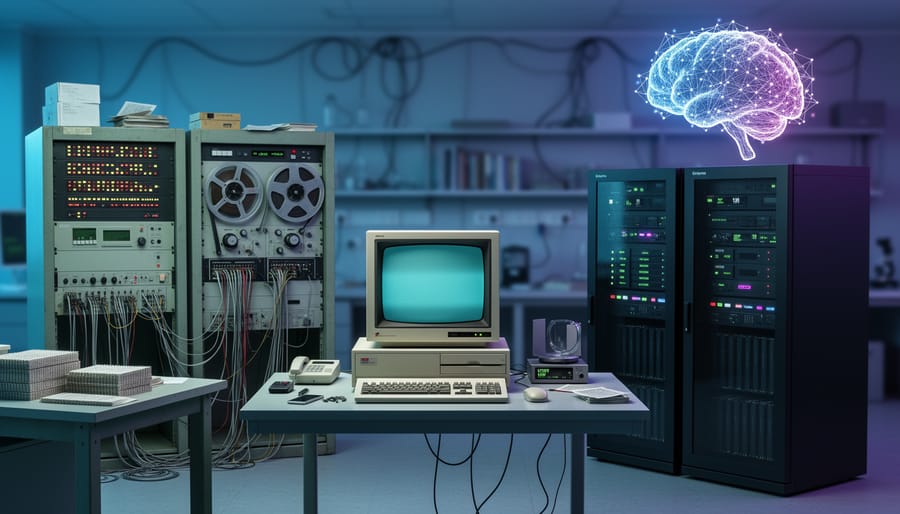

From the moment computer scientists first dreamed of thinking machines in the 1950s to today’s ChatGPT conversations and self-driving cars, artificial intelligence has transformed from theoretical mathematics into technology that touches billions of lives daily. This journey wasn’t a straight line of progress—it was a roller coaster of breakthrough moments, devastating failures nicknamed “AI winters,” and fundamental shifts in how we teach machines to understand our world.

At the heart of AI’s evolution lies a deceptively simple challenge: how do you make a computer “know” something? In the 1960s, researchers believed the answer was writing down everything as logical rules. By the 1980s, they tried capturing human expertise in elaborate knowledge bases. Today’s systems learn patterns from massive datasets without anyone explicitly programming rules at all. Each approach represents not just a technical shift, but a complete reimagining of what intelligence means and how to build it.

Understanding this timeline reveals why your smartphone can recognize your face but sometimes misunderstands simple questions, or why AI can beat world champions at chess yet struggle with tasks a toddler finds easy. The evolution of AI isn’t just about increasingly powerful computers—it’s about humanity’s evolving understanding of knowledge itself.

This article walks you through AI’s pivotal moments, from the symbolic reasoning systems that dominated early decades to the neural networks powering today’s revolution. You’ll discover how each era’s approach to representing knowledge shaped everything from medical diagnosis systems to the recommendation algorithms suggesting your next movie. Whether you’re curious about AI’s past or trying to understand where it’s headed, this timeline connects the dots between yesterday’s ambitious experiments and tomorrow’s possibilities.

The Birth of Machine Thinking: 1950s-1960s

Logic and Symbols Take Center Stage

In 1956, something remarkable happened that would shape the next two decades of AI research. Allen Newell, Herbert Simon, and Cliff Shaw created the Logic Theorist, a program that could prove mathematical theorems by manipulating symbols according to formal rules. This wasn’t just another computer program. It is often described as the first program built to mimic aspects of human reasoning, and it proved 38 of the first 52 theorems in chapter 2 of Whitehead and Russell’s Principia Mathematica.

The Logic Theorist worked by treating mathematical problems like puzzles. It started with known axioms (basic truths) and applied logical rules to transform them step by step until reaching the desired proof. Think of it like solving a Rubik’s cube: you have a starting position, a goal, and a set of valid moves to get there.

This success convinced researchers that human intelligence could be captured through formal logic and symbol manipulation—the symbolic AI foundations that dominated early AI development. The reasoning seemed sound: if humans use logic to solve problems, and we can encode logic into machines, then machines should be able to think like humans.

Following this breakthrough, researchers developed increasingly sophisticated theorem provers. The General Problem Solver (1959) attempted to solve any problem that could be expressed in symbolic logic. These systems worked well in controlled, rule-based environments like chess or mathematics.

Here’s a simple example of how these systems reasoned:

Given: All humans are mortal. Socrates is human.

The system would apply logical rules to conclude: Therefore, Socrates is mortal.
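The Socrates inference above can be sketched in a few lines of Python. This is a minimal illustration of rule application (often called forward chaining), not a reconstruction of the Logic Theorist or the General Problem Solver. The `forward_chain` function and the `(predicate, subject)` fact format are assumptions made for this example.

```python
# Toy forward chaining: facts are (predicate, subject) pairs and each rule
# (premise, conclusion) reads "anything that is <premise> is also <conclusion>".
# The data format is an illustrative assumption, not a historical design.

def forward_chain(facts, rules):
    """Apply every rule repeatedly until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, subject in list(known):
                derived = (conclusion, subject)
                if predicate == premise and derived not in known:
                    known.add(derived)
                    changed = True
    return known


facts = {("human", "Socrates")}   # "Socrates is human"
rules = [("human", "mortal")]     # "All humans are mortal"

derived = forward_chain(facts, rules)
print(("mortal", "Socrates") in derived)  # True
```

Every derived fact can be traced back to a rule and a starting fact, which is the verifiable, step-by-step quality that made this style of reasoning attractive.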

This approach seemed promising because it produced verifiable, step-by-step reasoning. However, researchers would soon discover that real-world problems rarely fit into such neat logical boxes.

The Promise and Limitations

The earliest AI systems emerging from pioneering AI research institutions in the 1950s and 60s showed remarkable promise in narrow domains. Programs like the Logic Theorist could prove mathematical theorems, while ELIZA (1966) led some users to feel they were conversing with a human therapist through clever pattern matching. These successes fueled tremendous optimism about creating machines that could truly think.

However, these systems faced significant limitations that would shape AI’s evolution for decades. They excelled only in controlled environments with clear rules. A chess-playing program couldn’t apply its logic to checkers, let alone real-world problems. Knowledge had to be explicitly programmed by hand, making systems brittle and inflexible. ELIZA’s conversations, while impressive initially, quickly revealed their superficiality when pushed beyond simple scripts.

The real challenge centered on knowledge representation: how could machines understand context, handle ambiguity, and apply learning across different situations? Early systems couldn’t distinguish between a bat (the animal) and a bat (the sports equipment) without explicit programming for each scenario. They lacked common sense, struggled with uncertainty, and required enormous human effort to encode even basic knowledge.

These limitations weren’t mere technical hurdles. They represented fundamental questions about intelligence itself. How do humans organize knowledge? How do we learn from experience? These questions would drive AI’s evolution through boom-and-bust cycles, pushing researchers to develop new approaches that could overcome these early constraints and move closer to truly intelligent systems.

Organizing Knowledge Like Humans Do: 1970s-1980s

Semantic Networks and Frames

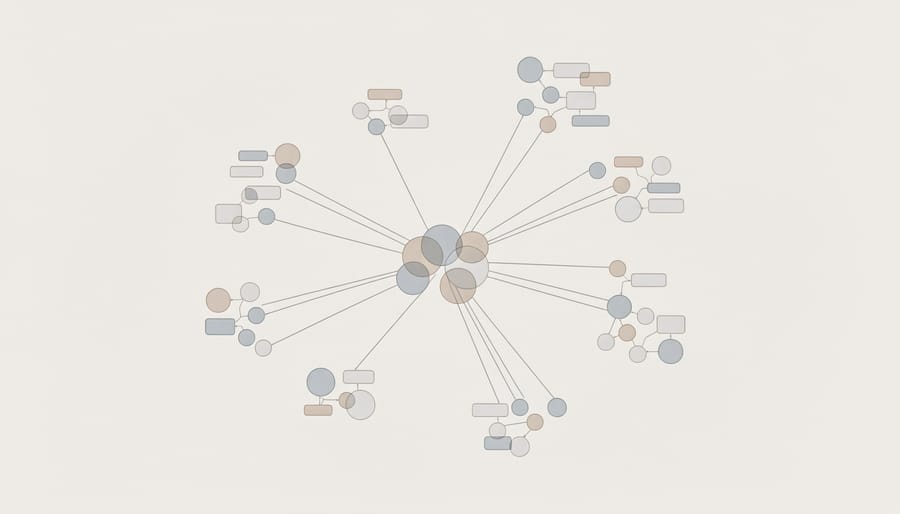

In the 1970s, AI researchers realized that simply storing facts wasn’t enough—computers needed to understand how concepts relate to each other, just like our minds do. Think about how you remember things: when someone mentions “restaurant,” your brain automatically connects it to related concepts like food, menus, waiters, and tables. This web of associations helps you understand context and make inferences. AI researchers wanted to replicate this human-like thinking.

Semantic networks emerged as a solution, visualizing knowledge as a map of interconnected nodes. Picture a subway map where each station represents a concept, and the lines between them show relationships. For example, a node labeled “bird” might connect to “can fly,” “has wings,” and “robin” through different relationship types. When the system needs to answer “Can a robin fly?” it simply follows the connections: robin IS-A bird, and birds CAN fly.
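The robin example can be sketched as a lookup that follows IS-A links upward. This is a minimal sketch: the dictionaries `ISA` and `PROPERTIES`, the function name `has_property`, and the extra "animal" node are assumptions added for illustration.

```python
# Toy semantic network. IS-A edges point from a concept to its parent;
# properties attach to the most general concept they apply to.
ISA = {"robin": "bird", "bird": "animal"}
PROPERTIES = {
    "bird": {"can fly", "has wings"},
    "animal": {"breathes"},   # hypothetical extra node for the example
}


def has_property(concept, prop):
    """Follow IS-A links upward until the property is found or the chain ends."""
    current = concept
    while current is not None:
        if prop in PROPERTIES.get(current, set()):
            return True
        current = ISA.get(current)  # None once we run out of parents
    return False


print(has_property("robin", "can fly"))   # True: robin IS-A bird, birds can fly
print(has_property("robin", "can swim"))  # False: no node on the chain says so
```

Note that the sketch has no way to express exceptions such as penguins, which is one of the well-known difficulties with simple inheritance networks.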

Building on this foundation, researchers developed frames in the mid-1970s, following Marvin Minsky’s 1974 proposal. Think of them as detailed templates for understanding specific situations. Imagine a “birthday party” frame as a form with standard fields: location, guests, cake, presents, and activities. When you hear about a birthday party, your brain automatically fills in expected details even if they’re not explicitly mentioned. AI frames worked similarly, allowing computers to make reasonable assumptions and understand context.
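A frame’s “fill in the expected details” behavior can be sketched as slots with default values. The `Frame` class below, its method names, and the default values are hypothetical, chosen only to mirror the birthday party example.

```python
class Frame:
    """A template with named slots; unfilled slots fall back to defaults."""

    def __init__(self, name, defaults=None, parent=None):
        self.name = name
        self.defaults = dict(defaults or {})
        self.parent = parent   # a more general frame to inherit from
        self.slots = {}        # values stated explicitly for this frame

    def set(self, slot, value):
        self.slots[slot] = value

    def get(self, slot):
        # Explicit value first, then this frame's default, then the parent's.
        if slot in self.slots:
            return self.slots[slot]
        if slot in self.defaults:
            return self.defaults[slot]
        if self.parent is not None:
            return self.parent.get(slot)
        return None


# Generic template with assumed default expectations.
birthday_party = Frame(
    "birthday party",
    defaults={"cake": "expected", "presents": "expected", "location": "unknown"},
)

# A specific party where only the location was mentioned.
sarahs_party = Frame("Sarah's party", parent=birthday_party)
sarahs_party.set("location", "the park")

print(sarahs_party.get("location"))  # the park (stated explicitly)
print(sarahs_party.get("cake"))      # expected (filled in from the template)
```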

These approaches powered early question-answering systems and helped computers interpret stories by filling in implied information. A system reading “Sarah went to a restaurant” could infer she probably ordered food, even without being told directly. This represented a significant leap from rigid rule-based systems, moving AI toward more flexible, human-like reasoning that could handle ambiguity and make logical connections between related concepts.

Expert Systems Revolution

By the 1970s, AI researchers had learned a crucial lesson from earlier setbacks: intelligence wasn’t just about clever algorithms or powerful reasoning engines. It was about knowledge itself. This realization sparked what became known as the expert systems revolution, where AI finally moved from research labs into solving real-world problems.

Expert systems worked on a simple but powerful principle: if you could capture the knowledge of human experts and represent it in a way computers could use, machines could make intelligent decisions in specialized fields. Instead of trying to create general intelligence, these systems focused on narrow domains where deep expertise mattered most.

DENDRAL, developed at Stanford University in the mid-1960s, pioneered this approach by helping chemists identify molecular structures. Scientists would feed the system mass spectrometry data, and DENDRAL would use its encoded chemical knowledge to determine what molecules were present. What made it remarkable was that it performed at the level of expert chemists, demonstrating that machines could genuinely assist in scientific discovery.

MYCIN took this concept even further in the medical field during the 1970s. This system diagnosed bacterial infections and recommended antibiotics by asking doctors a series of questions about the patient. MYCIN stored its medical knowledge as hundreds of “if-then” rules. For example: if the patient has a fever and the infection site is blood, then consider certain bacteria as likely culprits. In a Stanford evaluation, independent specialists rated MYCIN’s treatment recommendations acceptable in roughly two-thirds of cases, a score comparable to or better than those of the human physicians evaluated alongside it.
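The if-then structure described above can be sketched as data plus a small matcher. This is only an illustration of the rule format: the rule contents are placeholders taken from the example sentence, not real medical knowledge, and `RULES` and `matching_conclusions` are hypothetical names rather than anything from MYCIN itself.

```python
# Each rule pairs required findings ("if") with a conclusion ("then").
# The single rule below is a placeholder mirroring the example in the text.
RULES = [
    {
        "if": {"fever": True, "infection_site": "blood"},
        "then": "consider bloodstream bacteria as likely culprits",
    },
]


def matching_conclusions(patient, rules):
    """Return the conclusion of every rule whose conditions all hold."""
    return [
        rule["then"]
        for rule in rules
        if all(patient.get(key) == value for key, value in rule["if"].items())
    ]


patient = {"fever": True, "infection_site": "blood"}
print(matching_conclusions(patient, RULES))
```

Keeping the rules as data, separate from the code that applies them, is what let domain experts extend such systems without rewriting the reasoning engine.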

These successes proved that AI could deliver practical value. Companies began developing expert systems for everything from financial planning to equipment maintenance, marking AI’s first significant commercial wave.

The Knowledge Engineering Bottleneck: 1980s-1990s

When Hand-Coding Knowledge Became Impossible

By the mid-1980s, expert systems had become commercially successful, powering everything from medical diagnosis tools to financial planning software. But beneath the surface, AI researchers were encountering a fundamental problem: the world contains far too much knowledge to code by hand.

Consider what it takes to understand a simple sentence like “The trophy wouldn’t fit in the suitcase because it was too big.” Humans instantly know that “it” refers to the trophy, not the suitcase. We make this inference using common sense knowledge about physical objects and spatial relationships. An expert system would need explicit rules programmed for countless similar scenarios, and developers quickly realized this approach couldn’t scale.

The limitations became painfully clear during the AI winter of the late 1980s and early 1990s. Expert systems were brittle, breaking down whenever they encountered situations outside their narrow domains. Adding new knowledge meant manually writing more rules, which often conflicted with existing ones, creating a tangled web of logical contradictions.

Enter the CYC project, launched in 1984 by computer scientist Douglas Lenat. His team set out to solve the common sense problem once and for all by manually encoding everything a person knows about everyday life. They aimed to input millions of facts and rules: objects fall when dropped, people get angry when insulted, water flows downhill, stores close at night.

Thirty years and tens of millions of dollars later, CYC had accumulated over 25 million facts. Yet it still struggled with tasks that four-year-olds find trivial. The project demonstrated a harsh truth: human knowledge is vast, interconnected, and context-dependent in ways that defy systematic hand-coding.

This realization sparked a fundamental shift in AI thinking. If machines couldn’t be programmed with all necessary knowledge, perhaps they could learn it themselves from experience and data. This insight would prove transformative, though the technology to make it practical wouldn’t arrive until decades later.

The failure of hand-coded knowledge systems wasn’t just a setback. It redirected AI research toward pattern recognition, statistical methods, and eventually machine learning, setting the stage for the neural network revolution that would finally deliver on AI’s original promise.

Learning to Learn: 1990s-2000s

Statistical Methods Enter the Scene

By the 1980s and 1990s, AI researchers faced a harsh reality: their rule-based expert systems were too rigid. They couldn’t handle uncertainty or learn from new information. What if it rained lightly instead of heavily? What if a medical symptom appeared in only 70% of cases? The traditional systems simply couldn’t cope.

Enter statistical methods, which revolutionized machine knowledge representation by introducing probability and data-driven learning. Instead of programming every rule manually, researchers began teaching computers to learn patterns from examples.

Think of spam filters as a perfect example. Rather than listing every possible spam characteristic, modern filters analyze thousands of emails to learn what spam looks like. They assign probabilities: “This email is 95% likely to be spam based on similar patterns I’ve seen before.” The system becomes smarter with every email it processes.
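One common way to get such probabilities is a naive Bayes classifier, sketched below. The article does not say which algorithm any particular filter uses, so treat this as one possible approach. The four training messages are invented, and `train` and `spam_probability` are names chosen for this example.

```python
import math
from collections import Counter


def train(messages):
    """Count words per label from (text, label) pairs; label is 'spam' or 'ham'."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in messages:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts


def spam_probability(text, word_counts, label_counts):
    """Estimate P(spam | text) with naive Bayes and add-one smoothing."""
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total_messages = sum(label_counts.values())
    log_scores = {}
    for label in ("spam", "ham"):
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total_messages)  # prior
        for word in text.lower().split():
            # Add-one smoothing keeps unseen words from zeroing the product.
            score += math.log((word_counts[label][word] + 1) / (total_words + len(vocab)))
        log_scores[label] = score
    # Normalize the two log scores into a probability.
    top = max(log_scores.values())
    weights = {label: math.exp(s - top) for label, s in log_scores.items()}
    return weights["spam"] / (weights["spam"] + weights["ham"])


# Invented toy data; a real filter would learn from thousands of emails.
training = [
    ("win free money now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday", "ham"),
]
word_counts, label_counts = train(training)

print(spam_probability("free money", word_counts, label_counts) > 0.5)      # True
print(spam_probability("monday meeting", word_counts, label_counts) > 0.5)  # False
```

Adding more labeled messages changes the counts and therefore the probabilities, which is the sense in which such a system improves as it sees more data.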

This shift brought several game-changing advantages. Bayesian networks allowed AI to reason with uncertainty, much like humans do. Machine learning algorithms could discover patterns humans might miss entirely. Systems became adaptive, improving their knowledge automatically as they encountered new data.

The approach wasn’t without challenges. Statistical methods required massive amounts of training data and significant computing power. Early implementations struggled with the computational demands. However, as data collection improved and processors became more powerful throughout the 2000s, these methods proved transformative.

This probabilistic revolution laid the groundwork for modern AI breakthroughs, from voice assistants understanding accents to recommendation systems predicting your preferences with uncanny accuracy.

The Rise of Ontologies and the Semantic Web

As the internet exploded in the late 1990s and early 2000s, a new problem emerged: computers could display information beautifully for humans, but they couldn’t understand what that information actually meant. A webpage about “Paris” could refer to the French capital, Paris Hilton, or the mythological prince of Troy, and machines had no way to tell the difference.

This challenge sparked the development of the Semantic Web, a vision where data on the internet would be structured so machines could interpret and connect information intelligently. Think of it as giving the web a brain that could understand relationships between concepts, not just display text and images.

To make this happen, researchers developed standardized languages for describing knowledge. Resource Description Framework (RDF) emerged as a way to express information in simple subject-predicate-object statements, like “Paris is-capital-of France.” Web Ontology Language (OWL) took this further, allowing developers to create detailed vocabularies that defined concepts and their relationships in specific domains.
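The subject-predicate-object idea can be sketched with plain Python tuples. This is not RDF syntax and uses no RDF library. `TRIPLES` and `query` are names made up for the example, and every triple other than “Paris is-capital-of France” is an assumption added to give the query something to filter.

```python
# Each fact is a (subject, predicate, object) tuple.
TRIPLES = {
    ("Paris", "is-capital-of", "France"),
    ("Paris", "is-a", "City"),        # assumed extra fact
    ("France", "is-a", "Country"),    # assumed extra fact
}


def query(triples, subject=None, predicate=None, obj=None):
    """Return triples matching the pattern; None acts as a wildcard."""
    return sorted(
        t for t in triples
        if (subject is None or t[0] == subject)
        and (predicate is None or t[1] == predicate)
        and (obj is None or t[2] == obj)
    )


# "What is the capital of France?"
print(query(TRIPLES, predicate="is-capital-of", obj="France"))
# [('Paris', 'is-capital-of', 'France')]

# "What do we know about Paris?"
print(query(TRIPLES, subject="Paris"))
```

Because every statement has the same three-part shape, data from different sources can be merged into one set and queried uniformly, which is the core appeal of the triple model.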

These technologies enabled practical applications we use today. When you search for “Albert Einstein” and Google immediately shows you his birth date, notable works, and related scientists without you clicking anywhere, that’s structured data at work. E-commerce sites use these ontologies to help you find products by connecting related features and categories.

While the grand vision of a fully semantic web hasn’t been completely realized, these knowledge representation tools became foundational building blocks. They taught AI systems how to organize information in ways that made relationships and context explicit, paving the way for smarter search engines and ultimately influencing how modern AI assistants understand and respond to our questions.

Deep Learning Rewrites the Rules: 2010s-Present

Word Embeddings and Vector Spaces

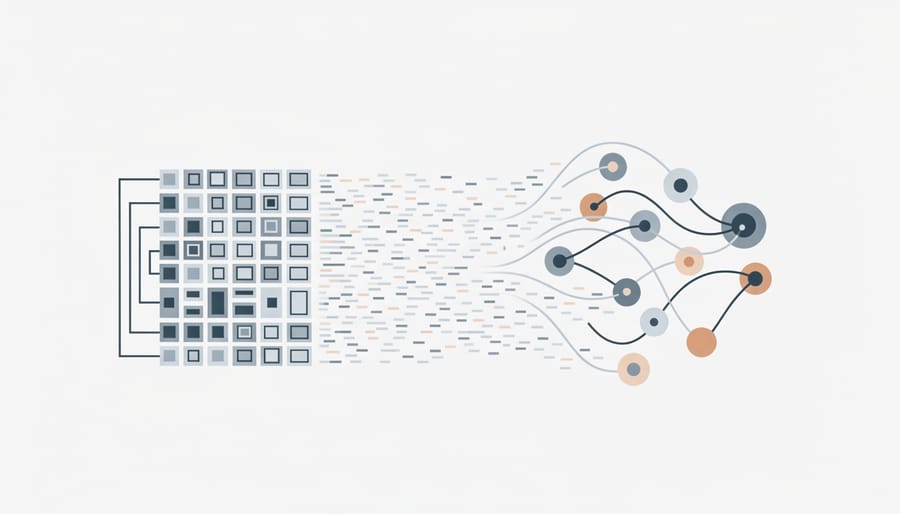

Around 2013, word embeddings became a practical, widely adopted way to give machines a numerical handle on what words mean. Instead of treating each word as an isolated symbol, these techniques represent words as coordinates in mathematical space.

Think of it like mapping a city. Just as you can describe any location using latitude and longitude, word embeddings assign each word a unique position in a high-dimensional space—sometimes using hundreds of coordinates. Words with similar meanings naturally cluster together, while unrelated words stay far apart.

The most famous example came from word2vec, a Google project that stunned researchers with its ability to capture meaningful relationships. Take the vector for “king,” subtract “man,” add “woman,” and the nearest word to the result is “queen.” This wasn’t programmed. The model picked up the pattern by analyzing large amounts of text and noticing how words appeared in similar contexts.

Here’s how it works in practice: The system reads countless sentences and notices that “king” and “queen” appear in similar situations, just with different gendered pronouns. It learns that “Paris” relates to “France” the same way “Tokyo” relates to “Japan.” These patterns become mathematical relationships that computers can calculate.
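The arithmetic can be tried on a toy scale. The two-dimensional vectors below are invented by hand so the example stays readable. Real embeddings are learned from text and have hundreds of dimensions, and `analogy` is a hypothetical helper, not a word2vec API.

```python
import math

# Hand-made toy vectors: [royalty, gender]. These numbers are invented,
# not learned, and exist only to show the vector arithmetic.
VECTORS = {
    "king":  [0.9,  0.8],
    "queen": [0.9, -0.8],
    "man":   [0.1,  0.8],
    "woman": [0.1, -0.8],
    "apple": [-0.5, 0.1],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def analogy(start, minus, plus, vectors):
    """Compute start - minus + plus and return the nearest remaining word."""
    target = [
        s - m + p
        for s, m, p in zip(vectors[start], vectors[minus], vectors[plus])
    ]
    candidates = [w for w in vectors if w not in (start, minus, plus)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))


print(analogy("king", "man", "woman", VECTORS))  # queen
```

As in real systems, the answer is the nearest word to the computed point rather than an exact match, and the input words are excluded from the search.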

This approach solved a critical problem. Earlier AI systems treated each word as completely separate, unable to recognize that “car” and “automobile” mean essentially the same thing. Word embeddings gave machines something closer to understanding—not true comprehension, but a numerical representation of meaning that could power new applications.

This vector-based approach became foundational for modern AI. Search engines use it to understand what you’re really asking. Translation tools map relationships between languages. Recommendation systems suggest products based on semantic similarity. By converting meaning into mathematics, researchers finally gave AI systems a way to work with concepts rather than just matching keywords.

Transformers and Knowledge Graphs Combined

Today’s most powerful AI systems bring together two approaches that once seemed incompatible. Transformer models such as BERT and GPT-4 (the model family behind ChatGPT) supply the pattern-recognition prowess of neural networks, and they are increasingly paired with structured ways of organizing information that echo the early breakthroughs in symbolic AI.

Knowledge graphs, which organize information as interconnected nodes and relationships, are increasingly being integrated with transformer architectures. Think of knowledge graphs as structured maps of facts: “Paris is the capital of France” or “Einstein developed the theory of relativity.” These graphs provide explicit, verifiable knowledge that neural networks can reference and reason over.

What makes transformers revolutionary is their attention mechanism, which allows them to understand context and relationships between words. When BERT reads a sentence, it doesn’t just process words sequentially. Instead, it examines how each word relates to every other word, building an internal representation of meaning. This creates something resembling a dynamic knowledge graph within the network itself.
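The “each word relates to every other word” step can be sketched as scaled dot-product self-attention. This is a deliberately stripped-down version: real transformers apply learned projection matrices to produce separate queries, keys, and values and use many attention heads, whereas this sketch uses the input vectors directly for all three. The toy token vectors are invented.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Simplified self-attention with queries = keys = values = the inputs."""
    dim = len(vectors[0])
    outputs, weights = [], []
    for query in vectors:
        # Score this token against every token, including itself.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        weights.append(row)
        # The output is a weighted average of all token vectors.
        outputs.append(
            [sum(w * value[i] for w, value in zip(row, vectors)) for i in range(dim)]
        )
    return outputs, weights


# Three invented 2-D token vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one token attends to the others. The first token gives more weight to the third token than to the second, because their vectors overlap more.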

Recent innovations combine both worlds. Systems like Google’s Knowledge Graph-enhanced search use traditional structured databases alongside neural networks. The knowledge graph provides factual accuracy, while the neural network handles natural language understanding and generation.

Consider how ChatGPT answers a question about historical events. It draws on patterns learned during training, but researchers are now enhancing such models with explicit knowledge graphs and retrieved documents to reduce errors and improve factual reliability. Microsoft’s Bing AI, for example, grounds GPT models in results from its search index so that responses can point to their sources.

This hybrid approach addresses a critical limitation: neural networks alone can hallucinate false information, while pure symbolic systems struggle with ambiguity and context. Together, they create AI systems that are both knowledgeable and conversationally fluent, representing the current pinnacle of knowledge representation evolution.

What This Evolution Means for You

How Today’s AI Uses These Lessons

The evolution of knowledge representation directly powers the AI tools you use every day, often in ways you might not realize.

When you ask ChatGPT a question, you’re benefiting from decades of progress in how machines understand and organize information. Early AI systems could only answer questions they were explicitly programmed to handle. ChatGPT, however, uses transformer-based neural networks that learned patterns from massive amounts of text, allowing it to grasp context, nuance, and relationships between concepts. It doesn’t just match keywords—it understands that “bank” means something different when discussing rivers versus finance.

Google Search has transformed dramatically from simple keyword matching to semantic understanding. Modern search algorithms use knowledge graphs, which represent information as interconnected networks of entities and relationships. When you search for “Tom Hanks movies,” Google doesn’t just find pages containing those words. It understands Tom Hanks as an actor, recognizes his filmography, and can show you related information about his co-stars, directors, and awards. This semantic approach emerged from combining symbolic AI’s structured knowledge with statistical learning methods.

Netflix and Spotify recommendations demonstrate how hybrid knowledge representation works in practice. These systems combine rule-based logic (if someone watches action movies, suggest similar titles) with neural networks that detect subtle patterns in viewing habits across millions of users. The result feels almost magical—recommendations that seem to know your taste better than you do.

Virtual assistants like Siri and Alexa blend multiple representation techniques simultaneously. They use natural language processing to understand your words, knowledge bases to retrieve factual information, and machine learning to improve over time. When you ask about weather, they’re accessing structured databases, parsing your location, and presenting information conversationally.

These technologies are quietly reshaping our digital world, making interactions more intuitive and personalized. The journey from LISP programs to today’s sophisticated AI wasn’t just about faster computers—it was about discovering better ways to help machines represent and reason about the knowledge that makes them useful.

From the rigid, rule-based systems of the 1950s to today’s flexible neural networks, artificial intelligence has traveled an extraordinary path. What began as simple if-then logic trees—where computers could only follow explicitly programmed instructions—has blossomed into systems that learn patterns from experience, much like we do.

This evolution wasn’t just about building faster computers. It was fundamentally about solving a puzzle: how do we help machines represent and use knowledge? Early symbolic AI excelled at chess and mathematical proofs because rules were clear-cut. But these systems stumbled when facing the messy, ambiguous real world—like recognizing a cat in different lighting or understanding sarcasm in conversation.

The shift to neural networks and deep learning changed everything. Instead of programming every rule, we now let AI discover patterns in data. This approach powers the voice assistants on your phone, the recommendation systems you use daily, and the image recognition tools that organize your photos automatically.

But here’s the exciting part: we’re not abandoning decades of symbolic AI research. The future lies in hybrid approaches that combine the best of both worlds. Imagine systems that learn like neural networks but can also explain their reasoning using symbolic logic—AI that’s both intuitive and transparent.

As you’ve seen throughout this timeline, AI progress often came from rethinking fundamental assumptions about knowledge itself. Each breakthrough—from expert systems to transformers—represented a new answer to that core question: how should machines understand their world?

Whether you’re a student just starting your AI journey or a professional keeping pace with the field, understanding this evolution helps you appreciate not just where AI is heading, but why certain approaches work better for specific problems. The story continues, and you’re now part of it.