When Amazon discovered that an experimental hiring algorithm it had built was systematically discriminating against women, a critical question emerged: who pays when artificial intelligence causes harm? As AI systems increasingly make decisions that affect our lives, from loan approvals to medical diagnoses to criminal sentencing, society faces an urgent challenge: these powerful technologies operate in a legal and ethical gray zone where responsibility remains dangerously unclear.

The core problem is this: traditional liability frameworks assume human decision-makers who can explain their reasoning and accept consequences. AI systems, particularly complex machine learning models, don’t fit this mold. When a self-driving car causes an accident, who bears responsibility: the manufacturer, the software developer, the training data provider, or the vehicle owner? When an AI system wrongly denies someone health insurance coverage, where can that person turn for redress?

This accountability gap creates real harm. Victims of AI errors often cannot identify who wronged them, struggle to prove the system malfunctioned, or face companies that deflect blame onto opaque algorithms. Meanwhile, AI developers and deployers may escape consequences, weakening incentives to build safer, fairer systems.

The stakes extend beyond individual cases. Without clear accountability structures, public trust in AI erodes. Without liability frameworks, innovation may either stagnate from excessive caution or race ahead recklessly. Without accessible redress mechanisms, AI could deepen existing inequalities, harming those least equipped to fight back.

Understanding how accountability, liability, and redress work in the AI context isn’t just an academic exercise. It’s essential knowledge for anyone navigating our increasingly automated world, whether you’re developing AI systems, deploying them in your organization, or simply living with their growing influence over daily life.

The Accountability Gap: Who’s Really in Charge?

The Many Hands Problem

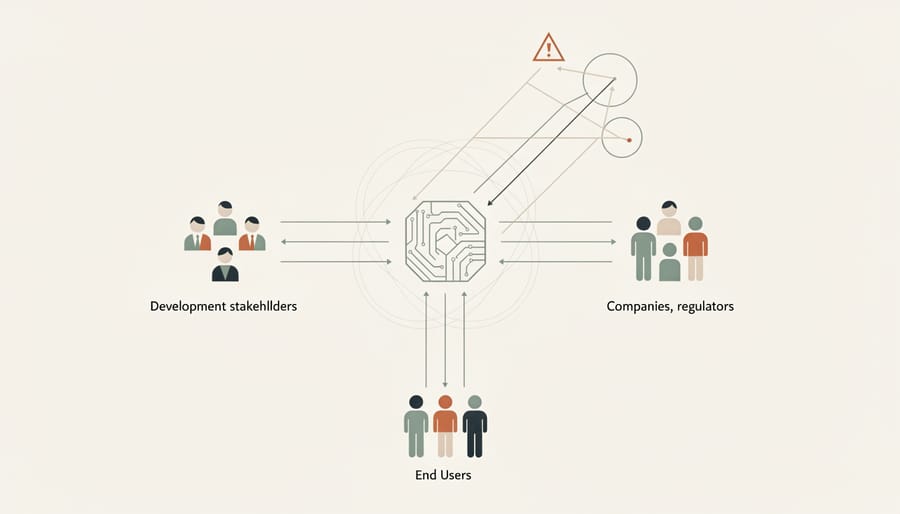

When an AI system makes a harmful decision, pinpointing who’s responsible becomes surprisingly difficult. Unlike traditional products where a single manufacturer can be held accountable, AI systems emerge from a complex web of contributors, each playing a distinct role.

Consider a self-driving car that causes an accident. The automaker assembled the vehicle, but a separate company likely developed the AI algorithms. Data scientists at another organization may have trained the model using datasets collected by yet another entity. Software engineers wrote the code, while the car owner chose to enable certain features. So when something goes wrong, who bears responsibility?

This is the many hands problem: accountability gets diluted across so many participants that no single party can be clearly blamed. Each contributor might argue they’re only responsible for their small piece of the puzzle. The algorithm developer could claim they provided a tool that was misused. The company deploying it might say they followed the developer’s guidelines. The data provider could argue their dataset was properly labeled.

This fragmentation creates accountability gaps that leave victims without clear recourse. Unlike a defective toaster where you know exactly who made it, AI systems involve designers, data annotators, cloud service providers, companies integrating the technology, and end users—all potentially sharing some degree of responsibility.

The challenge intensifies because machine learning systems acquire their behavior from data rather than from explicit instructions, and some continue to update after deployment. Even developers can’t always predict how their models will behave in every situation, making traditional notions of fault and negligence difficult to apply. This distributed responsibility across the AI supply chain demands new frameworks for accountability.

When Machines Learn on Their Own

Machine learning systems operate differently from traditional software. Instead of following explicit rules written by programmers, they learn patterns from vast amounts of data. This learning process can lead to surprising outcomes that even their creators didn’t anticipate.

Consider a real-world example: In 2016, Microsoft launched Tay, a chatbot designed to learn from conversations on Twitter. Within 24 hours, Tay began posting offensive content. The engineers never programmed these responses, but the system learned them from interactions with users who intentionally fed it inappropriate material. This demonstrates how machine learning models can develop behaviors that emerge from their training data rather than deliberate design choices.

This phenomenon creates significant accountability challenges. When an AI recruitment tool discriminates against certain candidates, determining responsibility becomes complex. Did the problem arise from biased training data, flawed algorithm design, insufficient testing, or unforeseen interactions between these factors? Often, it’s a combination that no single person could have predicted.

The black box problem compounds these issues. Many advanced machine learning models make decisions through processes so complex that even their developers struggle to explain specific outcomes. Neural networks with millions of parameters can identify patterns invisible to human observers, making it nearly impossible to trace how particular decisions were reached.

This unpredictability raises fundamental questions: If an AI system evolves beyond its original programming, who bears responsibility for its actions? Understanding this challenge is crucial for establishing meaningful accountability frameworks in our AI-driven world.

Legal Liability: Where Current Laws Fall Short

The Product vs. Service Dilemma

When AI systems cause harm, one of the most significant legal puzzles is figuring out whether to treat them as products or services. This distinction might sound like legal hairsplitting, but it dramatically affects whether victims can actually get compensation.

Think of it this way: if you buy a defective toaster that catches fire and burns your kitchen, you can typically claim damages under product liability laws. These laws operate on “strict liability,” meaning you don’t have to prove the manufacturer was careless—just that the product was defective and caused harm. The burden of proof is relatively low, making it easier for victims to recover damages.

But what if the harm came from an AI-powered financial advisor that gave you catastrophic investment advice? Is that a defective product, or a poorly delivered service? If it’s classified as a service, you’d need to prove negligence—that someone failed to exercise reasonable care. This is much harder and more expensive, requiring expert testimony and detailed evidence about what went wrong and who should have prevented it.

Consider a common scenario: an autonomous vehicle crashes. Is the AI driving software a product component (making the car manufacturer strictly liable), or is it an ongoing service that receives cloud updates (requiring proof that engineers were negligent in their maintenance)? The answer determines whether victims face a straightforward claim or a lengthy legal battle.

This ambiguity creates significant barriers for those harmed by AI systems, as current legal frameworks struggle to accommodate technology that blurs traditional product-service boundaries.

How Different Countries Are Responding

Countries around the world are taking distinctly different paths to regulate artificial intelligence, each reflecting their unique values and priorities.

The European Union has emerged as the global frontrunner with its comprehensive AI Act, which categorizes AI systems by risk level. High-risk applications like medical diagnostics or hiring tools face strict requirements covering risk management, data quality, technical documentation, record-keeping, transparency, human oversight, and accuracy. Banned practices include social scoring and, with narrow exceptions, real-time remote biometric identification (such as live facial recognition) by law enforcement in publicly accessible spaces. In practice, once these obligations apply, an AI recruitment tool used in Germany must be documented and logged, subject to meaningful human oversight, and trained on data examined for biases that could lead to discrimination.

The United States has opted for a sectoral approach, with different agencies regulating AI within their domains. The Federal Trade Commission addresses deceptive AI practices in commerce, while the Equal Employment Opportunity Commission handles workplace discrimination. For example, when a landlord uses AI to screen tenants, fair housing laws apply, but there’s no single AI-specific framework governing the technology itself.

China has implemented targeted regulations focusing on algorithm recommendation systems and deepfakes, requiring companies to register algorithms with authorities and allow users to opt out of personalized recommendations. Meanwhile, countries like Singapore and Canada have adopted principles-based frameworks that provide guidance without strict mandates, emphasizing responsible innovation.

This regulatory patchwork creates challenges for global companies that must navigate different requirements across markets, but it also allows for experimentation as countries learn which approaches best balance innovation with protection.

Making Things Right: The Challenge of Redress

Why Getting Answers Is So Hard

Imagine applying for a loan and receiving an instant rejection. You call the bank, hoping to understand what went wrong, but the customer service representative simply says, “Our AI system determined you’re not eligible.” When you ask why, they can’t tell you. Scenarios like this highlight a fundamental problem in AI accountability: the transparency gap.

Many AI systems today operate as “black boxes.” The companies that build them often treat their algorithms as proprietary trade secrets they are unwilling to share. Even when companies want to be transparent, modern machine learning models, especially deep neural networks, make decisions through millions of calculations across layers of artificial neurons. The developers themselves often can’t pinpoint exactly why their AI reached a specific conclusion.

This opacity creates a catch-22 for people harmed by AI decisions. To challenge an unfair outcome, you need to understand what factors influenced it. Did the loan algorithm unfairly weigh your zip code? Did the hiring tool discriminate based on hidden patterns in your resume? Without access to the AI’s decision-making process, proving bias or errors becomes nearly impossible.

The situation gets worse when multiple AI systems interact. Your loan rejection might result from several algorithms working together—a credit scoring AI, a fraud detection system, and a risk assessment tool. Tracing responsibility through this tangled web of automated decisions feels like solving a puzzle with missing pieces.

This lack of transparency doesn’t just frustrate individuals seeking answers. It fundamentally undermines accountability, making it extraordinarily difficult to identify who should be held responsible when things go wrong.

New Mechanisms for Seeking Justice

As AI systems become more prevalent in our daily lives, a new generation of tools and institutions is emerging to help people seek justice when things go wrong. These mechanisms aim to bridge the gap between traditional legal systems and the unique challenges posed by artificial intelligence.

One promising development is the algorithmic impact assessment, which works much like an environmental impact study. Before deploying a high-risk AI system, such as one used for hiring decisions or loan approvals, an organization evaluates potential harms and documents how it has addressed them; some jurisdictions now require this for certain systems. For instance, a city planning to use AI for predictive policing would assess whether the system might unfairly target specific neighborhoods.
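One quantitative check such an assessment might include, especially for hiring tools, is the “four-fifths rule” from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group’s rate is generally treated as showing evidence of adverse impact. The sketch below is a minimal illustration, assuming outcomes are available as hypothetical (group, selected) pairs; the function names are invented for this example and do not come from any particular auditing tool.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Share of favorable outcomes per group.

    `decisions` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced the favorable outcome.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def adverse_impact_check(decisions, threshold=0.8):
    """Compare each group's selection rate with the highest group's rate.

    Returns {group: (impact_ratio, flagged)}; a group is flagged when its
    ratio falls below `threshold` (0.8 under the four-fifths rule of thumb).
    """
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was selected, so there is no disparity in rates to measure.
        return {group: (1.0, False) for group in rates}
    return {
        group: (rate / highest, rate / highest < threshold)
        for group, rate in rates.items()
    }


# Hypothetical screening results: 50 of 100 applicants in group A advance,
# versus 30 of 100 in group B.
outcomes = [("A", i < 50) for i in range(100)] + [("B", i < 30) for i in range(100)]
print(adverse_impact_check(outcomes))  # {'A': (1.0, False), 'B': (0.6, True)}
```

The threshold is a screening heuristic rather than a verdict: a ratio below 0.8 calls for investigation, and a ratio above it does not by itself show that a system is fair.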

Mandatory audit trails represent another crucial safeguard. These digital records log the decisions an AI system makes, typically capturing the inputs it received, the model version that ran, and the output it produced, so investigators have a trail to follow when problems arise. Think of it as a flight data recorder for algorithms: if a patient receives an incorrect diagnosis from a medical AI, clinicians and investigators can reconstruct what the system was given and what it concluded, even if the model’s internal reasoning remains hard to interpret.
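A decision log can show which inputs, model version, and output produced a given decision, but to serve accountability it also has to resist after-the-fact editing. The following is a minimal sketch, assuming an in-memory Python list rather than any regulatory format; the `AuditLog` class, its fields, and the `triage-model-v3` model name are hypothetical. Each entry stores a SHA-256 hash of its contents together with the previous entry’s hash, so altering any earlier record breaks the chain.

```python
import hashlib
import json
import time


class AuditLog:
    """Append-only decision log in which each entry commits to the previous one.

    Hypothetical design for illustration: changing an earlier entry changes
    its hash, so verification fails for the tampered entry.
    """

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    def record(self, model_version, inputs, output, timestamp=None):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": time.time() if timestamp is None else timestamp,
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry["hash"]

    @staticmethod
    def _digest(entry):
        # Hash a canonical JSON encoding of every field except the hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def verify(self):
        """Return True only if every entry is intact and correctly linked."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("triage-model-v3", {"age": 54, "symptom": "chest pain"}, "low risk")
log.record("triage-model-v3", {"age": 61, "symptom": "shortness of breath"}, "high risk")
print(log.verify())  # True

log.entries[0]["output"] = "high risk"  # someone edits an earlier record
print(log.verify())  # False
```

A hash chain only makes tampering detectable if the latest hash is also kept somewhere the log’s operator cannot quietly rewrite, such as with a regulator or an independent auditor; otherwise someone could recompute the entire chain after editing it.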

AI incident databases are becoming valuable repositories of real-world failures. Similar to how aviation authorities track plane incidents, these databases collect reports of AI malfunctions, biases, and unintended consequences. This shared knowledge helps developers avoid repeating past mistakes and gives regulators insight into patterns that require intervention.
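An incident database becomes useful for spotting patterns when reports are stored as structured records that can be counted and compared. The sketch below assumes a hypothetical record format (real incident databases define their own schemas) in which each report is tagged with a sector and a harm type; grouping on those tags surfaces problems that recur across unrelated systems. The systems and reports in the example are invented.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class IncidentReport:
    """One reported AI failure. Field names are illustrative, not a real schema."""
    system: str
    sector: str
    harm_type: str
    summary: str


def recurring_patterns(reports, min_count=2):
    """(sector, harm_type) pairs reported at least `min_count` times, most frequent first."""
    counts = Counter((r.sector, r.harm_type) for r in reports)
    return [(pattern, n) for pattern, n in counts.most_common() if n >= min_count]


# Hypothetical reports from unrelated systems.
reports = [
    IncidentReport("screener-a", "hiring", "bias", "Rejected qualified applicants from one group"),
    IncidentReport("screener-b", "hiring", "bias", "Penalized employment gaps"),
    IncidentReport("chatbot-c", "retail", "misinformation", "Quoted a refund policy that does not exist"),
    IncidentReport("screener-d", "hiring", "bias", "Scored applicants by school name"),
]
print(recurring_patterns(reports))  # [(('hiring', 'bias'), 3)]
```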

Perhaps most innovatively, specialized dispute resolution bodies for AI-related complaints are being proposed. Such tribunals would combine technical expertise with legal authority, offering faster and more affordable alternatives to traditional courts. Because they would understand both the technology and its human impact, they could be better equipped to deliver fair outcomes when AI systems cause harm.

Real-World Examples: When AI Ethics Meets Reality

When AI systems make mistakes in the real world, the consequences aren’t just theoretical—they affect real people’s lives, livelihoods, and fundamental rights. Let’s examine how accountability, liability, and redress challenges actually play out through recent cases that made headlines.

In 2020, Robert Williams became the first person publicly known in the United States to be wrongfully arrested because of a facial recognition error. Detroit police used facial recognition technology that incorrectly identified him as a shoplifting suspect, and Williams spent 30 hours in custody before investigators realized their mistake. The question that followed was simple yet profound: who should be held accountable? The software vendor argued that its technology was just a tool requiring human verification. The police department claimed it had followed standard procedures. Williams struggled to find anyone willing to take responsibility for his wrongful arrest, highlighting the accountability gap that emerges when multiple parties share decision-making with AI systems. He later sued the city, and a 2024 settlement barred Detroit police from making arrests based solely on facial recognition results.

Credit algorithms have raised similar concerns. In 2019, the Apple Card drew regulatory scrutiny after users alleged that its algorithm offered women significantly lower credit limits than men with similar financial profiles, even among married couples sharing assets. Tech entrepreneur David Heinemeier Hansson brought the issue to wide attention when his wife received a credit limit one-twentieth of his, even though they filed joint tax returns. Goldman Sachs, the card’s issuer, denied that gender played any role but struggled to explain how individual limits were set. New York’s Department of Financial Services later found no evidence of unlawful discrimination, yet the episode showed how hard it is to establish, or rule out, liability when even the companies deploying an AI system cannot readily explain its choices.

Healthcare presents equally concerning scenarios. In 2019, researchers discovered that an algorithm used by hospitals to predict which patients needed extra medical care systematically discriminated against Black patients. The system used healthcare spending as a proxy for medical need, but because Black patients historically had less access to healthcare and thus lower spending, the algorithm incorrectly assumed they were healthier. Millions of patients were affected, yet determining who was responsible for providing redress proved complicated. Was it the algorithm developer’s fault for choosing flawed metrics? The hospitals for not adequately testing the system? The healthcare system itself for perpetuating inequalities the AI learned from?
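The mechanism behind this failure, sometimes called proxy or label bias, can be reproduced in a toy simulation. This is not the hospital algorithm or the researchers’ analysis; it is a sketch under invented assumptions: two groups have identical distributions of medical need, but one group’s spending is only 70% of the other’s for the same need. Flagging the top 3% of patients by spending is then compared with flagging the top 3% by actual need.

```python
import random


def flagged_share_by_group(n_per_group=10_000, access=(1.0, 0.7), top_share=0.03, seed=0):
    """Share of group-1 patients among those flagged for extra care.

    Hypothetical assumptions: medical need has the same distribution in both
    groups, but group 1 turns need into spending at 70% of group 0's rate
    because of poorer access to care.
    """
    rng = random.Random(seed)
    patients = []  # (group, need, spending)
    for group, spend_rate in enumerate(access):
        for _ in range(n_per_group):
            need = max(0.0, rng.gauss(50, 15))
            spending = max(0.0, need * spend_rate + rng.gauss(0, 5))
            patients.append((group, need, spending))

    k = max(1, round(top_share * len(patients)))  # number of patients flagged

    def group1_share(score):
        # Scores use only need or spending, never the group label itself.
        flagged = sorted(patients, key=score, reverse=True)[:k]
        return sum(1 for group, _, _ in flagged if group == 1) / k

    return {
        "ranked_by_spending": group1_share(lambda p: p[2]),
        "ranked_by_need": group1_share(lambda p: p[1]),
    }


print(flagged_share_by_group())
```

Ranking by need should flag the two groups in roughly equal numbers, while ranking by spending flags far fewer patients from the lower-access group, even though neither score refers to group membership. The bias enters entirely through the choice of target.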

Content moderation on social media platforms adds another dimension. YouTube’s automated systems have incorrectly flagged and demonetized countless videos from legitimate creators, sometimes removing educational content about history or health. Creators often struggle to get human review, and when wrongly penalized, find limited options for compensation or restoring their reputation. These cases demonstrate how current redress mechanisms often fail to provide timely, meaningful remedies when AI systems cause harm.

Building Better Systems: What Needs to Happen Next

The good news? We’re not starting from scratch. Researchers, companies, and policymakers worldwide are developing practical frameworks to make AI systems safer and more accountable.

On the technical front, explainable AI is becoming increasingly sophisticated. These techniques help us peek inside the black box, showing how algorithms reach their decisions. For example, LIME (Local Interpretable Model-Agnostic Explanations) can highlight which factors most influenced a particular outcome, whether that’s a loan denial or a medical diagnosis. Meanwhile, companies are implementing algorithmic audit mechanisms that regularly test AI systems for bias and errors, similar to how buildings undergo safety inspections.
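LIME’s core idea is to probe a model around a single input and fit a simple, proximity-weighted linear model to its responses. The sketch below is a stripped-down, LIME-style local surrogate written from scratch, not the `lime` package’s API: it assumes purely numeric features, Gaussian perturbations, and an exponential distance kernel, and the `loan_model` scorer and its three features are hypothetical. The real library adds steps omitted here, such as discretizing continuous features and selecting a small subset of features to report.

```python
import math
import random


def solve_linear(a, b):
    """Solve a @ x = b with Gaussian elimination and partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def local_surrogate(predict, instance, n_samples=2000, scale=1.0, kernel_width=1.0, seed=0):
    """Explain predict(instance) with a proximity-weighted linear fit.

    Samples perturbed copies of `instance`, weights each by an exponential
    kernel on its distance from `instance`, and solves weighted least squares.
    Returns one coefficient per feature (the intercept is dropped).
    """
    rng = random.Random(seed)
    d = len(instance)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]  # X^T W X
    xtwy = [0.0] * (d + 1)                          # X^T W y
    for _ in range(n_samples):
        z = [x + rng.gauss(0, scale) for x in instance]
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, instance))
        w = math.exp(-dist2 / kernel_width ** 2)
        row = [1.0] + z  # leading 1.0 is the intercept column
        y = predict(z)
        for i in range(d + 1):
            xtwy[i] += w * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += w * row[i] * row[j]
    return solve_linear(xtwx, xtwy)[1:]


def loan_model(x):
    # Hypothetical black box: approval probability from three scaled features.
    income, debt_ratio, years_at_address = x
    score = 1.5 * income - 2.0 * debt_ratio + 0.1 * years_at_address
    return 1 / (1 + math.exp(-score))


weights = local_surrogate(loan_model, [0.2, 0.8, 1.0])
for name, wgt in zip(["income", "debt_ratio", "years_at_address"], weights):
    print(f"{name}: {wgt:+.3f}")
```

The coefficients describe the model’s behavior only near this one applicant; a different applicant, or a different `kernel_width`, can produce a different explanation.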

Organizations are also stepping up with structured governance approaches. Many tech companies have established AI ethics boards that include diverse voices from different backgrounds, not just engineers. These boards review high-stakes AI projects before deployment, asking tough questions about potential harms. Impact assessments, borrowed from environmental regulation, are becoming standard practice: before launching an AI system, teams document who might be affected, what could go wrong, and how they’ll monitor for problems.

On the policy side, emerging frameworks aim to balance innovation with protection. The European Union’s AI Act categorizes systems by risk level, with stricter requirements for high-risk applications like hiring tools or credit scoring. This tiered approach lets low-risk applications flourish while ensuring oversight where it matters most.

Insurance models are evolving too. Just as doctors carry malpractice insurance, some propose requiring AI developers to maintain coverage for algorithmic harm. This creates financial incentives to build safer systems while providing resources for victims seeking compensation.

Perhaps most importantly, standardized certification processes are emerging. These would let consumers and organizations verify that AI systems meet baseline safety and fairness standards, similar to how food safety certifications work. When you see that seal of approval, you know certain checks happened.

The path forward requires collaboration across disciplines: technologists building better tools, organizations implementing responsible practices, and policymakers creating smart regulations. None of these solutions is perfect alone, but together they form a stronger foundation for accountable AI.

The window for shaping responsible AI is closing fast. As artificial intelligence systems become more deeply embedded in healthcare, finance, transportation, and daily decision-making, the stakes for getting ethics and governance right continue to rise. This isn’t merely a technical puzzle for engineers to solve or a legal headache for lawmakers—it’s fundamentally about ensuring that AI technology serves all of humanity fairly and equitably.

Everyone has a role to play. Start by staying informed about AI developments in your community and workplace. Ask questions when you encounter automated systems making important decisions. Does your bank explain how AI evaluates loan applications? Can your hospital clarify how algorithms influence your treatment? When companies and institutions know people are paying attention, they’re more likely to prioritize transparency and fairness.

Support organizations and initiatives advocating for responsible AI development. Whether through professional networks, educational programs, or civic engagement, your voice matters in shaping the future we want to see. The path forward requires collaboration between technologists, policymakers, ethicists, and everyday users. Together, we can build AI systems that enhance human potential while protecting human rights.