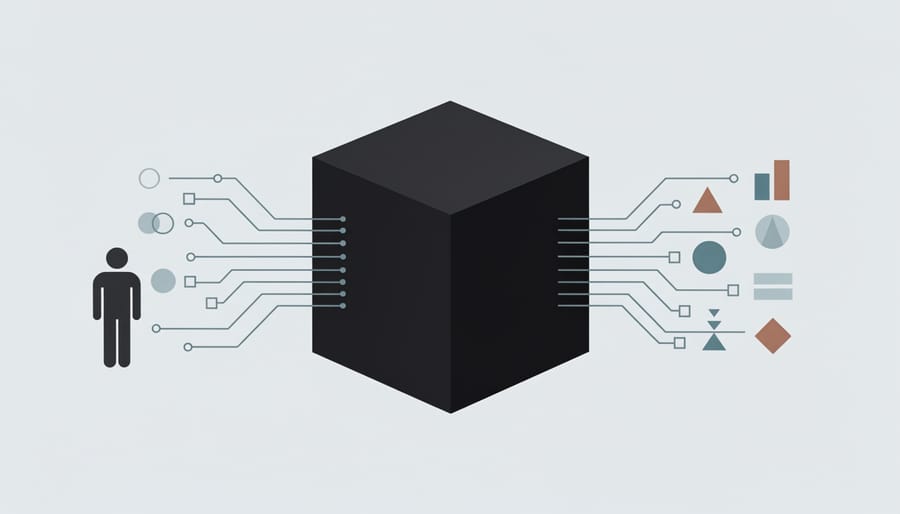

Every time your phone suggests the next word in a text message, denies a loan application, or recommends a video, artificial intelligence makes decisions that shape your daily life. But here’s the unsettling part: most of us have no idea how these systems arrive at their conclusions. They operate as black boxes, processing our data and influencing outcomes while keeping their decision-making logic hidden from view.

Transparency in artificial intelligence means opening those black boxes to reveal how AI systems work, what data they use, and why they make specific decisions. Think of it as the difference between a doctor explaining their diagnosis versus simply handing you a prescription with no explanation. When AI systems are transparent, developers, users, and regulators can examine the logic behind automated decisions, identify potential biases, and hold these systems accountable for their impacts on real people.

This matters more than you might think. AI systems now determine who gets hired, which neighborhoods receive resources, who qualifies for medical treatment, and even who walks free from jail. When a resume-screening algorithm rejects your application, or a predictive policing system targets your community, you deserve to know why. Without transparency, discriminatory patterns can persist undetected, errors go uncorrected, and those affected have no means to challenge unfair outcomes.

The challenge is that making AI transparent isn’t straightforward. Modern machine learning models, especially deep neural networks, are extraordinarily complex. Even their creators sometimes struggle to explain exactly how they reach specific conclusions. Balancing the need for openness with concerns about privacy, security, and competitive advantage creates additional complications.

Understanding AI transparency empowers you to ask better questions about the automated systems shaping your world and demand accountability from those who deploy them.

What AI Transparency Actually Means

The Black Box Problem

Imagine asking a friend for restaurant recommendations, and they reply with the perfect suggestion—but when you ask why they chose it, they simply shrug and say, “I just know.” That’s essentially what happens with many AI systems today. They’re black boxes: complex systems that produce results without revealing how they reached those conclusions.

Here’s how this happens. Modern AI systems, particularly those using deep learning, process information through millions of interconnected calculations. When you upload a photo and AI identifies a cat, that decision passed through countless mathematical operations, one layer of a neural network after another. The AI didn’t follow a simple if-then rule like “if it has whiskers and pointy ears, it’s a cat.” Instead, it recognized patterns across millions of data points in ways that even its creators can’t fully trace back or explain in plain language.

This creates a genuine challenge. When a bank’s AI denies your loan application or a hospital’s system recommends a specific treatment, you deserve to know why. But the AI itself can’t provide a straightforward explanation—it made the decision based on statistical patterns it detected during training, weighing thousands of factors in ways that don’t translate into human reasoning.

The black box problem isn’t about AI keeping secrets intentionally. It’s about the fundamental gap between how machines process information and how humans understand decisions. This gap makes it difficult to verify whether AI systems are making fair, accurate, and ethical choices.

Transparency vs. Explainability: What’s the Difference?

While these terms often appear together, they refer to distinct concepts that work hand-in-hand. Think of transparency as showing your work, while explainability is helping others understand it.

Transparency means making AI systems open to inspection. It’s about revealing what data was used to train a model, who developed it, and what its limitations are. Imagine a company disclosing that their hiring algorithm was trained on data from the past ten years of successful employees.

Explainability, on the other hand, focuses on making the decision-making process understandable. It answers the question: “Why did the AI make this specific decision?” For instance, when a loan application gets rejected, explainability would show that the decision was based on debt-to-income ratio and credit history, not on protected characteristics.

Here’s a practical example: A transparent medical AI would disclose its training data sources and accuracy rates. An explainable medical AI would tell doctors which specific symptoms or test results led to a particular diagnosis recommendation. Ideally, AI systems should be both transparent and explainable, giving users complete visibility into how decisions affecting their lives are made.

Where AI Transparency Becomes Critical

Healthcare Decisions That Affect Lives

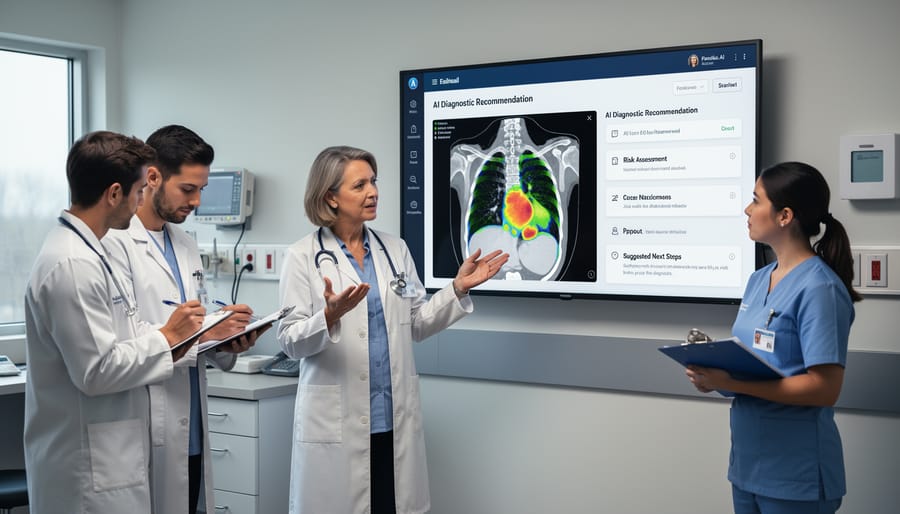

Imagine a radiologist examining a chest X-ray when an AI system flags a suspicious shadow as potential lung cancer. The algorithm recommends immediate biopsy, but here’s the problem: the doctor can’t see why the AI reached this conclusion. Should they follow the recommendation blindly, or trust their own assessment?

This scenario plays out daily in hospitals worldwide. AI in medical diagnosis has become remarkably accurate, sometimes outperforming human doctors in detecting conditions like diabetic retinopathy or skin cancer. However, when these systems operate as black boxes, physicians face an impossible choice.

Transparent AI systems, by contrast, show their reasoning. They might highlight specific features in an image, reference similar cases from training data, or provide confidence scores for their predictions. This visibility allows doctors to verify the AI’s logic, catch potential errors, and combine machine intelligence with human expertise and patient context.

The stakes couldn’t be higher. A misdiagnosis can mean unnecessary surgery or missed treatment windows. That’s why medical professionals don’t just need AI that works; they need AI they can understand, question, and ultimately trust with their patients’ lives.

Financial Systems and Fair Lending

When you apply for a loan or credit card, there’s a good chance AI is making decisions about your financial future. These algorithms analyze hundreds of data points—from your payment history to your employment patterns—to determine your creditworthiness in seconds. But here’s the problem: when you’re rejected, you often receive vague explanations like “insufficient credit history” without understanding the specific factors that led to that decision.

This lack of clarity isn’t just frustrating; it can perpetuate inequality. Studies have revealed instances where AI lending systems unintentionally discriminated against certain demographics, even without explicitly considering race or gender. The algorithms learned biased patterns from historical data that reflected past discriminatory practices.

Financial transparency matters because it empowers you to improve your situation. If an AI denies your loan application because you switched jobs recently, that’s actionable information—you know to wait a few months and reapply. Without transparency, you’re left guessing, unable to address the actual concerns.

Regulators are increasingly demanding explainable AI in finance. The European Union’s General Data Protection Regulation (GDPR), for example, gives people rights around decisions made solely by automated means, including access to meaningful information about the logic involved. This shift recognizes a fundamental principle: when AI controls access to financial opportunities, people deserve to know the rules of the game they’re playing.

Criminal Justice and Bias Prevention

Artificial intelligence is increasingly making decisions that profoundly impact people’s lives through criminal justice applications. From predictive policing systems that determine where officers patrol to algorithms that recommend sentencing lengths, these tools carry serious consequences. The ethical stakes couldn’t be higher when AI influences who gets arrested, detained, or imprisoned.

The problem? Many of these systems operate as black boxes. When an algorithm flags someone as high-risk for reoffending, judges and defendants often cannot see why that decision was made. This opacity becomes particularly troubling when studies reveal that some criminal justice AI systems exhibit racial and socioeconomic bias, perpetuating historical inequalities rather than promoting fairness.

Consider a real-world scenario: an algorithm recommends a longer sentence based on zip code, employment history, and family background. Without transparency, no one can challenge whether these factors unfairly disadvantage certain communities. The person being sentenced deserves to understand how the system reached its conclusion, and society needs assurance that justice is actually being served.

Transparency serves as a critical safeguard here. When we can examine how these algorithms work, we can identify bias, challenge unfair outcomes, and hold systems accountable. This means requiring explainable AI models in criminal justice, conducting regular audits for discriminatory patterns, and ensuring affected individuals have meaningful opportunities to contest algorithmic decisions. The fundamental principle remains simple: systems with such profound power over human freedom must be open to scrutiny.

The Ethical Stakes: Why This Matters to You

Accountability: Who’s Responsible When AI Goes Wrong?

When an AI system denies someone a loan, misdiagnoses a medical condition, or causes a vehicle accident, who takes responsibility? This question becomes increasingly urgent as AI systems make more consequential decisions.

Consider the 2018 incident when an Uber self-driving car struck and killed a pedestrian in Arizona. Investigators struggled to determine fault: Was it the safety driver, the software engineers, the company executives, or the AI itself? Without transparency into how the system processed information that night, assigning accountability when AI fails becomes nearly impossible.

Transparency serves as the foundation for accountability. When we can see inside an AI’s decision-making process, we can identify where things went wrong and who should answer for it. Did developers train the system on biased data? Did executives rush deployment without adequate testing? Did users misapply the technology?

Without transparent AI systems, companies can hide behind complexity, claiming their algorithms are too sophisticated to explain. This shields them from accountability while leaving affected individuals without recourse. Transparency transforms AI from an impenetrable black box into a system where responsibilities can be traced, mistakes can be understood, and improvements can be made. It ensures someone can always answer the question: “Why did this happen?”

Trust: Can You Rely on What You Don’t Understand?

Think about the last time you trusted a recommendation from Netflix or followed directions from Google Maps. You probably didn’t question how these systems reached their conclusions, but what happens when AI makes decisions that truly matter—like whether you qualify for a loan or which medical treatment you should receive?

This is where transparency becomes critical. When people understand how AI systems work, even at a basic level, they’re more likely to trust them. Consider two scenarios: a banking app that simply denies your credit application versus one that explains the key factors influencing the decision, like your credit history and debt-to-income ratio. The second approach builds trust because you can see the reasoning, verify the information, and potentially improve your situation.

However, there’s a paradox here. Research shows that consumers want transparency, yet they often don’t actually read the explanations provided. A fitness tracker might detail exactly how it calculates your sleep quality, but most users simply glance at the score. This creates a challenge: how do we build trustworthy AI systems when users want assurance without necessarily wanting complexity? The answer lies in offering layered transparency—simple summaries for quick understanding, with deeper explanations available for those who want them.
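One way to picture layered transparency is a single explanation function that returns either a one-line summary or the full breakdown. This is only a sketch: the function name `explain`, the level names, and the example factors are assumptions made up for illustration, not any product’s actual interface.

```python
def explain(decision, factors, level="summary"):
    """Return an explanation at the requested depth.

    factors: list of (name, contribution) pairs, most influential first.
    """
    top_name = factors[0][0]
    summary = f"Decision: {decision}. Biggest factor: {top_name}."
    if level == "summary":
        # Quick view for users who only want the headline.
        return summary
    if level == "detailed":
        # Full view: every factor with its signed contribution.
        lines = [summary]
        lines += [f"  {name}: {contribution:+.2f}" for name, contribution in factors]
        return "\n".join(lines)
    raise ValueError(f"unknown level: {level}")


# Hypothetical factors for a denied credit application.
example_factors = [("debt_to_income", -0.42), ("credit_history", -0.10)]
print(explain("denied", example_factors))
print(explain("denied", example_factors, level="detailed"))
```

Most users would only ever see the first call; the second is there for anyone who wants to dig deeper.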

Fairness: Hidden Biases in Invisible Systems

When AI systems operate as black boxes, discriminatory patterns can flourish undetected, affecting real people’s lives in profound ways. These hidden biases in AI become particularly dangerous when we can’t examine how decisions are made.

Consider hiring algorithms. In 2018, Reuters reported that Amazon had scrapped an experimental resume-screening tool after finding that it systematically downgraded applications from women, because it had learned from historical hiring data that reflected past gender imbalances. Without transparency into a system’s decision-making process, bias like this can go unnoticed and perpetuate workplace inequality.

Housing algorithms present similar concerns. Some rental screening tools have been found to disadvantage applicants from certain zip codes, effectively creating digital redlining. Because landlords couldn’t see how these systems reached their conclusions, discriminatory patterns remained invisible until investigative journalists uncovered them.

The problem isn’t just that bias exists—it’s that opaque systems make it nearly impossible to detect, challenge, or correct unfair outcomes. When we can’t peer inside these decision-making processes, we can’t ensure they’re treating everyone fairly. Transparency acts as sunlight, revealing patterns that would otherwise remain hidden in the algorithmic shadows.
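Even when a model’s internals stay hidden, its outcomes can be audited. The sketch below compares selection rates between groups and computes their ratio, a check often associated with the “four-fifths rule” used in US employment guidance. The group labels and the counts are invented for illustration, and a real audit would involve far more than this single number.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, was_selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Made-up outcomes: 50 of 100 selected in group A, 30 of 100 in group B.
decisions = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:
    print("Selection rates differ enough to warrant a closer look.")
```

A low ratio does not prove discrimination on its own, but it tells auditors where to start asking questions.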

How Developers Are Making AI More Transparent

Explainable AI (XAI) Techniques

As AI systems grow more complex, researchers have developed special techniques to peer inside these “black boxes” and understand their decision-making processes. Think of these tools as translators that convert AI’s complex logic into explanations humans can actually understand.

LIME, which stands for Local Interpretable Model-agnostic Explanations, works like a spotlight. It examines individual predictions by asking “What factors influenced this specific decision?” For example, when a loan application gets rejected, LIME can reveal which factors—like credit score or income—weighed most heavily in that particular case.
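The core idea behind LIME can be sketched in a few dozen lines: nudge the inputs around one specific case, watch how the black box responds, and fit a simple weighted linear model to those nearby responses. This is a simplified illustration and not the LIME library itself. The `black_box` function, its coefficients, the applicant’s values, and the perturbation scales are all assumptions made up for the example.

```python
import math
import random


def black_box(credit_score, dti):
    """Stand-in for an opaque model: returns an approval probability."""
    z = 0.03 * (credit_score - 650) - 8.0 * (dti - 0.35)
    return 1.0 / (1.0 + math.exp(-z))


def solve(a, b):
    """Solve the linear system a @ x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def explain_locally(instance, scales, n_samples=500, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance.

    Returns one weight per feature, in units of "one perturbation scale".
    """
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, 1.0) for _ in instance]
        perturbed = [x + o * s for x, o, s in zip(instance, offsets, scales)]
        rows.append([1.0] + offsets)  # leading 1.0 is the intercept column
        targets.append(black_box(*perturbed))
        # Samples closer to the original instance count for more.
        weights.append(math.exp(-sum(o * o for o in offsets) / 2.0))
    k = len(instance) + 1
    # Weighted least squares via the normal equations (X^T W X) c = X^T W y.
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights))
             for j in range(k)] for i in range(k)]
    xtwy = [sum(w * r[i] * y for r, w, y in zip(rows, weights, targets))
            for i in range(k)]
    return solve(xtwx, xtwy)[1:]  # drop the intercept


# Hypothetical rejected applicant: credit score 620, debt-to-income 0.45.
local_weights = explain_locally([620.0, 0.45], scales=[20.0, 0.05])
print(dict(zip(["credit_score", "debt_to_income"], local_weights)))
```

A positive weight means that raising the feature would have pushed this particular decision toward approval; a negative weight means the opposite. The weights describe only the neighborhood of this one applicant, which is what “local” means in LIME’s name.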

SHAP (SHapley Additive exPlanations) builds on Shapley values from cooperative game theory: it measures how much each feature contributes to a prediction, relative to a baseline. Imagine you’re baking a cake and want to know how much each ingredient affects the final taste. SHAP does something similar for AI decisions, assigning each input a share of the credit for the final output.
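For a model with only a couple of inputs, Shapley values can be computed exactly by averaging each feature’s marginal contribution over every possible order of adding features. The sketch below does that for a made-up scoring function; the function, the feature names, and the baseline of zero are assumptions for illustration. The SHAP library relies on faster approximations, since this brute-force approach grows factorially with the number of features.

```python
from itertools import permutations


def model(features):
    """Hypothetical scoring function with an interaction between inputs."""
    return (2.0 * features["income"]
            + 1.0 * features["credit"]
            + 0.5 * features["income"] * features["credit"])


def shapley_values(model, instance, baseline):
    """Exact Shapley values: average marginal contribution over all orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)  # start with every feature at its baseline
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # switch this feature to its real value
            value = model(current)
            totals[name] += value - previous
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}


instance = {"income": 2.0, "credit": 4.0}
baseline = {"income": 0.0, "credit": 0.0}
values = shapley_values(model, instance, baseline)
print(values)  # {'income': 6.0, 'credit': 6.0}
```

The two scores add up to 12.0, which is exactly the gap between the model’s output for this instance and its output at the baseline. That additive property is what lets the contributions be read as shares of a single prediction.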

These techniques are becoming essential tools across industries. In healthcare, they help doctors understand why an AI system flagged a certain scan as concerning. In hiring, they reveal what factors an AI considers when screening resumes, helping companies identify potential biases.

The beauty of XAI techniques is that they don’t require you to be a data scientist to benefit from them—they’re designed to make AI’s reasoning accessible to everyone affected by its decisions.

Model Cards and Documentation

Just as packaged foods display nutrition labels telling you what’s inside, AI models now have something similar: model cards. These standardized documents serve as transparency reports, revealing crucial information about how an AI system was built and what it can—and cannot—do.

Think of a model card as an AI system’s resume and disclaimer combined. It typically includes details about the training data used (was it scraped from the internet or carefully curated?), the model’s intended purpose (diagnosing medical images versus generating cat pictures), known limitations (struggles with certain accents or lighting conditions), and potential biases discovered during testing.

For example, a facial recognition model’s card might reveal that it was trained primarily on well-lit photos of adults, meaning it performs poorly on children or in low-light settings. This information helps users understand when the system is appropriate to use and when it might fail.
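In code, a model card can be as simple as a structured record that travels with the model. The sketch below is a minimal illustration using the kinds of fields described above; the class name, field names, and example entries are assumptions, and it does not follow the schema of any particular model card toolkit.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    name: str
    intended_use: str
    training_data: str
    limitations: list = field(default_factory=list)
    known_biases: list = field(default_factory=list)

    def render(self):
        """Produce a plain-text card that can be published alongside the model."""
        lines = [
            f"Model: {self.name}",
            f"Intended use: {self.intended_use}",
            f"Training data: {self.training_data}",
            "Known limitations:",
        ]
        lines += [f"  - {item}" for item in self.limitations]
        lines.append("Known biases:")
        lines += [f"  - {item}" for item in self.known_biases]
        return "\n".join(lines)


# Hypothetical card for the facial recognition example.
card = ModelCard(
    name="face-matcher (hypothetical)",
    intended_use="Matching well-lit photos of adults",
    training_data="Primarily well-lit photos of adults",
    limitations=["Performs poorly in low-light settings"],
    known_biases=["Performs poorly on children"],
)
print(card.render())
```

Keeping the card as data rather than free text makes it easy to check that required sections are filled in before a model ships.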

Major tech companies like Google and Microsoft have adopted model cards, making them publicly available for their AI products. This practice empowers everyone—from developers integrating AI into apps to regulators establishing safety standards—to make informed decisions. By documenting an AI model’s strengths, weaknesses, and quirks upfront, model cards transform mysterious black boxes into understandable tools with clear instructions and warnings attached.

Auditable AI Systems

Imagine if every decision an AI system made came with a detailed receipt—that’s essentially what auditable AI systems provide. These systems automatically create comprehensive logs that track every step of their decision-making process, from the data they analyzed to the specific factors that influenced their final output.

Think of it like an airplane’s flight recorder, the one kind of “black box” that is built to be opened. When something goes wrong, or even when things go right, investigators can review exactly what happened and why. Major financial institutions are now implementing these systems for loan approval processes. If someone gets denied credit, auditors can trace back through the AI’s reasoning to check whether any discriminatory patterns emerged.

Tech giants like Google and Microsoft have developed audit trail systems that timestamp decisions, record which data points were weighted most heavily, and flag unusual patterns. This becomes particularly valuable in healthcare, where doctors need to understand why an AI suggested a specific diagnosis before acting on that recommendation.
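A minimal sketch of what such an audit trail might look like: each decision is stored with a timestamp, the inputs, and the most influential factors. The hash chaining that makes later edits detectable is one possible design choice added here as an assumption; the class, field names, and example values are hypothetical and do not describe any specific company’s system.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only decision log in which each entry commits to the previous one."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash everything except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def record(self, model_version, inputs, decision, top_factors):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "decision": decision,
            "top_factors": top_factors,
            "previous_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry has been altered or reordered."""
        previous_hash = ""
        for entry in self.entries:
            if entry["previous_hash"] != previous_hash:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            previous_hash = entry["hash"]
        return True


log = AuditLog()
log.record("v1", {"credit_score": 620, "dti": 0.45}, "denied", ["dti", "credit_score"])
log.record("v1", {"credit_score": 710, "dti": 0.20}, "approved", ["credit_score"])
print(log.verify())  # True
```

If anyone later edits a stored decision, `verify()` returns `False`, because the entry no longer matches the hash that was recorded when the decision was made.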

The beauty of auditable systems is their preventive power. When organizations know every AI decision can be reviewed, they’re more motivated to ensure their systems work fairly from the start. It’s accountability through transparency—creating a permanent record that protects both the people affected by AI decisions and the organizations deploying these powerful tools.

The Tradeoffs: Why Perfect Transparency Is Complicated

Performance vs. Interpretability

Here’s a challenge that keeps AI developers up at night: the most accurate AI models often act like brilliant but secretive consultants who give you the right answer but won’t explain their thinking.

Deep neural networks, for instance, can identify diseases in medical scans with remarkable precision, sometimes outperforming human doctors. Yet these same systems process information through millions of interconnected calculations, making it nearly impossible to trace exactly why they flagged a particular shadow as concerning. It’s like having a master detective who solves cases flawlessly but can’t tell you how they connected the dots.

On the flip side, simpler models like decision trees are wonderfully transparent. You can follow their logic step-by-step, seeing exactly which factors influenced each decision. However, they typically don’t match the accuracy of their more complex counterparts.
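To see what “following the logic step-by-step” means in practice, here is a tiny hand-written decision tree that returns both its decision and the exact path it took to get there. The features, thresholds, and outcomes are invented for illustration; a real tree would be learned from data.

```python
# Hypothetical loan tree: each internal node tests one feature against a threshold.
TREE = {
    "feature": "debt_to_income",
    "threshold": 0.40,
    "below": {
        "feature": "credit_score",
        "threshold": 640,
        "below": {"decision": "deny"},
        "at_or_above": {"decision": "approve"},
    },
    "at_or_above": {"decision": "deny"},
}


def decide(tree, applicant):
    """Walk the tree and return (decision, list of the tests that were applied)."""
    path = []
    node = tree
    while "decision" not in node:
        value = applicant[node["feature"]]
        branch = "below" if value < node["threshold"] else "at_or_above"
        comparison = "<" if branch == "below" else ">="
        path.append(f'{node["feature"]} = {value} {comparison} {node["threshold"]}')
        node = node[branch]
    return node["decision"], path


decision, path = decide(TREE, {"debt_to_income": 0.30, "credit_score": 700})
print(decision)
for step in path:
    print("  because", step)
```

Every decision comes with a short list of comparisons that anyone can read and challenge, which is exactly what a deep neural network cannot offer out of the box.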

This creates a genuine dilemma in high-stakes situations. Would you prefer a black-box AI that’s 95% accurate in detecting fraud, or a transparent system that’s 85% accurate but shows its work? In healthcare, finance, and criminal justice, this isn’t just a technical trade-off; it’s a question that affects real lives.

The good news? Researchers are developing hybrid approaches that aim to capture both accuracy and explainability, though finding that sweet spot remains an ongoing challenge.

Proprietary Secrets and Competitive Advantage

While transparency sounds ideal in theory, companies developing AI systems face a genuine dilemma: revealing how their algorithms work could mean giving away the secret sauce that sets them apart from competitors. Think of it like a chef being asked to share their signature recipe in detail—doing so might compromise what makes their restaurant special.

Major tech companies invest billions in developing sophisticated AI systems. When Google creates a breakthrough search algorithm or when a financial firm develops a cutting-edge fraud detection system, these innovations represent years of research and competitive advantage. Full transparency could allow competitors to replicate these systems quickly, potentially undermining the original investment.

This tension between openness and proprietary protection creates real challenges. A company might want to explain how their AI hiring tool works to prove it’s fair, but revealing the exact formula could enable other companies to copy it or even game the system. Some businesses argue they can demonstrate their AI is trustworthy through third-party audits and outcome-based transparency without exposing underlying code.

The key question becomes: how much transparency is enough to ensure accountability without destroying innovation incentives? Finding this balance remains one of the most debated aspects of responsible AI development.

What You Can Do Right Now

Questions to Ask About AI Systems You Use

Before using any AI-powered product or service, arm yourself with these essential questions to evaluate its transparency:

How does this AI make decisions? Request clear explanations of the system’s logic. A transparent AI provider should offer accessible documentation about how their system processes information and reaches conclusions.

What data does it collect about me? Understanding data collection practices is fundamental. Ask what information the system gathers, how long it’s stored, and whether it’s shared with third parties.

Can I see why the AI made a specific recommendation? Whether it’s a content suggestion or a loan decision, you deserve to know the reasoning. Look for systems that provide explanations for individual outcomes.

Who built this AI, and what were their goals? Knowing the developers’ intentions helps you assess potential biases or conflicts of interest.

How is the system tested for fairness? Inquire about bias testing, diverse dataset usage, and measures taken to ensure equitable treatment across different user groups.

What happens when the AI makes mistakes? Transparent systems have clear processes for reporting errors, appealing decisions, and implementing corrections.

Is there human oversight? Determine whether humans monitor the system’s performance and can intervene when necessary.

These questions empower you to make informed choices about the AI systems you trust with your data and decisions.

Resources for Learning More

Ready to explore AI transparency further? Here are some carefully selected resources to continue your learning journey.

Start with the Partnership on AI’s transparency guidelines, which offer practical frameworks for understanding how organizations implement transparent AI systems. The Stanford Human-Centered AI Institute provides accessible research papers that break down complex transparency concepts into digestible insights.

For those interested in policy and regulation, the European Commission’s AI Act documentation explains how governments are approaching transparency requirements. MIT Technology Review regularly publishes reader-friendly articles examining real-world transparency challenges and solutions.

The AI Ethics Lab offers free courses that dive into transparency alongside other critical ethical considerations. Their interactive modules use relatable examples to illustrate key concepts.

For hands-on learners, Google’s Model Cards toolkit demonstrates how transparency works in practice by showing how to document AI system capabilities and limitations. The AI Incident Database catalogues real cases where lack of transparency led to problems, providing valuable lessons from actual implementations.

OpenAI’s blog frequently discusses their approach to transparency, offering insider perspectives on the challenges technology companies face when making AI systems more understandable and accountable to users.

AI transparency isn’t just another checkbox on a technical specification sheet—it’s about ensuring that the technology shaping our world remains accountable to the people it serves. As artificial intelligence becomes embedded in decisions affecting healthcare, education, employment, and justice, our ability to understand and question these systems becomes a fundamental human right.

Think of transparency as the bridge between technological innovation and public trust. Without it, we’re essentially asking society to accept life-altering decisions from systems they can’t understand, question, or challenge. This isn’t sustainable, and more importantly, it isn’t right.

The good news? We’re seeing momentum toward more responsible AI development. Organizations worldwide are adopting ethical AI frameworks, regulators are establishing clearer guidelines, and developers are creating tools that make AI behavior more interpretable. These aren’t just feel-good initiatives—they’re practical steps toward technology that genuinely serves humanity.

Looking ahead, the future of AI depends on maintaining this dialogue between innovation and accountability. As these systems grow more sophisticated, our commitment to transparency must grow equally strong. The conversation doesn’t end here, and your engagement matters. Stay curious, ask questions when AI affects your life, and demand clarity from the systems that influence your world. After all, technology built for people should be understandable by people. The future of ethical AI isn’t just in the hands of developers and policymakers—it’s in yours too.