When a biased hiring algorithm screens out qualified candidates based on gender, when a facial recognition system wrongly identifies an innocent person as a criminal, or when an automated loan approval system denies credit without clear explanation—who takes responsibility? These aren’t hypothetical scenarios. They’re happening now, affecting real people’s careers, freedom, and financial futures. Yet when things go wrong, accountability often vanishes into a maze of developers, deployers, data providers, and corporate entities, each pointing fingers elsewhere.

AI accountability means establishing clear lines of responsibility when artificial intelligence systems cause harm. It’s the difference between a victim receiving redress and being told “the algorithm decided” with no recourse. As AI systems increasingly make decisions that shape our lives—determining who gets hired, receives medical treatment, or qualifies for loans—the question of who answers for mistakes becomes urgent.

This isn’t just a philosophical debate. Organizations worldwide are grappling with practical questions: Should the company that built the AI model be liable, or the one that deployed it? What happens when training data itself is flawed? How can someone challenge an automated decision they don’t understand? Without clear accountability frameworks, we risk creating a world where powerful AI systems operate beyond meaningful oversight, and those harmed have nowhere to turn.

Understanding AI accountability requires examining three interconnected elements: defining what responsibility means in automated decision-making, identifying who should be held accountable across the AI development chain, and establishing practical mechanisms for redress when systems fail. Together, these components form the foundation for fair, trustworthy AI deployment.

What AI Accountability Actually Means

Why Traditional Rules Don’t Work for AI

Traditional accountability frameworks were built for a world of human decision-makers, but AI systems operate fundamentally differently, creating gaps that existing rules struggle to address.

The first major challenge is what experts call the “black box problem.” Imagine trying to understand why your GPS suddenly rerouted you through a residential neighborhood instead of the highway. Even the engineers who built the system might not be able to pinpoint exactly why the algorithm made that specific choice at that moment. Modern AI systems, particularly those using deep learning, make decisions through millions of calculations across neural networks. Unlike a simple rule-based program where you can trace each step, these systems learn patterns from data in ways that even their creators cannot fully explain. When a loan application gets denied or a job candidate gets filtered out by AI, the affected person deserves to know why—but the system itself may not be able to provide a clear, human-understandable explanation.

The second challenge involves distributed responsibility. When something goes wrong with AI, who should be held accountable? Consider a self-driving car accident. Is it the fault of the company that manufactured the vehicle, the team that trained the AI model, the engineers who wrote the code, the city that provided inadequate road markings, or the human who was supposed to be monitoring the system? Traditional liability frameworks assume a clear chain of responsibility, but AI involves multiple parties across development, deployment, and use.

Finally, AI operates at a speed and scale that traditional oversight cannot match. A single algorithm can make millions of decisions per day, affecting thousands of people at once. By the time regulators identify a problem, the harm may already be widespread, reaching communities across countries and demographics at a scale that human decision-makers would need years to match.

The Three Pillars: Accountability, Liability, and Redress

Accountability: Tracking Who’s Responsible

When an AI system makes a mistake, figuring out who should answer for it isn’t straightforward. Unlike traditional products where liability chains are well-established, AI accountability involves multiple players at different stages, each contributing to how the system ultimately behaves.

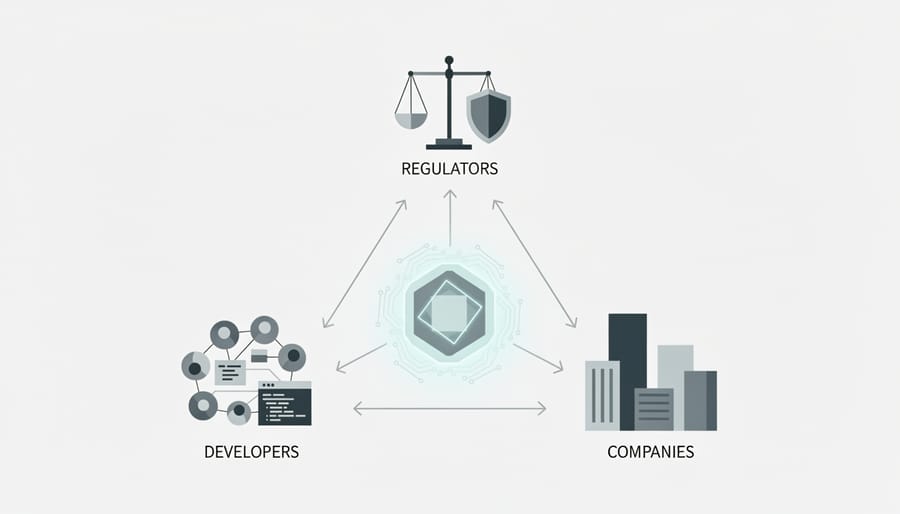

Think of AI accountability as a relay race where the baton passes through several hands. First, there are the developers who write the algorithms and make critical design choices. Then come the data scientists who train the model with specific datasets. Next are the companies that deploy these systems, often customizing them for particular uses. Finally, there are the end-users who interact with the AI and make decisions based on its outputs.

Consider the case of a hiring algorithm that systematically rejected qualified female candidates. Who’s responsible? The engineers who built the base model? The company that trained it on historically biased hiring data? The HR department that chose to use it without adequate testing? Or the managers who blindly followed its recommendations? In reality, accountability is distributed across this entire chain.

Real-world examples reveal this complexity. When Amazon discovered that its recruiting tool showed bias against women, the company took responsibility by shutting it down, a clear instance of corporate accountability. However, the bias originated from training data reflecting past hiring patterns, highlighting how design choices echo through the system’s lifecycle.

Medical AI provides another compelling example. When an algorithm misdiagnoses a patient, the accountability puzzle includes the software developers, the hospital that purchased the system, and the doctors who relied on its assessment. Legal frameworks increasingly recognize this shared responsibility, requiring transparency about how these systems work and establishing clear oversight protocols.

The emerging consensus suggests AI itself cannot be held accountable because it lacks consciousness and intent. Instead, accountability rests with humans who create, deploy, and use these systems. This means developers must document their processes, companies must validate AI performance across diverse scenarios, and users must maintain appropriate oversight. The key is creating clear accountability trails that track decisions from conception through deployment, ensuring someone can always answer when things go wrong.
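One way to picture an accountability trail is as a record stored alongside every automated decision. The sketch below is illustrative only: the `DecisionRecord` fields, the `responsible_parties` helper, and the example values are assumptions made for this article, not a standard schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional
import json


@dataclass
class DecisionRecord:
    """One entry in a hypothetical accountability trail."""
    decision_id: str
    model_version: str           # which model produced the output
    deployed_by: str             # organization that put the model into use
    reviewed_by: Optional[str]   # human who signed off, if anyone did
    outcome: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def responsible_parties(record: DecisionRecord) -> list[str]:
    """List everyone who can be asked to answer for this decision."""
    parties = [
        f"model:{record.model_version}",
        f"deployer:{record.deployed_by}",
    ]
    if record.reviewed_by:
        parties.append(f"reviewer:{record.reviewed_by}")
    return parties


record = DecisionRecord(
    decision_id="loan-0001",
    model_version="credit-model-v3",
    deployed_by="Example Bank",
    reviewed_by=None,  # no human looked at this decision
    outcome="denied",
)
print(json.dumps(asdict(record), indent=2))
print(responsible_parties(record))
```

A record like this does not settle who is at fault, but it does mean the question "who touched this decision?" has an answer on file.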

Liability: When Things Go Wrong, Who’s at Fault?

When a self-driving car crashes, a medical diagnosis algorithm recommends the wrong treatment, or a hiring AI discriminates against qualified candidates, the immediate question becomes: who pays? More importantly, who should be held legally responsible? These aren’t merely theoretical concerns. Real people face real consequences, and our existing legal frameworks are struggling to keep up.

Traditional product liability law was designed for physical goods with clear manufacturers. If your toaster catches fire, you sue the company that made it. But AI systems blur these lines dramatically. Consider an autonomous vehicle accident. Is the car manufacturer liable? The software developer? The company that trained the AI model? The service that provided the training data? Or perhaps the owner who failed to install an update?

Current legal approaches typically fall into two categories. Product liability treats AI as a defective product, holding manufacturers responsible for design flaws or inadequate warnings. This works reasonably well for embedded AI in physical products like vehicles or medical devices. Service liability, on the other hand, applies when AI operates more like a tool or service, similar to how we treat professional negligence. A hospital using an AI diagnostic tool, for instance, might face liability for how it implemented and relied on that system.

The challenge intensifies with AI’s unique characteristics. Unlike traditional products, AI systems learn and evolve after deployment. An algorithm might perform perfectly during testing but produce harmful results months later after learning from new data. Who’s responsible for that drift?
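Drift of this kind can at least be watched for. The following is a minimal sketch, assuming the deployer logs each decision as approved or not and compares the recent approval rate against the rate seen during testing. The `has_drifted` name and the 10-percentage-point tolerance are arbitrary choices for illustration, and real monitoring would usually track inputs and error rates as well.

```python
def approval_rate(decisions: list[bool]) -> float:
    """Share of decisions that were approvals."""
    return sum(decisions) / len(decisions)


def has_drifted(baseline: list[bool], recent: list[bool],
                tolerance: float = 0.10) -> bool:
    """Flag when the recent approval rate moves away from the baseline.

    `tolerance` is an assumed threshold, not an industry standard.
    """
    return abs(approval_rate(recent) - approval_rate(baseline)) > tolerance


# Hypothetical logs: 70% approvals at test time, 50% a few months later.
baseline = [True] * 70 + [False] * 30
recent = [True] * 50 + [False] * 50

if has_drifted(baseline, recent):
    print("Approval rate has shifted; pause and investigate before continuing.")
```

A check like this also helps with the responsibility question: if the deployer was alerted to the shift and kept the system running, that is a documented choice.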

In Europe, the European Commission proposed an AI Liability Directive in 2022 that was designed to ease the burden of proof for victims. Instead of having to understand complex algorithms, injured parties could access certain information about how the AI made its decision. Some experts propose strict liability for high-risk AI applications, meaning companies are responsible regardless of fault, similar to how we handle certain hazardous activities.

The emerging consensus suggests a shared responsibility model. Developers bear responsibility for robust testing and documentation. Deployers must ensure proper implementation and monitoring. Users should understand system limitations. This layered approach recognizes that AI accountability rarely points to a single culprit, but rather requires multiple stakeholders taking appropriate precautions at each stage of the AI lifecycle.

Redress: Making Things Right for Those Harmed

When AI systems cause harm, the question isn’t just who’s responsible, but how to make things right for those affected. Redress mechanisms aim to compensate victims, correct faulty systems, and prevent future incidents. However, obtaining redress for AI-caused harm presents unique challenges that traditional legal frameworks struggle to address.

Monetary compensation remains the most straightforward form of redress. When a hiring algorithm discriminates against qualified candidates, when a medical AI misdiagnoses a condition, or when an autonomous vehicle causes an accident, financial damages can help offset tangible losses like lost wages, medical bills, or property damage. Some policymakers have also proposed mandatory AI liability insurance, similar to auto insurance, so that funds are available when harm occurs.

Beyond money, algorithmic corrections address the root cause. If an AI system consistently produces biased outcomes, redress might involve retraining the model with more representative data, adjusting decision thresholds, or implementing additional safeguards. When Amazon discovered its recruiting algorithm discriminated against women, the appropriate redress wasn’t just compensating affected applicants but fundamentally redesigning the system and reviewing hiring practices.

Systemic reforms represent the broadest form of redress. These might include mandatory audits, transparency requirements, or industry-wide standards that prevent similar harms across multiple organizations. After facial recognition systems demonstrated higher error rates for people of color, advocacy led to moratoria on certain uses and stricter accuracy requirements.

The challenge lies in proving AI-caused harm. Unlike a car accident with clear physical evidence, demonstrating that an algorithm denied you a loan unfairly or that an AI system influenced a negative outcome requires access to proprietary systems, technical expertise, and often the cooperation of companies with competing interests. Many victims never realize AI played a role in decisions affecting them.

This evidence gap has sparked calls for explainability rights, allowing affected individuals to understand how AI systems reached decisions about them, and audit rights, enabling independent experts to examine algorithms for flaws. Without these mechanisms, redress remains frustratingly out of reach for many harmed by AI systems.

Real-World Examples Where Accountability Failed

When accountability structures fail in AI systems, real people face real consequences. Let’s examine several cases where gaps in responsibility led to significant harm.

In 2020, Robert Williams of Detroit became the first publicly known person in the United States to be wrongfully arrested because of a facial recognition error. Police relied on a flawed match from surveillance footage, leading to Williams’ arrest in front of his family and detention for 30 hours. The AI system had misidentified him, but no one questioned the technology’s accuracy before making the arrest. What should have happened: Officers should have treated the AI match as one piece of evidence requiring human verification, not definitive proof. The department needed clear protocols requiring additional corroborating evidence before making arrests based on algorithmic outputs.

Amazon’s experimental AI recruiting tool, built starting in 2014 and abandoned by 2017, systematically discriminated against women. The system learned from historical hiring data that predominantly featured male candidates, teaching itself that male applicants were preferable. It downgraded resumes containing words like “women’s” and penalized graduates of two all-women’s colleges. This algorithmic bias could have affected countless careers before Amazon scrapped the program. What should have happened: Bias audits should have been conducted before deployment, using diverse testing datasets, followed by ongoing monitoring for discriminatory patterns. Clear accountability chains would have ensured someone took responsibility for vetting the system’s fairness.

In healthcare, IBM’s Watson for Oncology recommended unsafe cancer treatments in multiple cases. Internal documents revealed the AI suggested giving a patient with severe bleeding a drug that could cause fatal hemorrhaging. The system was trained on hypothetical cases rather than real patient data, creating dangerous gaps in its recommendations. What should have happened: Medical AI should undergo rigorous clinical trials similar to pharmaceutical testing, with clear liability frameworks holding developers and healthcare providers jointly accountable for validating recommendations before patient care.

The 2018 Uber autonomous vehicle crash in Tempe, Arizona, killed pedestrian Elaine Herzberg when the self-driving system failed to properly classify her as she crossed the street. Investigation revealed the backup safety driver was watching a video on their phone, highlighting blurred lines of responsibility between human operators and autonomous systems. What should have happened: Clear accountability frameworks should have defined whether Uber, the safety driver, or the AI developers bore primary responsibility, with transparent testing standards required before public road deployment.

Who Should Be Held Accountable? The Key Players

AI Developers and Data Scientists

AI developers and data scientists sit at the foundation of AI accountability because they make crucial decisions during system design and development. Think of them as architects who must build with both innovation and safety in mind.

Their responsibilities begin with algorithm design choices. When creating a machine learning model, developers decide which data to use, how to weight different factors, and what outcomes to optimize for. A credit scoring algorithm, for example, might inadvertently discriminate if developers don’t carefully consider how historical lending biases appear in training data.

Bias testing represents another critical duty. Before deploying any AI system, developers must rigorously test for unfair outcomes across different demographic groups. This means running the algorithm through diverse scenarios and examining whether it treats everyone equitably. A facial recognition system tested primarily on one ethnic group might fail dramatically when used more broadly.
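A basic version of this kind of test compares how often each group receives a favorable outcome. The sketch below uses the "four-fifths rule" from US employment guidelines as the yardstick: if the lowest group's selection rate is below 80% of the highest group's, the result deserves a closer look. The function names and the sample data are made up for illustration, and a ratio is only a screening signal, not proof of discrimination.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Compute the favorable-outcome rate per group.

    `outcomes` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no disparity to measure
    return min(rates.values()) / highest


# Hypothetical screening results: group A selected 60/100, group B 30/100.
outcomes = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)

rates = selection_rates(outcomes)
ratio = disparate_impact_ratio(rates)
if ratio < 0.8:
    print(f"Ratio {ratio:.2f} is below 0.8; investigate before deployment.")
```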

Documentation practices round out their accountability obligations. Developers should maintain clear records explaining how their algorithms work, what data was used, known limitations, and testing results. This transparency becomes essential when problems arise and stakeholders need to understand what went wrong.

Many organizations now require developers to complete fairness impact assessments and maintain model cards that detail system capabilities and constraints, creating an accountability trail from conception through deployment.
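A model card can be as simple as a structured record that travels with the model. Here is a rough sketch, assuming a small set of sections; the field names and the `missing_sections` check are illustrative choices rather than an official template.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """Illustrative model card; the field names are assumptions."""
    name: str
    intended_use: str
    training_data: str
    known_limitations: list[str] = field(default_factory=list)
    test_results: dict[str, float] = field(default_factory=dict)


def missing_sections(card: ModelCard) -> list[str]:
    """Return the names of sections that were left empty."""
    sections = ("intended_use", "training_data",
                "known_limitations", "test_results")
    return [name for name in sections if not getattr(card, name)]


card = ModelCard(
    name="resume-screener-v1",
    intended_use="Rank applications for recruiter review, not automatic rejection",
    training_data="Ten years of historical hiring decisions",
)

# An organization could refuse to deploy until this list is empty.
print(missing_sections(card))
```

Blocking deployment on incomplete documentation is one way to make the paper trail a requirement rather than an afterthought.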

Companies Deploying AI Systems

When companies deploy AI systems, they shoulder significant responsibility for how these technologies affect real people. Unlike individual researchers or third-party auditors, companies make the final call on whether an AI system goes live, who uses it, and how it operates in the real world.

Consider a retail company implementing AI-powered hiring software. That company must ensure the system doesn’t discriminate against qualified candidates, even if they purchased the software from an external vendor. This responsibility includes thorough testing before launch, continuous monitoring during operation, and swift action when problems emerge.

Transparency forms another crucial pillar of corporate accountability. Companies should clearly inform users when AI makes decisions affecting them, whether that’s a loan application, content recommendation, or insurance premium calculation. Many organizations now publish AI ethics statements or impact assessments, explaining their systems’ purposes and limitations.

Corporate oversight also means establishing clear chains of command. When something goes wrong, someone within the company must have authority to pause or shut down problematic systems. Forward-thinking businesses create dedicated AI ethics teams, conduct regular audits, and maintain channels for users to report concerns or appeal automated decisions. This proactive approach not only protects customers but also helps companies avoid costly legal battles and reputational damage down the line.

Regulators and Policymakers

Governments play a crucial role in establishing guardrails that ensure AI systems serve the public good without causing unjustified harm. While innovation moves quickly, regulatory frameworks provide the structural foundation for accountability by setting clear standards and enforcement mechanisms.

Consider the European Union’s AI Act, which categorizes AI systems by risk level and imposes stricter requirements on high-risk applications like facial recognition or credit scoring. This approach recognizes that not all AI needs the same level of oversight—a music recommendation algorithm requires less scrutiny than one making medical diagnoses.

Effective regulation balances protection with innovation. For example, requiring companies to document their AI development processes creates transparency without stifling creativity. Regulators also mandate impact assessments before deploying sensitive applications, similar to how environmental studies precede construction projects.

Enforcement matters too. Agencies need technical expertise to audit AI systems and investigate complaints. Some countries have established dedicated AI oversight bodies with authority to impose penalties for violations. Without proper enforcement, even well-designed rules become mere suggestions, leaving vulnerable populations unprotected when automated systems make consequential decisions about their lives.

Current Approaches to AI Accountability

Algorithmic Impact Assessments

Before an AI system makes its first real-world decision, it should undergo rigorous evaluation to catch potential problems. Think of Algorithmic Impact Assessments (AIAs) as safety inspections for AI—similar to how buildings need permits before construction or drugs require clinical trials before reaching pharmacies.

These assessments examine how an AI system might affect different groups of people. For example, when a city considers deploying AI-powered surveillance in public spaces, an AIA would evaluate privacy risks, potential discrimination against certain communities, and whether the system aligns with civil liberties. This pre-deployment checkpoint creates a documented trail of accountability.

The process typically involves stress-testing the algorithm with diverse datasets, consulting affected communities, and creating mitigation plans for identified risks. Canada’s Directive on Automated Decision-Making pioneered this approach in government services, requiring agencies to assess impact levels before deployment.

AIAs establish clear responsibility by documenting who reviewed the system, what risks were found, and what safeguards were implemented. This documentation becomes crucial if problems emerge later—it shows whether organizations acted diligently or ignored warning signs. By identifying issues early, AIAs help prevent harm rather than just responding to it after the fact.

Explainable AI and Transparency Requirements

Imagine asking an AI system why your loan application was rejected, only to hear “the algorithm decided.” This black-box problem makes accountability nearly impossible. How can we hold anyone responsible for an AI system if we can’t understand how it reaches decisions?

The push for Explainable AI (XAI) addresses this challenge head-on. These systems are designed to provide clear, understandable reasons for their outputs. For instance, a healthcare AI diagnosing patients might highlight which symptoms influenced its recommendation, allowing doctors to verify the logic and catch potential errors.

Transparency requirements are gaining legal teeth worldwide. The EU’s AI Act requires high-risk AI systems to be transparent enough that the organizations deploying them can interpret their outputs. Similarly, organizations are incorporating ethical decision-making frameworks that prioritize interpretability.

Consider credit scoring: traditional AI might simply output a number, but explainable systems show which factors (payment history, credit utilization, length of credit) contributed most to the score. This transparency enables applicants to understand rejections and provides a foundation for challenging unfair decisions.
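For a simple additive model, that kind of explanation falls out almost for free: each factor's contribution is its weight times its value. The sketch below assumes a toy linear score with invented weights and inputs scaled from 0 to 1. Real credit models are more complex, and deep learning models need dedicated explanation techniques rather than this direct readout.

```python
# Hypothetical weights for a toy linear score; not taken from any real lender.
WEIGHTS = {
    "payment_history": 40,
    "credit_utilization": -30,   # higher utilization lowers the score
    "credit_length": 20,
}


def explain_score(applicant: dict[str, float]):
    """Return the score and each factor's contribution, largest first."""
    contributions = {
        name: weight * applicant[name] for name, weight in WEIGHTS.items()
    }
    score = sum(contributions.values())
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return score, ranked


applicant = {
    "payment_history": 0.75,
    "credit_utilization": 0.5,
    "credit_length": 0.25,
}

score, ranked = explain_score(applicant)
print(f"Score: {score}")
for name, contribution in ranked:
    print(f"  {name}: {contribution:+.1f}")
```

An applicant who sees that credit utilization pulled the score down has something concrete to act on, or to dispute if the underlying data is wrong.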

The trade-off? Sometimes the most accurate AI models are the least explainable. Striking the right balance between performance and interpretability remains an active area of research and policy debate.

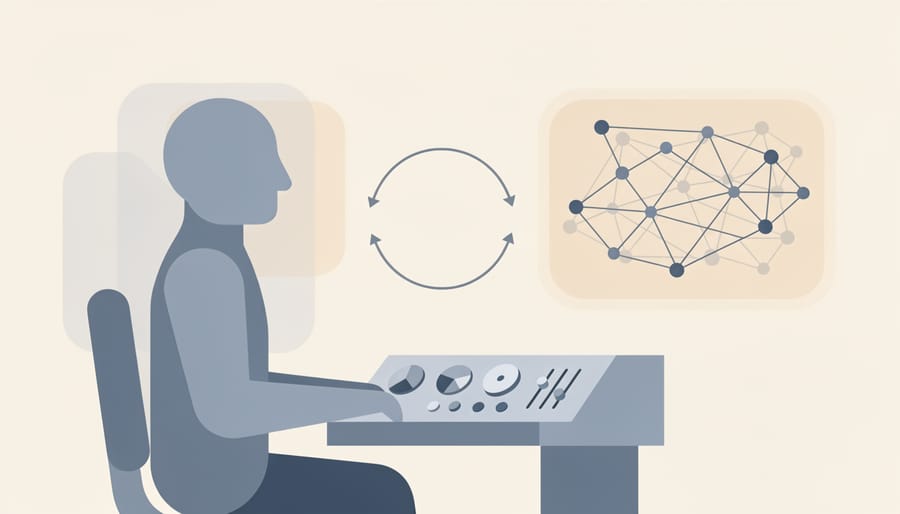

Human-in-the-Loop Systems

Human-in-the-loop systems represent a practical middle ground in AI accountability, keeping humans actively involved in critical decision points. Rather than letting AI systems operate autonomously, these designs require human verification before final actions are taken.

Consider content moderation on social media platforms. Instead of AI automatically removing posts, many platforms use a two-step approach: AI flags potentially problematic content, then human moderators make the final call. This creates a clear accountability chain. If something goes wrong, there’s a specific person who reviewed and approved the decision, not just an algorithm making choices in a black box.

The beauty of this approach lies in its flexibility. For low-stakes decisions like product recommendations, AI can operate more independently. But for high-stakes scenarios involving hiring, loan approvals, or medical diagnoses, human oversight becomes essential. A bank’s AI might screen loan applications and score them, but a human loan officer reviews borderline cases and makes final determinations.

This model doesn’t eliminate AI’s efficiency gains. It strategically deploys human judgment where it matters most. The trade-off is worthwhile: slightly slower processes in exchange for clearer responsibility and better outcomes. When errors occur, organizations can trace decisions back to specific individuals who had the authority and information to intervene, making accountability concrete rather than diffuse.
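In code, the routing logic can be quite small. The sketch below assumes a model score between 0 and 1 and treats anything high-stakes or borderline as requiring a named human reviewer. The thresholds, the `Decision` record, and the function names are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    case_id: str
    outcome: str       # "approved" or "denied"
    decided_by: str    # "model" or the reviewer's identifier


def needs_human_review(score: float, high_stakes: bool,
                       low: float = 0.3, high: float = 0.7) -> bool:
    """Send a case to a person when stakes are high or the score is borderline."""
    return high_stakes or low <= score <= high


def decide(case_id: str, score: float, high_stakes: bool,
           reviewer: Optional[str] = None,
           reviewer_outcome: Optional[str] = None,
           low: float = 0.3, high: float = 0.7) -> Decision:
    """Return a decision that always records who made the final call."""
    if needs_human_review(score, high_stakes, low, high):
        if reviewer is None or reviewer_outcome is None:
            raise ValueError("human review required but no reviewer decision given")
        return Decision(case_id, reviewer_outcome, decided_by=reviewer)
    outcome = "approved" if score > high else "denied"
    return Decision(case_id, outcome, decided_by="model")


# A low-stakes recommendation goes straight through.
print(decide("rec-1", 0.9, high_stakes=False))
# A loan is high-stakes, so a named officer makes the final call.
print(decide("loan-1", 0.5, high_stakes=True,
             reviewer="officer_17", reviewer_outcome="approved"))
```

The design choice that matters here is that the function refuses to return a decision for a reviewed case unless a reviewer is named, so the accountability trail cannot be skipped by accident.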

What This Means for You

Understanding AI accountability isn’t just theoretical—it directly affects your daily life, from loan applications to job searches to healthcare decisions. Here’s how you can take action when you encounter AI systems making decisions about you.

Start by asking the right questions. When a company uses AI to make decisions that impact you, ask: “Was AI involved in this decision?” If the answer is yes, follow up with: “Can you explain how the AI reached this conclusion?” and “What data did the system use?” You deserve to understand decisions that affect your life, and some jurisdictions, including the EU under the GDPR, legally require companies to provide meaningful information about automated decisions.

Watch for red flags that signal poor AI governance. Be cautious if a company refuses to disclose AI involvement, can’t explain how their system works in simple terms, or won’t tell you what data they’re using. Other warning signs include no clear process for appealing AI decisions, no human review option, or claims that their system is “completely objective” or “bias-free”—remember, AI reflects the data and choices of its creators.

When advocating for accountability, document everything. Save communications, record dates and times of interactions, and note any explanations given. If you believe an AI system has treated you unfairly, escalate through official channels: file complaints with consumer protection agencies, contact industry regulators, or reach out to advocacy organizations focused on algorithmic justice.

As a consumer, vote with your choices. Support companies that practice transparency about their AI systems and maintain clear accountability structures. Ask your elected representatives to support AI accountability legislation. Join community discussions about AI governance in your workplace, school, or local government.

Remember, demanding accountability isn’t about rejecting AI—it’s about ensuring these powerful tools serve everyone fairly. Every question you ask, every complaint you file, and every conversation you start contributes to building a more accountable AI ecosystem. Your voice matters in shaping how AI systems operate in society.

The window for establishing robust AI accountability frameworks is narrowing rapidly. As artificial intelligence systems become more sophisticated and deeply integrated into critical infrastructure, healthcare, criminal justice, and financial services, the cost of getting accountability wrong grows exponentially. We’re at a pivotal moment where the decisions made today about liability, transparency, and oversight will shape the AI landscape for decades to come.

Consider that autonomous systems are already making decisions that affect millions of people daily, yet many operate in regulatory gray zones with unclear chains of responsibility. Waiting until AI becomes even more embedded in society means we’ll be trying to retrofit accountability onto systems that weren’t designed with it in mind, a far more difficult and expensive proposition than building it in from the start.

The encouraging news is that change doesn’t rest solely with policymakers and tech giants. As consumers, employees, and citizens, we each have a role in shaping responsible AI development. This means asking questions about the AI systems we encounter, supporting companies that prioritize transparency and ethical practices, and advocating for stronger protections in our communities.

Stay informed about AI developments and emerging accountability standards. Follow reputable sources covering AI ethics, participate in public consultations when regulatory bodies seek input, and don’t hesitate to demand answers when AI systems impact your life. The future of AI accountability depends on an engaged, educated public that refuses to accept “the algorithm decided” as a sufficient explanation.