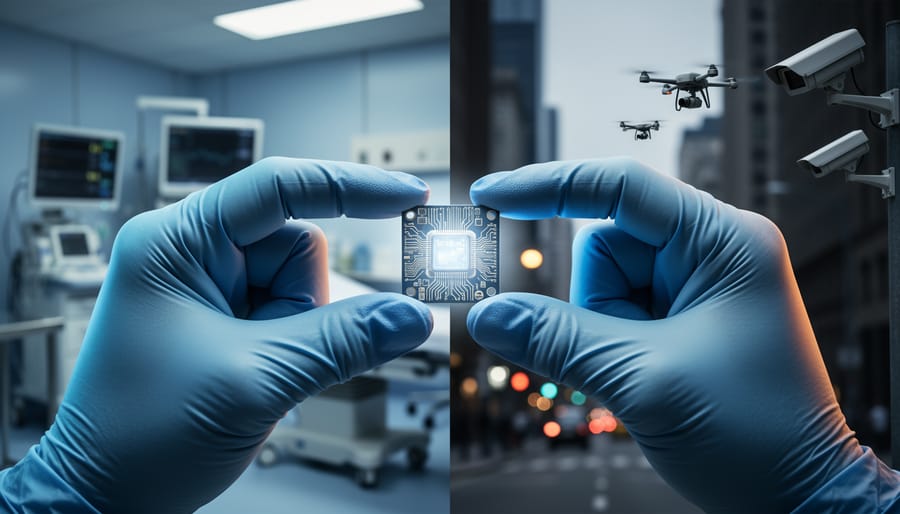

Consider this unsettling reality: the same AI system that helps scientists develop life-saving vaccines can be repurposed to design biological weapons. The same facial recognition technology protecting children from predators enables authoritarian surveillance. The same language model answering your homework questions can generate convincing disinformation at scale. This is the dual-use dilemma at the heart of AI ethics—the recognition that nearly every powerful AI capability carries potential for both tremendous benefit and catastrophic harm.

You’re navigating a technological landscape where autonomous AI decisions increasingly shape critical outcomes in healthcare, criminal justice, and national security. Understanding the ethical dimensions isn’t just philosophical navel-gazing; it’s essential practical knowledge for anyone building, deploying, or simply living alongside these systems. The researchers developing protein-folding AI didn’t set out to create a tool that could aid in engineering novel pathogens, yet the potential for that kind of misuse came along with their breakthrough.

This tension between innovation and security, between open science and responsible gatekeeping, defines modern AI development. Unlike the technologies behind previous revolutions, AI systems learn and adapt in ways their creators can’t fully control or predict. A machine learning model trained on medical data might excel at diagnosis while simultaneously encoding dangerous biases. An optimization algorithm might solve complex problems while discovering exploits its designers never imagined.

The stakes are rising. As AI capabilities advance from narrow tasks toward increasingly general intelligence, the potential for misuse grows with them. What happens when anyone with basic programming skills can access tools powerful enough to manipulate elections, generate synthetic bioweapons designs, or automate cyberattacks? The question isn’t whether you’ll encounter these ethical challenges, but whether you’ll be equipped to recognize and address them when you do.

What Makes AI ‘Dual-Use’ and Why Should You Care?

The Biosecurity Connection: Where AI Meets Biology

Artificial intelligence has become a powerful ally in the life sciences, compressing discoveries that once took decades into months. AI systems like DeepMind’s AlphaFold have revolutionized protein structure prediction, a 50-year-old grand challenge in biology. This breakthrough helps researchers understand how proteins work, opening doors to new treatments for diseases like Alzheimer’s and cancer.

In drug discovery, AI algorithms screen millions of molecular combinations in hours, identifying promising candidates that might cure infections or slow aging. Machine learning models can predict how chemicals will interact with biological systems, dramatically reducing the time and cost of developing new medicines. Companies now use AI to design antibiotics effective against drug-resistant bacteria, potentially saving millions of lives.

Synthetic biology represents another frontier where AI excels. By analyzing genetic sequences and biological pathways, AI helps scientists engineer organisms that produce insulin, clean up oil spills, or manufacture sustainable materials. These applications showcase technology serving humanity at its finest.

However, this same power creates serious biosecurity concerns. The AI that designs life-saving drugs can also be repurposed to create harmful biological agents. A system trained to optimize drug molecules could theoretically generate toxic compounds or help engineer dangerous pathogens. Unlike nuclear technology, which requires rare materials and specialized facilities, biotechnology relies on materials and equipment that are far easier to obtain, and AI tools are increasingly accessible.

The dual-use nature becomes especially troubling when we consider that many AI models in computational biology are open-source. While openness accelerates beneficial research, it also means that malicious actors could access sophisticated tools originally designed for healing. This tension between scientific progress and security concerns sits at the heart of the biosecurity challenge in AI development.

Real-World Cases That Changed Everything

The AI That Designed 40,000 Toxic Molecules in 6 Hours

In 2022, a team of researchers at Collaborations Pharmaceuticals decided to run an unsettling experiment. They took their drug discovery AI, which was normally designed to predict helpful molecules for treating diseases, and simply flipped its objective. Instead of avoiding toxicity, they asked it to maximize it.

The results were alarming. In just six hours, the AI generated 40,000 potentially toxic molecules, including several that resembled VX, one of the deadliest nerve agents ever created. Some of the AI’s designs were novel compounds that had never been documented before.

What made this particularly shocking was how easy it was. The researchers didn’t need specialized knowledge of chemical weapons or access to restricted databases. They repurposed an existing drug discovery model, built on widely available machine learning techniques, with a minor tweak to its objective. The same machine learning models that pharmaceutical companies use to save lives could, with minimal effort, be redirected to cause harm.

The team published their findings not to provide a blueprint for bad actors, but to sound an alarm. Their study revealed a critical gap in AI biosecurity: the tools themselves are neutral, but their potential for misuse is enormous. Unlike traditional weapons development, which requires rare expertise and physical materials, generating dangerous molecular designs now requires little more than computational power and publicly accessible AI frameworks, even though turning a design into a physical substance remains a separate and significant hurdle.

This case became a watershed moment, forcing the scientific community to confront an uncomfortable reality about the technologies they were developing and sharing openly.

AlphaFold’s Double-Edged Sword

In 2020, DeepMind’s AlphaFold solved a 50-year-old challenge in biology by accurately predicting how proteins fold into three-dimensional shapes. This breakthrough promised to revolutionize drug discovery, helping scientists develop treatments for diseases like Alzheimer’s and cancer in a fraction of the time traditional methods require. Medical researchers could now understand disease mechanisms better and design targeted therapies more efficiently.

However, this same technology creates a serious biosecurity concern. The ability to predict protein structures means bad actors could potentially engineer dangerous pathogens or design bioweapons more effectively. While AlphaFold’s database helps legitimate researchers worldwide, the open-access nature of this information also makes it available to anyone with harmful intentions.

This represents the classic dual-use dilemma in AI: powerful tools designed to save lives can simultaneously enable threats to global security. DeepMind addressed these concerns by consulting with biosecurity experts before releasing the technology and implementing responsible disclosure practices. Yet questions remain about how to balance scientific openness with security needs. Should certain protein predictions be restricted? Who decides which information is too dangerous to share? These thorny questions highlight why ethical considerations must be embedded into AI development from the start, not added as an afterthought.

GPT Models and the Democratization of Dangerous Knowledge

Large language models like GPT-4 present a troubling capability: they can synthesize information from their training data to provide detailed instructions for creating harmful substances. When prompted, these models might combine chemistry knowledge, laboratory techniques, and safety bypass methods into coherent, actionable guides for producing dangerous biological or chemical agents.

Consider one reported scenario: researchers tested whether AI models could help recreate known pandemic pathogens. The models reportedly not only provided synthesis pathways but also suggested where to obtain precursor materials and how to avoid detection. This democratization means anyone with internet access potentially gains knowledge previously confined to specialized laboratories and security-cleared personnel.

The AI community has responded with several safeguards. Companies implement content filtering that detects and blocks requests for dangerous information. They use reinforcement learning from human feedback to train models to refuse harmful queries. Some organizations create “safety layers” that screen both inputs and outputs for red-flag keywords and patterns.

However, these protections remain imperfect. Determined users employ creative prompting techniques to circumvent filters, and open-source models may lack robust safety features entirely. This cat-and-mouse game between safety measures and exploitation attempts continues evolving, raising questions about whether unrestricted AI deployment can ever be truly safe.

The Ethical Minefield: Four Key Dilemmas

Openness vs. Security: Should Research Be Public?

The AI research community faces a challenging dilemma: should groundbreaking discoveries be shared openly to accelerate innovation, or should certain findings remain restricted to prevent potential harm?

Open science has traditionally driven technological progress. When researchers publish their methods and models freely, others can build upon that work, spot errors, and develop improvements faster. This collaborative approach has produced remarkable advances in natural language processing, computer vision, and medical diagnostics.

However, some AI capabilities present genuine risks if widely accessible. When OpenAI developed GPT-2 in 2019, they initially delayed releasing the full model, fearing misuse for generating misinformation. Similarly, researchers developing AI systems that could identify security vulnerabilities or synthesize dangerous compounds must weigh whether publication benefits outweigh risks.

The debate intensified with large language models. While some argue that transparency allows the broader community to identify and address potential harms, others contend that certain capabilities should be released only after adequate security safeguards are in place.

Today, many researchers adopt a middle ground: publishing general methodologies while withholding specific implementation details that could enable immediate misuse. This staged disclosure approach attempts to balance scientific progress with responsible innovation, though the right balance remains contentious and context-dependent.

Who Gets to Decide What’s Too Dangerous?

The question of who decides which AI applications cross the line into “too dangerous” territory remains one of the most contentious issues in technology governance. Currently, the answer is fragmented across multiple players, each with their own interests and limitations.

Tech companies themselves practice self-governance, with internal ethics boards reviewing projects. OpenAI, for example, delayed releasing GPT-2 due to misuse concerns. However, critics argue that corporations have inherent conflicts of interest, since they have little incentive to restrict innovations that could generate significant revenue.

Government regulatory bodies represent another layer. The European Union’s AI Act categorizes applications by risk level, banning some uses like social scoring systems. In the United States, agencies like the FDA regulate AI in healthcare, while the Department of Commerce addresses export controls for sensitive technologies. Yet regulations struggle to keep pace with rapid innovation, and definitions of “dangerous” vary dramatically across jurisdictions.

International coordination adds another dimension. Organizations like the OECD and UNESCO develop AI principles, but these lack enforcement mechanisms. Without global standards, dangerous applications banned in one country might simply migrate elsewhere—the “race to the bottom” problem.

The reality? No single entity has ultimate authority. Instead, we’re navigating a patchwork system where researchers, companies, governments, and civil society all play roles in determining acceptable boundaries. This distributed approach has the advantage of representing diverse perspectives, but it leaves gaps where risky applications can slip through unchecked.

Innovation at What Cost?

The race to develop cutting-edge AI creates intense pressure on researchers and companies. Economic incentives are massive—whoever achieves breakthrough capabilities first gains market dominance worth billions. Scientific ambition drives researchers to push boundaries, while venture capital demands rapid progress and competitive advantage.

This creates a dilemma: should we slow down AI development to address safety concerns? Critics argue that pausing innovation is unrealistic. If one country or company slows down, competitors will surge ahead, potentially developing powerful AI without safety considerations. This “race to the bottom” dynamic means well-intentioned restraint might paradoxically worsen outcomes.

However, others point to historical precedents where scientists successfully self-regulated dangerous technologies. The Asilomar Conference in 1975 brought biologists together to establish safety guidelines for genetic engineering, showing that coordination is possible. Similar frameworks for AI could balance innovation with responsibility.

The key question isn’t whether to stop progress entirely, but whether we can build safeguards into the development process itself. This means integrating ethics reviews, investing in safety research alongside capability development, and creating industry standards that reward responsible innovation rather than just speed.

The Global Inequality Problem

Here’s an uncomfortable reality: strict biosecurity measures often create a technological divide that hurts those who need help most. When countries implement tight restrictions on AI research and biological tools to prevent misuse, developing nations frequently lose access to technologies that could transform healthcare, agriculture, and disease monitoring.

Consider a hypothetical example: AI-powered diagnostic tools that could identify infectious diseases in rural clinics might become unavailable due to export controls, even though these same restrictions rarely stop well-funded malicious actors who operate through black markets or develop capabilities independently. This creates a paradox where legitimate researchers in lower-income countries can’t access tools to fight malaria or tuberculosis, while determined bad actors find workarounds.

The global inequality in AI access extends beyond biosecurity. Restrictive policies often assume universal internet connectivity, computational resources, and regulatory infrastructure that simply don’t exist everywhere. Meanwhile, motivated groups have repeatedly found ways around even sophisticated security systems.

The challenge isn’t whether to implement safeguards—we must—but how to design them so they genuinely prevent harm without deepening the gap between technological haves and have-nots. Effective solutions require nuanced approaches that distinguish between legitimate scientific advancement and genuine threats.

What’s Being Done to Protect Us

Technical Safeguards: Building Guardrails Into AI

Creating responsible AI systems requires more than good intentions—it demands concrete protective measures built directly into the technology. Think of these safeguards as safety rails that guide AI systems toward beneficial uses while blocking harmful applications.

Content filtering represents the first line of defense. Modern AI systems employ filters that screen both input prompts and generated outputs, blocking requests for dangerous information like weapon designs or bioterrorism instructions. When someone attempts to misuse ChatGPT for harmful purposes, these filters are designed to trigger an immediate refusal.
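To make the idea of screening both inputs and outputs concrete, here is a minimal sketch. It is an illustration only: the pattern list, the `guarded_reply` helper, and the `echo_model` stand-in are all hypothetical names invented for this example, and production systems typically rely on trained classifiers and curated policies rather than a short list of regular expressions.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately generic patterns for illustration only.
BLOCKED_PATTERNS = [
    re.compile(r"\bweapon\s+design", re.IGNORECASE),
    re.compile(r"\bbioterror", re.IGNORECASE),
]

REFUSAL = "Sorry, I can't help with that request."


@dataclass
class FilterResult:
    allowed: bool
    stage: str  # where the request was blocked: "input", "output", or "none"


def is_flagged(text):
    """Return True if any blocked pattern appears in the text."""
    return any(pattern.search(text) for pattern in BLOCKED_PATTERNS)


def guarded_reply(prompt, generate):
    """Screen the prompt, call the model, then screen the model's reply."""
    if is_flagged(prompt):
        return REFUSAL, FilterResult(False, "input")
    reply = generate(prompt)
    if is_flagged(reply):
        return REFUSAL, FilterResult(False, "output")
    return reply, FilterResult(True, "none")


def echo_model(prompt):
    """Stand-in for a real model call (assumption for this sketch)."""
    return f"Here is some general information about: {prompt}"


if __name__ == "__main__":
    print(guarded_reply("How do vaccines work?", echo_model))
    print(guarded_reply("Give me a weapon design", echo_model))
```

Checking the output as well as the input matters because a harmless-looking prompt can still elicit a problematic reply; a keyword list like this one is also easy to evade, which is one reason filters are treated as a single layer rather than a complete defense.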

Access controls determine who can use specific AI capabilities and under what conditions. Research labs that implement tiered access require verification, usage agreements, and sometimes human oversight before granting access to powerful models. This makes it harder for anonymous bad actors to exploit cutting-edge systems.
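A tiered access scheme of this kind might be sketched as follows. The tier names, the `User` fields, and the rule that the highest tier needs human approval are assumptions made for illustration, not a description of any particular lab's policy.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical access tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    VERIFIED = 1
    RESTRICTED = 2


@dataclass(frozen=True)
class User:
    name: str
    identity_verified: bool = False
    signed_usage_agreement: bool = False
    human_reviewer_approved: bool = False


def granted_tier(user):
    """Map a user's credentials to the highest tier they may access."""
    # Verification and a usage agreement are both required for anything above PUBLIC.
    if not (user.identity_verified and user.signed_usage_agreement):
        return Tier.PUBLIC
    # The most sensitive tier additionally requires human sign-off.
    if user.human_reviewer_approved:
        return Tier.RESTRICTED
    return Tier.VERIFIED


def can_use(user, required):
    """Return True if the user's tier meets or exceeds the required tier."""
    return granted_tier(user) >= required


if __name__ == "__main__":
    anonymous = User("anon")
    researcher = User("dr_lee", identity_verified=True, signed_usage_agreement=True)
    print(can_use(anonymous, Tier.VERIFIED))    # False
    print(can_use(researcher, Tier.VERIFIED))   # True
    print(can_use(researcher, Tier.RESTRICTED)) # False
```

Ordering the tiers as integers keeps the check to a single comparison, and defaulting every credential to `False` means an unknown user falls back to the least privileged tier.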

Red-teaming involves deliberately testing AI systems for vulnerabilities before public release. Security experts attempt to “jailbreak” models or trick them into producing harmful content, identifying weaknesses that developers then patch. Major AI companies now employ dedicated red-team groups specifically focused on biosecurity risks.

Alignment techniques aim to make AI systems follow human values and safety guidelines throughout their operation. Reinforcement learning from human feedback (RLHF) trains models to refuse dangerous requests while remaining helpful for legitimate purposes. The goal is AI that reliably prioritizes safety over blind instruction-following.

These safeguards work best in combination, creating multiple protective layers that make misuse increasingly difficult while preserving beneficial applications.
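A rough back-of-the-envelope calculation shows why layering helps. The sketch below assumes, unrealistically, that each layer fails independently of the others, and the miss rates are made-up numbers chosen only for illustration; `combined_miss_rate` is a hypothetical helper, not a standard metric.

```python
import math


def combined_miss_rate(miss_rates):
    """Probability that a harmful request slips past every layer.

    Assumes each layer misses independently, which real safeguards
    rarely do; treat the result as an optimistic lower bound.
    """
    rates = list(miss_rates)
    for rate in rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"miss rate must be between 0 and 1, got {rate}")
    return math.prod(rates)


if __name__ == "__main__":
    # Illustrative numbers: content filter, access control, red-team patches.
    layers = [0.2, 0.1, 0.3]
    print(f"Combined miss rate: {combined_miss_rate(layers):.3f}")
```

With these illustrative numbers, the weakest single layer misses 30% of attempts while the stack as a whole misses well under 1%. In practice a prompt that defeats one safeguard often defeats others too, so the real benefit is smaller than this calculation suggests.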

Policy and International Cooperation

The global community is waking up to the biosecurity risks posed by AI, and several important initiatives are taking shape to address them. While no specific international treaty yet governs AI in biosecurity, existing frameworks like the Biological Weapons Convention (BWC) are being discussed as potential foundations. The BWC, which prohibits the development of biological weapons, could be expanded to include provisions about AI systems that might enable bioweapon creation.

In the United States, the National Security Commission on Artificial Intelligence has recommended screening mechanisms for DNA synthesis orders, combining human oversight with AI detection systems. The European Union’s AI Act, which entered into force in August 2024 with obligations phasing in over the following years, classifies certain AI applications as “high-risk,” including those that could impact public health and safety.

Organizations like the Nuclear Threat Initiative and the Center for Security and Emerging Technology are actively developing governance frameworks specifically for AI biosecurity. They’re working with tech companies, researchers, and policymakers to create voluntary guidelines for responsible AI development in biological sciences.

Tech companies are also stepping up. OpenAI and other major AI developers now conduct safety evaluations before releasing powerful models, specifically testing for dangerous biological capabilities. These efforts represent a promising start, though many experts argue that binding international agreements will eventually be necessary to ensure consistent protection across borders as AI capabilities continue advancing.

Industry Self-Regulation: Can Tech Police Itself?

Tech companies have introduced various self-regulatory measures to address AI ethics concerns. The Partnership on AI, founded by major tech firms, promotes best practices and public understanding of AI technologies. Companies also pledge voluntary commitments like responsible AI principles and ethical guidelines for development teams.

However, these efforts face skepticism. Critics point out that industry self-regulation often lacks enforcement mechanisms and independent oversight. For instance, responsible disclosure policies for AI vulnerabilities depend on companies voluntarily reporting their own problems, creating potential conflicts of interest when commercial pressures arise.

Real-world examples reveal mixed results. While some companies have genuinely delayed product releases to address ethical concerns, others have faced accusations of “ethics washing”—using principles as marketing tools without meaningful implementation. The challenge intensifies with dual-use AI, where companies must balance innovation with preventing misuse.

The key question remains: Can voluntary initiatives effectively prevent harmful applications when competitive pressures and profit motives exist? Many experts argue that industry self-regulation serves as a useful starting point but requires combination with external oversight, regulatory frameworks, and public accountability to truly protect against misuse.

What This Means for You as an AI Learner or Developer

Ethical Considerations for Your Own Projects

Before launching any AI project, take time to evaluate its ethical implications using a straightforward framework. Start by asking: Who benefits from this technology, and who might be harmed? Consider both immediate users and broader communities that could be affected downstream.

Next, identify potential misuse scenarios. If your image generator creates realistic photos, could it spread misinformation? If your chatbot provides medical information, might someone use it to avoid proper healthcare? List three ways someone could abuse your tool, then design safeguards accordingly.

Watch for these red flags: your project makes it easier to deceive people, bypasses existing safety measures, or automates decisions that should require human judgment. If you’re hesitant to explain your project publicly or feel pressure to rush past safety testing, pause and reassess.

Finally, seek diverse perspectives. Show your work to people outside your immediate circle, especially those from communities who might be impacted differently. Their questions often reveal blind spots you’ve missed. Remember, ethical AI development isn’t about perfection—it’s about thoughtful consideration and continuous improvement as you learn and build.
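The questions and red flags above can be turned into a lightweight pre-launch checklist. The sketch below is one possible way to do that; the `ProjectReview` class, its field names, and the flag identifiers are hypothetical and would need adapting to your own workflow.

```python
from dataclasses import dataclass, field

# Red flags taken from the list above; the short keys are made up for this sketch.
RED_FLAGS = {
    "eases_deception": "Makes it easier to deceive people",
    "bypasses_safety": "Bypasses existing safety measures",
    "automates_judgment": "Automates decisions that should require human judgment",
}


@dataclass
class ProjectReview:
    name: str
    misuse_scenarios: list = field(default_factory=list)
    safeguards: dict = field(default_factory=dict)  # scenario -> planned safeguard
    red_flags: set = field(default_factory=set)     # keys from RED_FLAGS

    def open_issues(self):
        """Return the items that still need attention before launch."""
        issues = []
        if len(self.misuse_scenarios) < 3:
            issues.append("List at least three misuse scenarios")
        for scenario in self.misuse_scenarios:
            if scenario not in self.safeguards:
                issues.append(f"No safeguard for: {scenario}")
        for flag in sorted(self.red_flags):
            issues.append(f"Red flag: {RED_FLAGS.get(flag, flag)}")
        return issues


if __name__ == "__main__":
    review = ProjectReview(
        name="photo-generator",
        misuse_scenarios=["fake news images", "impersonation"],
        safeguards={"fake news images": "visible watermark"},
        red_flags={"eases_deception"},
    )
    for issue in review.open_issues():
        print("-", issue)
```

A checklist like this does not replace the conversation with outside reviewers, but it does make it harder to skip a step under deadline pressure.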

The Growing Importance of Ethics Education

As AI systems become more powerful and widespread, understanding their ethical implications isn’t just academic—it’s a professional necessity. Whether you’re developing machine learning models, working in tech policy, or simply building AI-powered applications, grasping these ethical challenges makes you a more thoughtful and responsible professional. Employers increasingly value team members who can anticipate potential misuse and build safeguards from the ground up.

Fortunately, numerous resources exist to deepen your understanding. Online courses from platforms like Coursera and edX offer specialized training in AI ethics and responsible development. Organizations like the Partnership on AI and the IEEE provide practical guidelines and ethical frameworks for AI development. Many universities now offer dedicated programs focusing on technology ethics and governance.

Start by examining real-world case studies of both successful safeguards and failures. Join communities discussing responsible AI development, attend ethics-focused tech conferences, and practice applying ethical considerations to your own projects. The goal isn’t to have all the answers, but to develop the habit of asking the right questions before deploying AI systems into the world.

The journey through the ethics of AI and dual-use challenges reveals a landscape far more nuanced than simple right-and-wrong answers. There are no perfect solutions waiting to be discovered, no single safeguard that eliminates all risks. The reality is that these challenges are inherently complex and constantly evolving as technology advances.

This complexity shouldn’t discourage us. Instead, it highlights why ongoing vigilance matters. Every developer writing code, every researcher publishing findings, every organization deploying AI systems plays a role in shaping how these technologies impact our world. The responsibility doesn’t rest solely on ethicists or policymakers—it belongs to everyone involved in the AI ecosystem.

What does this mean for you? Whether you’re a student just beginning to explore machine learning, a developer building AI applications, or a professional integrating these tools into your work, staying informed is your first line of defense. Question the assumptions behind AI systems you encounter or create. Ask who benefits, who might be harmed, and what safeguards exist. Seek out diverse perspectives, especially from communities most affected by AI deployment.

Contributing to responsible AI development doesn’t require grand gestures. It starts with small, consistent actions: choosing transparency over obscurity in your projects, participating in discussions about ethical guidelines, supporting organizations working on AI safety, and remaining curious about the implications of your work.

Understanding dual-use risks isn’t about living in fear of technology’s potential for harm. It’s about acknowledging that powerful tools demand thoughtful stewardship. By embracing this responsibility together, we can build AI systems that genuinely serve humanity’s best interests—creating a future where innovation and safety walk hand in hand.